Does fiber enable 'greener' data centers?

by Meghan Fuller Hanna

In August, the U.S. Environmental Protection Agency (EPA; www.epa.gov) released a report to Congress on server and data center energy efficiency, touching off a wave of discussion on the subject. The majority of data centers still employ at least 50% copper cabling. Some industry insiders believe that the move toward "green" operations will combine with price/performance advantages to make 10 Gbits/sec the tipping point for more fiber in data center applications.

According to the EPA, server and data center energy use in the U.S. accounted for about 61 billion kilowatt-hours (kWh) in 2006, or roughly 1.5% of the total U.S. electricity consumption. That's the equivalent electricity use of approximately 5.8 million average U.S. households, says the report. Put another way, the country's data centers use more electricity than all of its color television sets combined.

The report also highlights an alarming trend: The energy use of U.S. servers and data centers in 2006 was more than double the electricity used for this purpose in 2000. Under current trends, energy use could double again by 2011 to more than 100 billion kWh, for a total annual cost of $7.4 billion. The EPA estimates that the peak load on the power grid from U.S. data centers is approximately 7 GW; if current trends continue, demand could reach 12 GW by 2011, which would require an additional 10 power plants.
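The EPA figures above imply a few per-unit numbers that the report summary does not spell out. The short Python sketch below derives them by simple division; the function names are illustrative, and because the 2011 projection is quoted only as "more than 100 billion kWh," the implied price per kilowatt-hour should be read as an upper bound rather than an EPA figure.

```python
# Figures quoted from the EPA report in the text above.
ENERGY_2006_KWH = 61e9         # U.S. server and data center use in 2006
HOUSEHOLD_EQUIVALENT = 5.8e6   # "approximately 5.8 million average U.S. households"
ENERGY_2011_KWH = 100e9        # "more than 100 billion kWh" (treated here as a floor)
COST_2011_USD = 7.4e9          # projected total annual cost
PEAK_NOW_GW = 7.0              # estimated current peak load
PEAK_2011_GW = 12.0            # projected peak load if trends continue
NEW_PLANTS = 10                # additional power plants cited


def implied_kwh_per_household():
    """Annual kWh per household implied by the household comparison."""
    return ENERGY_2006_KWH / HOUSEHOLD_EQUIVALENT


def implied_price_per_kwh():
    """Upper bound on the $/kWh behind the 2011 cost projection."""
    return COST_2011_USD / ENERGY_2011_KWH


def implied_mw_per_plant():
    """Average plant size implied by the added peak load."""
    return (PEAK_2011_GW - PEAK_NOW_GW) * 1000 / NEW_PLANTS


if __name__ == "__main__":
    print(f"kWh per household per year: {implied_kwh_per_household():,.0f}")
    print(f"Implied price, $/kWh (upper bound): {implied_price_per_kwh():.3f}")
    print(f"Implied MW per new plant: {implied_mw_per_plant():.0f}")
```

In other words, the report's numbers are consistent with a household using roughly 10,500 kWh per year and with new plants of about 500 MW each.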

Today's data centers feature hundreds of servers and storage devices, and further growth is anticipated as data processing, storage, and communications needs increase. But "what's really, really pushing this is the advent of blade servers," explains Kam Patel, technical director at ADC (www.adc.com), "which take very little space." For example, a single rack that might have housed 21 traditional, 2U servers now can hold anywhere from 100 to 150 blade servers in the same footprint.

Blade servers are particularly well suited to virtualization, which enables data center managers to reduce the number of servers and other equipment in the center. Typically, if an enterprise is running 14 applications, 14 different servers would be used to support those applications, plus additional servers for redundancy. "By virtualizing [your server], you can throw many applications on top of the virtual server and dedicate how much of the computing power you want to go toward each application," says Patel. "By doing those things -- virtualization and using blade servers -- we can reduce the number of servers that are within the data center."

But the blade server has its disadvantages too. Though it may seem counterintuitive, blade servers contribute to the overall energy conundrum because the typical data center -- even one built just 5 years ago -- was not designed to handle the requisite power and cooling needs. "They were built on a 30-to-50-W per square foot basis," Patel recalls. "In order to run them efficiently, blade servers require anywhere from 100 to 150 W per square foot. So that's why there's such a massive rebuild going on right now: to take care of the power crunch and the cooling that is required."

The power and cooling conundrum

So how can fiber help alleviate this crunch? Most data centers are designed on the raised floor principle: The raised floor acts as a plenum area in which cold air is circulated, released through perforated tiles in the floor, and then pulled into the networking equipment to cool it. "One of the things that putting more fiber into the data center allows you to do is remove the media from underneath," says Patel. "Typically, copper cables are fairly weighty, so they are put under floor. This actually creates what's known as an air dam; it restricts the flow of air within the floor tile." (See "Stay Out of Trouble with TIA 942" for more information.)

All sources interviewed for this story note that data center managers need to deploy a significant amount of copper cable in their pathways and spaces, racks, and vertical managers to get the equivalent capacity of fiber. For example, says Doug Coleman, manager of technology and standards at Corning Cable Systems (www.corningcablesystems.com), "Within a 0.7-in. optical cable, I can get 216 fibers that would do 108 10-gig circuits. Within that same effective area, I can only get two augmented cables of copper." Moreover, he notes, a 200-ft length of augmented Cat6 cable, used to serve 108 circuits, weighs roughly 1,000 lb; at the same length, a 216-fiber cable weighs just 40 lb.
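Coleman's comparison reduces to a handful of ratios, sketched below in Python. The weights and fiber counts come from his quote; the two-fibers-per-circuit figure is implied by 216 fibers serving 108 circuits, and the one-circuit-per-copper-cable figure is an assumption made here for the density comparison.

```python
# Figures from Coleman's comparison in the text above.
FIBERS_PER_CABLE = 216
FIBERS_PER_CIRCUIT = 2          # implied by 216 fibers -> 108 circuits
COPPER_CABLES_SAME_AREA = 2     # augmented Cat6 cables in the same effective area
CIRCUITS_PER_COPPER_CABLE = 1   # assumption: one 10-gig circuit per copper cable
COPPER_WEIGHT_LB = 1000.0       # 200 ft of augmented Cat6 serving 108 circuits
FIBER_WEIGHT_LB = 40.0          # 200 ft of 216-fiber cable


def fiber_circuits():
    """Number of 10-gig circuits carried by the optical cable."""
    return FIBERS_PER_CABLE // FIBERS_PER_CIRCUIT


def density_ratio():
    """Circuits per unit of pathway area, fiber relative to copper."""
    copper_circuits = COPPER_CABLES_SAME_AREA * CIRCUITS_PER_COPPER_CABLE
    return fiber_circuits() / copper_circuits


def weight_ratio():
    """Copper weight relative to fiber weight for the same 108 circuits."""
    return COPPER_WEIGHT_LB / FIBER_WEIGHT_LB


if __name__ == "__main__":
    print(f"Circuits in the optical cable: {fiber_circuits()}")
    print(f"Circuit density, fiber vs. copper: {density_ratio():.0f}x")
    print(f"Weight, copper vs. fiber: {weight_ratio():.0f}x")
```

Under those assumptions, the optical cable carries 54 times as many circuits in the same cross-section at one twenty-fifth of the weight.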

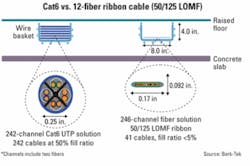

Put another way, Lisa Huff, data center applications engineer at Berk-Tek (www.berktek.com), offers a comparison of a 12-fiber ribbon cable versus Cat6 cable, which, she notes, is smaller than the 10-gig copper cable (see Fig. 1). The difference is "an order of magnitude in your wire basket," says Huff. "In other words, you can get equivalent channel capacity with fiber for less than 5% of the fill ratio of the tray, which means you're not blocking your airflow and you're saving space."

As Patel notes, the relatively lighter-weight fiber facilitates the use of overhead fiber-optic raceways (see photo above). "By using this raceway, you can remove cabling from under floor, which allows the passive cooling to occur much more efficiently."

It turns out that cooling is a very big deal. According to the EPA's report, the power and cooling infrastructure that supports IT equipment in the data center accounts for 50% of the total energy consumption of the data center. And analyst firm IDC (www.idc.com) estimates that by 2010, every $1 spent on new server equipment will require $0.71 for power and cooling.

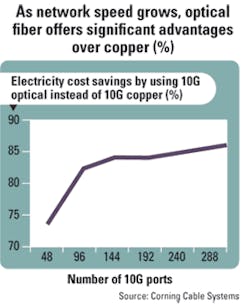

As more data centers implement blade servers, more power is consumed, more heat is generated, and cooling needs increase -- but fiber can help alleviate some of this stress, says Coleman. Using a metric from the EPA that states that for each kilowatt of power used to drive the network electronics, 1 kW of power is required for cooling, Corning Cable Systems has calculated the energy consumption of copper versus fiber as a function of 10-Gbit/sec electronics (see Fig. 2). "At 288 ports, if you look at the network energy as well as the energy consumed to drive the cooling electronics, we're showing an overall 86% efficiency in energy with 10-gig optical compared to copper," notes Coleman. "That's huge."
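The structure of that calculation can be sketched in a few lines of Python. The inputs behind Corning's figure are not given in the text, so the per-port wattages below are borrowed from the transceiver numbers Coleman cites elsewhere in this article (about 0.5 W for SFP+ and 10 W at the low end for 10GBase-T); they are stand-ins, and the result is not expected to reproduce the 86% figure.

```python
def total_power_w(ports, watts_per_port, cooling_w_per_w=1.0):
    """Network power plus the cooling power needed to remove its heat.

    cooling_w_per_w is the EPA-derived metric quoted above:
    1 W of cooling for every 1 W of network electronics.
    """
    network = ports * watts_per_port
    return network * (1.0 + cooling_w_per_w)


def savings_fraction(ports, optical_w, copper_w, cooling_w_per_w=1.0):
    """Fraction of total (network + cooling) power saved by optical ports."""
    optical = total_power_w(ports, optical_w, cooling_w_per_w)
    copper = total_power_w(ports, copper_w, cooling_w_per_w)
    return 1.0 - optical / copper


if __name__ == "__main__":
    PORTS = 288          # port count from Coleman's example
    OPTICAL_W = 0.5      # assumed: typical SFP+ draw cited in this article
    COPPER_W = 10.0      # assumed: low end of the 10GBase-T range cited
    print(f"Optical total: {total_power_w(PORTS, OPTICAL_W):.0f} W")
    print(f"Copper total:  {total_power_w(PORTS, COPPER_W):.0f} W")
    print(f"Savings: {savings_fraction(PORTS, OPTICAL_W, COPPER_W):.0%}")
```

Because the same cooling multiplier applies to both media, it doubles the absolute wattage on each side but leaves the percentage savings unchanged; the benefit shows up in the kilowatts that no longer need to be cooled.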

The 10G tipping point

When asked about the percentage of fiber versus copper currently deployed in U.S. data centers, sources interviewed here suggest ratios that run the gamut from 90:10 copper/fiber to 50:50 copper/fiber. (All agree that the SAN portion of the data center, with its reliance on the Fibre Channel protocol, is almost all fiber at this point.)

"It's really hard to justify putting one optical port in over a copper port -- at the gigabit level, for sure -- because they are so cheap for copper," says Huff, who notes that the majority of enterprises today are still running at the gigabit level. "But at 10 gig, that might be the tipping point."

IEEE 802.3an has been ratified for 10 Gbits/sec over copper, and while copper cabling and server NICs are available, there are no 10-Gbit/sec copper switches on the market yet. "If you need 10 gig today and you're going any kind of distance, you essentially do need fiber," Patel confirms.

Today, most 10-Gbit/sec connections are found in data centers that support large financial institutions, for example, but that situation will likely change as more IT managers are introduced to fiber's economic and green benefits. "Fiber, in some ways, has always been disadvantaged at the network level because it's always been positioned as a premium-priced solution," muses Coleman. "But we believe at 10 Gig and beyond, the pricing of 10-gig optics is going to offer low capex as well as lower operating expenses."

Coleman reports that the use of SFP+ transceivers for 10GBase-SR applications, expected this year, will also result in energy and cost efficiency versus 10GBase-T electronics. The SFP+ multisource agreement (MSA) specifies a maximum power rating of 1 W, but Coleman says the actual, typical power consumption is only about 0.5 W. Compare this with a 10GBase-T implementation, which requires 10 to 15 W to drive the electronics at the PHY level -- and only for distances out to 100 m. The SFP+ implementation, by contrast, supports distances out to 300 m.

Moreover, notes Coleman, the SFP+ form factor supports up to 48 ports on a line card. Forty-eight multiplied by 0.5 W per port results in 24 W required to drive a line card. Typical line cards allocate around 60 W, says Coleman, "so we are well within that 60-W budget." The 10GBase-T implementation requires 10 to 15 W per port. Divide the 60-W budget by 10 W per port, and you are left with six ports that can be supported on a 10-Gbit/sec copper-based line card.
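Coleman's line-card arithmetic is easy to reproduce. The Python sketch below uses only the figures quoted above (a 60-W card budget, 0.5 W per SFP+ port, and 10 to 15 W per 10GBase-T port); the function names are illustrative, and the count of copper cards needed to match a 48-port optical card is a derived figure, not one Coleman states.

```python
import math

LINE_CARD_BUDGET_W = 60     # typical line-card power allocation
SFP_PLUS_PORTS = 48         # ports supported by the SFP+ form factor
SFP_PLUS_W = 0.5            # typical draw per SFP+ port
COPPER_W_LOW = 10           # 10GBase-T PHY, low end
COPPER_W_HIGH = 15          # 10GBase-T PHY, high end


def card_power_w(ports, watts_per_port):
    """Power needed to drive every port on one line card."""
    return ports * watts_per_port


def max_ports(budget_w, watts_per_port):
    """Whole ports that fit within a line card's power budget."""
    return int(budget_w // watts_per_port)


def cards_needed(target_ports, ports_per_card):
    """Line cards required to reach a target port count."""
    return math.ceil(target_ports / ports_per_card)


if __name__ == "__main__":
    print(f"48-port SFP+ card: {card_power_w(SFP_PLUS_PORTS, SFP_PLUS_W):.0f} W")
    for watts in (COPPER_W_LOW, COPPER_W_HIGH):
        ports = max_ports(LINE_CARD_BUDGET_W, watts)
        cards = cards_needed(SFP_PLUS_PORTS, ports)
        print(f"10GBase-T at {watts} W/port: {ports} ports per card, "
              f"{cards} cards to match 48 ports")
```

At 10 W per port, matching a single 48-port optical card takes eight copper line cards; at 15 W per port, only four ports fit per card and the count rises to 12.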

"To get the same aggregate bandwidth capabilities [as the optical implementation], I have to add multiple 10-gig copper line cards," Coleman explains. "So it's going to take additional power not only to drive those per port, but it's also going to take the power required to drive the line cards."

Coleman also suggests comparing network electronics not only at the switch level but also at the interface level. Server interface or adapter cards draw power from the PCI bus on the server, which typically provides about 25 W. Anything beyond 25 W requires an external power supply. Coleman says Ethernet adapter cards on the market today consume just under 25 W and support maximum transmission distances of about 30 m. Longer distances would require more power, which, in turn, would necessitate the use of an additional external power supply. "But with fiber on this NIC card for 10GBase-SR," says Coleman, "power levels there are around 9 W or less. That's a big issue these days with servers and the amount of power they consume. Anything you can do to reduce the amount of power being used by the servers, for all applications, is a good thing," he contends.

In summary, the sources interviewed for this story believe that deploying optical fiber in the data center will reduce energy consumption, increase cooling efficiency, and improve overall rack and floor space utilization within the facility. And at 10 Gbits/sec, it may prove more economical than copper.

Fiber looks to play an even larger role in the data center when enterprises migrate to speeds above 10G in the future. "When you look at what the IEEE is doing, they are looking to service 40 Gbits/sec and 100 [Gbits/sec] as the next speeds -- 40 to the servers and 100 in the core," says Patel. "And both of those are being looked at over fiber right now, multimode and singlemode. That will lend itself to more people thinking, 'Okay, 10G is available today, and I know I want to get to 40 and 100. So what should my infrastructure look like?'" he muses.

Stay out of trouble with TIA 942

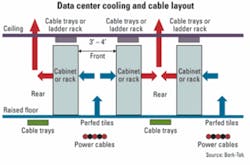

One way in which enterprises can reduce their energy consumption is to follow the hot aisle/cold aisle recommendation detailed in the Telecommunications Industry Association's (www.tia.org) TIA 942 Telecommunications Infrastructure Standard for Data Centers. Though it was released in 2005, "there are a lot of existing data centers out there that don't employ these practices," notes Lisa Huff, data center applications engineer at Berk-Tek (www.berktek.com).

TIA 942 is designed to facilitate airflow within the data center and dissipate the heat generated by concentrated clusters of equipment.

Here's how it works: Racks of servers and other equipment should be arranged in alternating patterns of "hot aisles" and "cold aisles." The cold aisle features racks of equipment placed face to face, while in the hot aisle server racks are placed back to back.

Cold air is expelled from perforated tiles in the raised floor in front of the networking equipment, which typically features fans that pull the cold air in to cool the electronics. The hot air is then evacuated out the back of the server, into the hot aisle, where it is drawn into the ventilation system in the ceiling.

In short, says Huff, "The TIA specification will keep you out of trouble. If you have a new data center you're putting in, it's really critical -- really, really critical -- to do a hot aisle/cold aisle design."