Switch-farm architecture overcomes backbone bottlenecks

By concentrating all network switches in one location, workgroup application servers are connected directly to the switches servicing their users.

Daniel Harman, 3M

Switch-farm networking architecture, which takes advantage of a collapsed fiber backbone, can dramatically improve network performance by overcoming backbone bottlenecks. The advent of server farms has funneled nearly all traffic through the network core, where it runs up against backbone bandwidth limitations that commonly reduce a 100-Mbit/sec port to a real capacity of no more than 10 Mbits/sec.

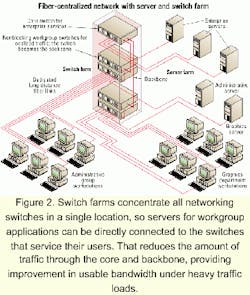

Switch farms address this problem by concentrating all networking switches in a single location so that servers for workgroup applications can be directly connected to the switches that service their users. That can dramatically reduce the amount of traffic through the core and backbone, often providing a two- to 10-fold improvement in usable bandwidth under heavy traffic loads.

Backbone bottlenecks have arisen as a result of the historical development of local area networks. In the early to mid-1980s, Novell (Provo, UT) and 3Com Corp. (Santa Clara, CA) helped to develop a mass market for networking. Novell built a low-cost operating system and freely licensed the NE1000 chipset design to manufacturers like 3Com that built low-cost, PC-based network adapters. These early networks were designed around the principle that 80% of a workgroup's traffic should remain within the workgroup and only 20% should flow between workgroups. Building space plans were often organized to give each member of the workgroup a short enough cable path to the workgroup's network hub or switch.

In the late 1980s, the implementation of enterprise services such as e-mail increased the movement of traffic over the backbone. Initially, the servers used to provide these services were often located in the workgroup's telecommunications closet. Over time, however, organizations began to develop more and more enterprise and workgroup applications such as enterprise resource planning, time and attendance applications, data warehousing, and Internet access. This proliferation of servers led to major problems in providing the required management services and security at the department level.

As a result, most organizations moved their servers to the core, in a configuration that has become known as a server farm. Concentrating servers in a central location substantially reduces the administration workload and makes it possible to provide a secure, controlled environment for mission-critical applications. This approach increased the proportion of traffic moving over the backbones and has become one of the driving factors for higher backbone bit rates, especially in larger organizations.

In response to this shift toward server farms, manufacturers began developing product lines for the multitiered network design that server farms require. One reason for the multiple tiers is that in a typical distributed copper network, no end user can be more than 100 m from the nearest switch, so switches must be spread throughout the building.

These products have become segmented into high-end core switches and low-end workgroup, or edge, switches. Core switches usually have a nonblocking architecture, which means the total traffic the switch can carry is equal to or greater than the sum of the bandwidth of all its ports. They are usually chassis-based so that all core traffic is switched on a single switching fabric. They generally have a very complete feature set and very-high-quality service. They also have the highest cost per port of any network switch.

Workgroup, or edge, switches, on the other hand, are less expensive and offer lower performance. Typically, they have a blocking architecture: a 24-port, 100-Mbit/sec switch, for example, might have a total switching capacity of only 1.5 Gbits/sec. Although many manufacturers advertise a nonblocking architecture, that claim holds only for the models with the lowest port counts; the higher-density models that include gigabit uplinks and stacking ports can be oversubscribed by a factor of three or more. Part of the reason switch manufacturers face little pressure to move to nonblocking designs is that the restrictions at the backbone level are even more severe: nearly all workgroup switches have a total capacity greater than that of the common gigabit uplink. With 80 users on a switch sharing a gigabit uplink, each user is limited to about 12 Mbits/sec of bandwidth (see Figure 1).
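The two figures in the preceding paragraphs are simple ratios. The sketch below reproduces them, assuming port bandwidth is counted in one direction only (matching the "sum of the bandwidth of all its ports" definition above) and that every user transmits across the uplink at once. The function names are illustrative, not taken from any vendor tool.

```python
def fabric_demand_gbps(ports: int, port_mbps: float) -> float:
    """Aggregate one-direction port bandwidth, in Gbit/s."""
    return ports * port_mbps / 1000


def oversubscription(ports: int, port_mbps: float, fabric_gbps: float) -> float:
    """Ratio of aggregate port bandwidth to switching capacity.

    A value above 1 means the switch is blocking: its ports can offer
    more traffic than the fabric can carry.
    """
    return fabric_demand_gbps(ports, port_mbps) / fabric_gbps


def per_user_share_mbps(uplink_mbps: float, users: int) -> float:
    """Even share of an uplink when all users send across it at once."""
    return uplink_mbps / users


if __name__ == "__main__":
    # 24 ports at 100 Mbit/s on a 1.5-Gbit/s fabric (example from the text)
    print(round(oversubscription(24, 100, 1.5), 2))  # 1.6
    # 80 users sharing one gigabit uplink
    print(per_user_share_mbps(1000, 80))  # 12.5
```

The 12.5-Mbit/sec result is the "about 12 Mbits/sec" per-user limit cited above; real shares vary because users rarely all transmit at the same moment.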

Although switch manufacturers have plans for more capable core switches, that doesn't address the true bottleneck in many networks: the backbone link. Because backbones must be standardized, individual manufacturers can't rapidly introduce new and faster ones. In fact, IEEE committees standardize backbone bit rates in fixed steps: 10, 100, 1,000, and 10,000 Mbits/sec. The essential problem is that these limits on backbone bandwidth have run head-on into several trends that have substantially increased backbone traffic.

First of all, the move toward server farms has the effect of concentrating network traffic at the core. With servers for both enterprise and workgroup applications in one location, they have to be connected to the core switches. They cannot be connected to the workgroup switches, which must be located far from the core to stay within the 100-m link-length limit of copper cabling. In effect, server farms have flipped the 80/20 rule on its head. Now, 80% of the traffic moves over the backbone, while only 20% or even less remains within a workgroup.
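A rough way to see what flipping the 80/20 rule does to the backbone is to multiply total user traffic by the share that leaves the workgroup. The sketch below assumes a hypothetical average of 10 Mbits/sec per user on an 80-user switch; the function name and that average are illustrative only.

```python
def backbone_load_mbps(users: int, mbps_per_user: float, local_percent: int) -> float:
    """Traffic (Mbit/s) that leaves the workgroup switch and crosses the backbone.

    local_percent is the share of traffic that stays within the workgroup:
    80 for the original 80/20 rule, 20 once server farms flip it.
    """
    if not 0 <= local_percent <= 100:
        raise ValueError("local_percent must be between 0 and 100")
    return users * mbps_per_user * (100 - local_percent) / 100


if __name__ == "__main__":
    # Hypothetical workgroup: 80 users averaging 10 Mbit/s each
    print(backbone_load_mbps(80, 10, 80))  # 160.0 (classic 80/20 rule)
    print(backbone_load_mbps(80, 10, 20))  # 640.0 (rule flipped by server farms)
```

Under these assumptions the same users put four times as much traffic on the uplink, consuming most of a gigabit link before any growth in application demand.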

The concentration of traffic at the core comes at the worst possible moment, just when new applications such as higher-bandwidth voice and videoconferencing are demanding rapidly increasing bandwidth. Some of this demand comes from ever-faster Internet and wide-area links and even from the proliferation of new networked appliances such as Internet Protocol (IP) telephones. Although IP telephony doesn't consume much bandwidth, it does require quality of service. Other demands come from heating and environmental control systems, machine tools, and even refrigerators and microwave ovens that are all becoming Ethernet devices. The overall result is the congestion that many large networks are experiencing today.

Fiber makes possible a solution to this problem: the switch-farm architecture, in which all the switches are located at the core so that workgroup applications can be connected to the switches that serve the people using them. This architecture requires a collapsed backbone, in which cables make direct home runs from the switch farm to each individual user. That approach, of course, is not an option with conventional copper networks because of copper's 100-m distance limitation. With fiber cabling, however, link lengths of up to 5 km can be achieved at 1 Gbit/sec, making it possible to collapse the backbone in virtually any installation. It then becomes possible to eliminate the edge devices altogether and give users direct access to the core environment. In essence, each user has a dedicated private backbone to a feature-rich core environment.

To actually reduce the amount of traffic moving over the backbone links between switches, only enterprise services should be plugged directly into the core switch. Enterprise services are those routinely accessed by all members of an organization, such as e-mail and Internet access. Group-specific services, however, should not be plugged into the core switch; instead, they should be plugged into the same switches as the people using them, and those workgroup switches reside in the same racks as the core switch. For example, members of the accounting department should be plugged into the same switch as the server on which their accounting system resides. This, in effect, isolates group-specific traffic within a switch while preserving the backbone link for global traffic. It also does so without breaking up the server farm, as long as the server farm is within cable range of the switch farm (see Figure 2).
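The placement rule above can be checked by tallying which flows stay on one switch and which must cross a link between switches. In this minimal sketch the user names, server names, switch names, and traffic figures are all hypothetical; only the rule (workgroup servers on the workgroup's switch, enterprise services on the core) comes from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    user: str    # workstation name (hypothetical)
    server: str  # server name (hypothetical)
    mbps: float  # average traffic for this user/server pair


def split_traffic(flows, switch_of):
    """Return (intra_switch_mbps, inter_switch_mbps).

    switch_of maps every user and server to the switch it is plugged into.
    A flow whose endpoints share a switch stays on that switch's fabric;
    any other flow must cross a backbone link between switches.
    """
    local = 0.0
    remote = 0.0
    for f in flows:
        if switch_of[f.user] == switch_of[f.server]:
            local += f.mbps
        else:
            remote += f.mbps
    return local, remote


# Hypothetical accounting workgroup plus an enterprise e-mail server.
FLOWS = [
    Flow("acct-user-1", "acct-server", 20),
    Flow("acct-user-2", "acct-server", 20),
    Flow("acct-user-1", "mail-server", 5),
]
BASE = {"acct-user-1": "sw-acct", "acct-user-2": "sw-acct", "mail-server": "core"}

# Conventional server farm: the accounting server hangs off the core switch.
SERVER_FARM = {**BASE, "acct-server": "core"}
# Switch farm: the accounting server shares its users' switch.
SWITCH_FARM = {**BASE, "acct-server": "sw-acct"}

if __name__ == "__main__":
    print(split_traffic(FLOWS, SERVER_FARM))  # (0.0, 45.0)
    print(split_traffic(FLOWS, SWITCH_FARM))  # (40.0, 5.0)
```

Moving only the group-specific server changes which flows cross a backbone link; the e-mail traffic still goes to the core, as intended for enterprise services.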

A critical requirement for this new architecture is optical-fiber cabling, which is needed to deliver the link lengths required to connect users directly to the switch farm, because fiber-optic cable is not bound by copper's 100-m limit. In this new design, one or more high-fiber-count cables carrying dedicated fiber connections to each workstation replace the single fiber-optic uplink to each telecommunications room. In other words, switch ports that would normally be distributed among telecommunications rooms on various floors are centralized in a switch farm within the main equipment room.

At one time, the high cost and long installation time of fiber cabling would have been a serious obstacle to implementing this type of architecture. In recent years, new fiber technologies have brought the cost of passive components nearly in line with that of copper. Active fiber-optic components, particularly fiber network interface cards (NICs) and switch ports, have remained more expensive than their copper counterparts. But the move to a switch farm with a collapsed backbone can deliver overall cost savings by shrinking, or even eliminating, telecommunications rooms and by offering more on-demand bandwidth at a lower cost per megabit.

A new and slightly different type of switch should be used in this environment: a cross between a core switch and a workgroup switch. It must have a nonblocking architecture, since it now represents the real network backbone. It must be reconfigurable so it can act as either a core or a workgroup switch. It should be fully managed and offer an advanced feature set, including quality of service, and it will, of course, need lots of fiber ports. It should be cheaper than a traditional core switch, yet more capable than a typical edge switch.

One example of this new type of switch has 48 fiber ports at 100 Mbits/sec plus gigabit uplinks for local core connectivity. Its modular design supports a variety of interface configurations, offering considerable flexibility. Plug-in modules are available at speeds of 10 Mbits/sec, 100 Mbits/sec, and 1 Gbit/sec, with VF-45, LC, RJ-45, and SC connector interfaces to ensure connectivity with existing infrastructure and hardware.
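Applying the nonblocking definition given earlier to a switch like this one means summing every port's bandwidth. The text does not say how many gigabit uplinks the example switch has, so the sketch below assumes two; that count and the function names are hypothetical. Many vendors quote fabric capacity counting both directions of full-duplex traffic, which doubles the figure, so that is offered as an option.

```python
def nonblocking_capacity_gbps(port_groups, count_both_directions=False):
    """Switching capacity (Gbit/s) needed for a nonblocking design.

    port_groups is an iterable of (port_count, port_mbps) pairs. By default
    each port is counted once, matching the one-direction definition of
    nonblocking; set count_both_directions=True for full-duplex figures.
    """
    total_mbps = sum(count * mbps for count, mbps in port_groups)
    if count_both_directions:
        total_mbps *= 2
    return total_mbps / 1000


def is_nonblocking(fabric_gbps, port_groups, count_both_directions=False):
    """True if the fabric can carry the sum of all port bandwidth."""
    return fabric_gbps >= nonblocking_capacity_gbps(port_groups, count_both_directions)


# 48 fiber ports at 100 Mbit/s plus two gigabit uplinks (uplink count assumed)
PORTS = [(48, 100), (2, 1000)]

if __name__ == "__main__":
    print(nonblocking_capacity_gbps(PORTS))        # 6.8
    print(nonblocking_capacity_gbps(PORTS, True))  # 13.6
    print(is_nonblocking(1.5, [(24, 100)]))        # False: the blocking edge switch
```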

Fiber offers a number of other advantages for core-to-desktop connections. First, fiber supports a very wide range of bit rates, currently up to 10 Gbits/sec on a single wavelength. All of these bit rates will operate on a single cable type (e.g., 62.5-micron multimode), as well as on various other multimode and singlemode cables. Fiber uses a simple duplex transceiver to transmit and receive; to achieve a faster bit rate, the transceiver is simply run faster. In comparison, transmission schemes such as gigabit-over-copper require complex encoding schemes, multiplexed transmission over multiple twisted pairs, and higher-frequency cabling such as Category 5E or Category 6.

The bottom line is that multimode fiber supports 50 times the bandwidth of the best Category 5 cabling. This capacity guarantees support for future high-bandwidth applications that would otherwise require cabling upgrades to operate with full functionality. By contrast, copper cabling built to existing standards will most likely need replacement within five years to support these new applications.

The Tolly Group (Manasquan, NJ) recently projected the costs of installing a networking infrastructure in two buildings using two entirely different approaches: a distributed architecture with copper horizontals and a centralized architecture with fiber horizontals. A key finding is that the centralized approach requires only two telecommunications rooms in the 60,000-sq-ft building, compared with five for the distributed cabling infrastructure (see Figure 3).

The study concludes that an unshielded twisted-pair copper infrastructure, based on a traditional distributed model, incurs an average per-user cost of $962.76 for Category 5E cabling and $972.85 for Category 6 cabling. These costs include horizontal hardware, telecom rooms, risers, and the main equipment room. By contrast, a fiber-optic infrastructure, based on a centralized model with two telecom rooms and a main equipment room, costs only $806.80 per user, which translates to an aggregate savings of $40,000 in hardware costs alone.
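The per-user figures above make the savings easy to check. The sketch below uses exact decimal arithmetic for the dollar amounts. The article does not state the user count behind the $40,000 total or which copper option it is measured against, so `implied_user_count` is only a back-calculation under that assumption, not a figure from the study.

```python
from decimal import Decimal

# Per-user costs reported in the study
COPPER_CAT5E = Decimal("962.76")
COPPER_CAT6 = Decimal("972.85")
FIBER_CENTRALIZED = Decimal("806.80")


def per_user_savings(copper_cost, fiber_cost=FIBER_CENTRALIZED):
    """Dollars saved per user by the centralized fiber design."""
    return copper_cost - fiber_cost


def implied_user_count(total_savings, copper_cost, fiber_cost=FIBER_CENTRALIZED):
    """Users needed for the per-user saving to add up to total_savings.

    A back-calculation only: the study's actual user count is not given.
    """
    return round(Decimal(total_savings) / per_user_savings(copper_cost, fiber_cost))


if __name__ == "__main__":
    print(per_user_savings(COPPER_CAT5E))               # 155.96
    print(per_user_savings(COPPER_CAT6))                # 166.05
    print(implied_user_count(40000, COPPER_CAT5E))      # 256
    print(implied_user_count(40000, COPPER_CAT6))       # 241
```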

Switch farms also reduce electronics costs by concentrating all of a network's unused ports in a single location. A distributed copper network typically has unused switch ports in every intermediate closet, with an overall unused port count as high as 30-40%. A centralized fiber network, on the other hand, can reduce the unused port count to as little as 5-10%.
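To see what these percentages mean in hardware, one can compute how many ports must be installed so that a given share sits idle. The 240-user network below is hypothetical, and the sketch uses integer ceiling division so that the idle share never exceeds the stated percentage.

```python
def ports_to_install(active_users: int, unused_percent: int) -> int:
    """Ports installed when unused_percent of them are left idle.

    Solves active_users = ports * (100 - unused_percent) / 100 for ports,
    rounding up to a whole port.
    """
    if not 0 <= unused_percent < 100:
        raise ValueError("unused_percent must be in [0, 100)")
    used_percent = 100 - unused_percent
    # Integer ceiling division avoids floating-point rounding surprises
    return (active_users * 100 + used_percent - 1) // used_percent


if __name__ == "__main__":
    users = 240  # hypothetical network size
    for pct in (40, 35, 10, 5):
        ports = ports_to_install(users, pct)
        print(pct, ports, ports - users)
    # 40 400 160
    # 35 370 130
    # 10 267 27
    # 5 253 13
```

Under these assumptions, moving from a 35% to a 5% idle rate eliminates more than 100 switch ports that would otherwise be purchased and never used.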

By concentrating network ports in a single location, switch farms also reduce the time required to make configuration changes, including adds, moves, and deletes. Giving workstations direct access to the central closet also makes it easier to match users to services across switch backplanes, making it possible to distribute bandwidth precisely where it is needed.

The perception that fiber is difficult to work with is fading away. New industry-standard VF-45 connectors have the look and feel of conventional RJ-45 connectors and can be terminated in the same or less time.

Two key factors driving the movement toward the VF-45 interface are low cost and high density. Additionally, a complete line of networking products is available to support the interface, including cabling, outlet products, reference cables, patch panels, patch cords, sockets, tools, NICs, hubs, workgroup and core switches, and media converters.

Of course, fiber is well known for its immunity to electromagnetic interference, which means it can be run alongside power cables. As more and more cabling is installed, physical size becomes ever more important: six workstations can be connected with a fiber cable that has the same diameter as a single Category 5E cable.
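The six-to-one figure translates directly into cable counts for a pathway. This sketch assumes one Category 5E cable per workstation and, as the text states, equal cable diameters, so the ratio of cable counts approximates the ratio of pathway fill; it ignores packing effects and spare capacity.

```python
def cables_needed(workstations: int, stations_per_cable: int) -> int:
    """Number of cables required, rounding up to a whole cable."""
    return -(-workstations // stations_per_cable)


def pathway_comparison(workstations: int, stations_per_fiber_cable: int = 6):
    """Return (copper_cables, fiber_cables) for the same set of workstations.

    Assumes one Category 5E cable per workstation and a fiber cable of the
    same outside diameter serving stations_per_fiber_cable workstations.
    """
    copper = cables_needed(workstations, 1)
    fiber = cables_needed(workstations, stations_per_fiber_cable)
    return copper, fiber


if __name__ == "__main__":
    print(pathway_comparison(48))  # (48, 8)
    print(pathway_comparison(50))  # (50, 9)
```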

Buildings that have restricted pathways for network cabling will find installation and expansion using fiber cabling easier and less expensive. In addition, the advent of the small-form-factor fiber connectors and transceivers means fiber switches can now have the same port density as copper switches.

All in all, the switch-farm architecture can dramatically improve networking performance while at the same time reducing costs. The basic concept is to take the load off the backbone, which is the bottleneck in nearly all medium and large networking installations, by connecting servers directly to their users' switches.

The essential difference is that the backbone resides inside the switches and between switches in the same room. The bottom line is a dramatic improvement in the most important measure of performance: the bandwidth actually available at the user's desktop.

Daniel Harman is an applications engineer at 3M (Austin, TX).