Meeting demand for 10 Gbits/sec in the corporate data center

Corporate data centers today support multiple 100-Mbit/sec desktop connections by aggregating their traffic over gigabit links. As gigabit connectivity to the desktop becomes more pervasive, 10-Gbit/sec optical technologies will migrate from the enterprise backbone to server, storage, and workgroup switch applications. These short-reach data-center applications will require small-form-factor optical-transceiver modules that consume less power and cost less than today's backbone modules.

The first 10-Gbit/sec enterprise switching technologies appeared in 2001. These one- or two-port line cards are designed for switch-to-switch interconnects in large, bladed enterprise switches. Making hundreds of 100-Mbit/sec and dozens of 1-Gbit/sec connections, these switches serve desktops, workgroup switches, and servers. Aggregated interswitch bandwidth requirements easily top 10 Gbits/sec, and the interswitch links often connect multiple campuses, driving the need for link distances between 2 m and 40 km today and likely evolving to 80 km in the future. The optical technology required for these extended reaches today uses cooled lasers and dissipates more than 7 W.
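The aggregation arithmetic behind that claim can be sketched as follows. The port counts are hypothetical, chosen only to stand in for "hundreds" and "dozens," and the function names are illustrative.

```python
# Hypothetical port counts; the article says only "hundreds" and "dozens".
FAST_ETHERNET_PORTS = 200   # assumed number of 100-Mbit/sec connections
GIGABIT_PORTS = 24          # assumed number of 1-Gbit/sec connections


def aggregate_demand_mbps(fast_ports: int, gig_ports: int) -> int:
    """Worst-case offered load in Mbit/sec if every port runs at line rate."""
    return fast_ports * 100 + gig_ports * 1000


def uplinks_needed(demand_mbps: int, uplink_mbps: int = 10_000) -> int:
    """Uplinks required to carry the demand with no oversubscription."""
    return -(-demand_mbps // uplink_mbps)  # ceiling division on integers


demand = aggregate_demand_mbps(FAST_ETHERNET_PORTS, GIGABIT_PORTS)
print(f"Offered load: {demand / 1000:.0f} Gbits/sec")
print(f"10-Gbit/sec uplinks needed: {uplinks_needed(demand)}")
```

Real switches are usually oversubscribed, so the uplink count here is an upper bound rather than a design rule.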

With capability to support extended reaches, XENPAK optical-transceiver modules are the standard for these 10-Gbit/sec backbone applications. The XENPAK multisource agreement (MSA) specifies a hot-swappable module architecture that can handle power dissipation up to 11 W in typical line-card air-cooling environments. MSAs define the mechanical dimensions, mounting, electrical interfaces, maximum power dissipation and power supplies for optical modules. These agreements allow interoperability between vendors in a system design, reducing risk for the designer and speeding time-to-market for new solutions.

Data centers connected at gigabit speeds support 100-Mbit/sec connections to the desktop, but several trends indicate that gigabit connectivity to the desktop may start to become more pervasive.

Gigabit network interface cards (NICs) have approached the price of 10/100-Mbit/sec NICs, providing gigabit speeds at no additional system cost. As a result, gigabit capability is being built into desktop computers as a standard feature. While many corporate LANs are not set up to supply gigabit access to those desktop adapters, historically the lag time has been short. There is typically only a one-to-two-year delay between the time desktop adapters reach new speeds and the time a switch and networking infrastructure is upgraded to run at the higher speed.

Recent third-party tests published by 8wire.com [1] indicate that gigabit at the desktop can significantly boost application performance, even in standard business applications. In testing a gigabit-over-copper solution, Competitive Systems Analysis measured performance gains of as much as 47% over a traditional 10/100-Mbit/sec deployment [2].

As gigabit to the desktop rolls out, bandwidth demands will increase to 10 Gbits/sec in servers and storage arrays in the corporate data center. Server NICs, storage host bus adapters, Fibre Channel switches, redundant arrays of independent disks (RAID), and array external connections are all data-center applications moving into the 10-Gbit/sec realm.

As bandwidth demands increase in server farms and storage arrays, server bus architectures will migrate from PCI-X to PCI Express. This high-speed bus architecture will enable data transfers at 2.5 Gbits/sec per lane. Four-lane solutions in 2003 will have 5-Gbit/sec full-duplex capability, and eight-lane solutions in 2004 will support 10 Gbits/sec. In this same time frame, double-data-rate PCI-X will support full-duplex bandwidth of 8 Gbits/sec. And 10-Gbit/sec Ethernet NICs will connect servers with these high-speed bus architectures to LAN switches, ensuring data can get from servers to the desktop without running into bottlenecks.
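A minimal sketch of the bottleneck check implied here, using only the full-duplex bandwidth figures quoted in the paragraph above. The dictionary keys and function names are illustrative assumptions.

```python
# Full-duplex bus bandwidths in Gbits/sec, as quoted in the article.
BUS_GBPS = {
    "PCI Express x4 (2003)": 5.0,
    "PCI Express x8 (2004)": 10.0,
    "PCI-X double data rate": 8.0,
}
NIC_GBPS = 10.0  # 10-Gbit/sec Ethernet NIC line rate


def sustained_gbps(bus_gbps: float, nic_gbps: float = NIC_GBPS) -> float:
    """Throughput is capped by the slower of the bus and the NIC."""
    return min(bus_gbps, nic_gbps)


def bus_is_bottleneck(bus_gbps: float, nic_gbps: float = NIC_GBPS) -> bool:
    """True when the host bus cannot feed the NIC at line rate."""
    return bus_gbps < nic_gbps


for name, gbps in BUS_GBPS.items():
    status = "bus-limited" if bus_is_bottleneck(gbps) else "line rate"
    print(f"{name}: {sustained_gbps(gbps):.1f} Gbits/sec ({status})")
```

By these figures, only the eight-lane bus keeps pace with a 10-Gbit/sec NIC; protocol overhead, which the sketch ignores, would lower every number.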

On the other side of the server, SANs will provide increased flexibility, reliability, and manageability for data storage in the enterprise data center. The 10-Gbit/sec Fibre Channel (FC) host bus adapters will connect PCI Express-equipped servers to RAID arrays through 10-Gbit/sec FC switches.

All these data-center applications will require new types of optical-transceiver modules with similar features:

- Small size. These modules must fit on PCI cards and in high-density Ethernet and FC stackable switches.

- Low power dissipation. Power dissipation must be low enough to allow cooling in dense, low airflow applications such as servers.

- Low cost. The price per gigabit of data transfer must approach parity with 1- and 2-Gbit/sec solutions to allow 10 Gbits/sec to move into high-volume applications.

- Short reach. All the applications discussed can be served by links that are 10 km or less. According to a survey prepared for the IEEE 802.3 HSSG Fiber Optic Cabling Ad Hoc [3] by CDT, 78% of horizontal cabling runs in enterprise networks are shorter than 65 m.

At 10 Gbits/sec, multimode optical solutions will reach only 65 m over the 50-µm core fiber that is most common for new installations in the data center. Generally, singlemode fiber is used for building-to-building runs, while multimode fiber is ubiquitous inside buildings. That raises the question: Are multimode solutions desirable? The answer is yes, for the following reasons:

- A distance of 65 m is sufficient to cover the 78% of horizontal cabling runs cited above.

- Multimode optics is inherently less expensive due to the simpler plastic and epoxy-based alignment technology feasible with larger fiber geometries.

- Vertical-cavity surface-emitting lasers (VCSELs) at 850 nm produce multimode solutions with total power dissipation that is almost three-fourths of a watt lower than that required for singlemode solutions using uncooled distributed-feedback lasers.

In longer links through in-building risers, singlemode fiber will become more important at 10-Gbit/sec data rates than it is today at 1 and 2 Gbits/sec. New high-bandwidth multimode fiber is also available, which can extend 10-Gbit/sec multimode links to 300 m in new installations.
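The reach figures above suggest a simple selection rule, sketched below. The thresholds (65 m, 300 m, 10 km) come from the article; the function name, its parameters, and the returned labels are assumptions made for illustration, and a real design would also weigh installed fiber grade and link budget.

```python
MMF_REACH_M = 65              # 10 Gbits/sec over common 50-um multimode fiber
MMF_HIGH_BW_REACH_M = 300     # new high-bandwidth multimode fiber
SHORT_REACH_LIMIT_M = 10_000  # upper bound for the short-reach applications


def select_media(link_m: float, high_bandwidth_mmf: bool = False) -> str:
    """Pick the lowest-cost optical option that covers the link length."""
    if link_m <= 0:
        raise ValueError("link length must be positive")
    mmf_reach = MMF_HIGH_BW_REACH_M if high_bandwidth_mmf else MMF_REACH_M
    if link_m <= mmf_reach:
        return "multimode, 850-nm VCSEL"
    if link_m <= SHORT_REACH_LIMIT_M:
        return "singlemode, uncooled DFB laser"
    return "extended reach, outside short-reach module scope"


for length in (30, 150, 2_000, 40_000):
    print(f"{length} m: {select_media(length)}")
print(f"150 m, new fiber: {select_media(150, high_bandwidth_mmf=True)}")
```

Multimode is tried first because the article identifies it as the cheaper, lower-power option wherever its reach is sufficient.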

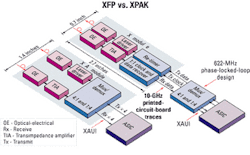

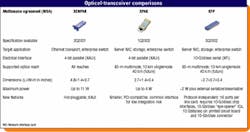

Three MSAs have been developed for 10-Gbit/sec optical modules: XENPAK, XPAK/X2, and XFP. The Table compares features and capabilities for each of the three MSAs.

XENPAK. Interswitch links that aggregate 10 Gbits/sec of traffic often have to span multiple campuses. XENPAK modules are the largest and can handle the highest power dissipation, making these devices the best choice for large, bladed enterprise switches with requirements for link distances of 40 km and higher. XENPAK was the first 10-Gbit/sec module MSA designed to allow hot-swap capability and make use of the four-lane XAUI interface specified in the IEEE 802.3ae standard for 10-Gbit/sec Ethernet links.

Each lane operates at 3.125 Gbits/sec, which simplifies signal routing on PCI cards and line cards, and eases system-level electromagnetic interference (EMI) concerns. XAUI is also the most common electrical interface on Ethernet media access controllers, FC controllers, and other Layer 2 ASICs.
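The lane arithmetic can be checked with a short sketch. The article gives only the 3.125-Gbit/sec lane rate; the 8b/10b coding on XAUI and the 10.3125-Gbaud, 64b/66b-coded serial rate used for comparison are taken from IEEE 802.3ae rather than from the text, and the function name is illustrative.

```python
def payload_gbps(lanes: int, line_rate_gbaud: float,
                 data_bits: int, coded_bits: int) -> float:
    """Usable data rate after removing line-coding overhead."""
    return lanes * line_rate_gbaud * data_bits / coded_bits


# XAUI: four lanes at 3.125 Gbaud, 8b/10b coded (assumption from 802.3ae).
xaui = payload_gbps(lanes=4, line_rate_gbaud=3.125, data_bits=8, coded_bits=10)

# Serial 10-Gbit/sec Ethernet: one lane at 10.3125 Gbaud, 64b/66b coded
# (assumption from 802.3ae; Fibre Channel uses a different line rate).
serial = payload_gbps(lanes=1, line_rate_gbaud=10.3125, data_bits=64, coded_bits=66)

print(f"XAUI payload:   {xaui} Gbits/sec")
print(f"Serial payload: {serial} Gbits/sec")
```

Both interfaces carry the same 10-Gbit/sec payload; XAUI spreads it across four slower lanes, which is why it is easier to route on a PC board than a single serial lane running more than three times as fast.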

The XENPAK electrical interface has 70 pins, including the XAUI high-speed interface, power supplies, and the management data input/output (MDIO) 802.3 digital interface. Choosing a module with an XAUI interface that matches the IC interface eases system design.

XPAK and X2. The XPAK MSA is the next step in MSA evolution. It borrows the 70-pin electrical interface definition from XENPAK, including the XAUI interface and hot-swap capability. That provides the system designer with the same simple robust interface to Layer 2 ICs, resulting in faster and lower-risk design. The mechanical dimensions of XPAK allow mounting on a fully compliant "short" PCI card, with eight XPAK modules on each side of a line card (16 modules on one board in a stackable switch). The X2 is another 10-Gbit/sec optical module developed shortly after XPAK, which offers similar features.

XFP. XFP is the smallest 10-Gbit/sec optical module, allowing two ports per PCI card (16 ports per side of a line card). The XFP MSA features a completely new 30-pin electrical interface that transmits high-speed signals via XFI, a newly defined signaling method operating at 10 Gbits/sec. XFP modules are more difficult for system designers to work with, because routing 10-Gbit/sec signals in FR4 PC boards while maintaining signal integrity and acceptable signal leakage, or EMI, is challenging. Use of a transmit retimer, 1:1 receive clock, and clock and data recovery (CDR) circuit in the XFP can help overcome some of the signal integrity issues.

To use XFP modules with XAUI-based ASICs, system designers need to include a serializer/deserializer (SerDes) IC in the design to multiplex the four-lane XAUI IC interface with the serial module interface. The SerDes IC is included inside the XPAK/X2 module. An XFP module plus SerDes will actually consume more power than XPAK/X2 and has a higher material cost due to the CDR and retimer IC.

In reality, the price of an XFP module plus a SerDes IC is close to the price of an XPAK/X2 module. As IC processes improve, Layer 2 ASICs will be developed that combine large-scale digital integration with high-speed capability, providing serial 10-Gbit/sec interfaces on the ASIC. XFP modules will interface directly with these ASICs, eliminating the cost and power of the SerDes IC (see Figure).

Another challenge facing designers of optical modules with hot-swap capability is the need to seal EMI around the front faceplate of the module. XENPAK modules use a compliant gasket between the module faceplate and system bezel to provide this seal. The gasket must be effective over a wide compression range due to the large dimensional tolerance stackup from the 70-pin electrical connector at the back of the module through the system PC board and bezel to the module faceplate. Because the gasket must not lose effectiveness after hundreds of mate and de-mate cycles, XENPAK uses a large faceplate that overlaps with the system bezel and supports use of a large EMI gasket. The X2 module uses a similar solution.

Compliance with the PCI mechanical specification severely limits the allowable size of the module faceplate for XPAK and XFP modules. The limited faceplate size eliminates the possibility of a large overlap between the module faceplate and system bezel or PCI bracket. An alternative way to seal EMI and absorb the large tolerance buildup is needed.

Both XPAK and XFP solve this problem using a two-stage EMI seal. XPAK and XFP slide into a mounting guide (or cage) attached to the system PC board. This guide seals to the back of the system bezel or PCI bracket with a compliant EMI gasket, and the seal does not suffer repeated mate and de-mate cycles when modules are hot-swapped. The module itself then seals to the front section of the cage with a small overlap due to the tight dimensional tolerances between the module and guide or cage. This two-stage solution enables a robust EMI seal in a very small area.

Today, 10-Gbit/sec XENPAK modules offer support for extended-reach optical links for enterprise applications. As short-reach data-center applications migrate to 10 Gbits/sec, the smaller XPAK/X2 modules fit into systems with XAUI interface ASICs, while XFP modules offer higher port densities. There is a tradeoff in design complexity when XFP is paired with XAUI Layer 2 ASICs. When available, ASICs with serial 10-Gbit/sec ports will eliminate the SerDes and make XFP the low-power, low-cost solution for the future.

Robert Zona is senior product marketing manager at Intel's Optical Platform Division, Optical Products Group (Santa Clara, CA).

1. 8Wire.com, "Is it Time for Gigabit to the Desktop?," 9/25/2001.

2. "Gigabit to the Desktop," Competitive Systems Analysis Inc., October 2001. (Commonly used mainstream applications such as client/server database tasks and messaging workloads were tested while running Microsoft SQL Server and Exchange Server.)

3. "Installed Optical Fiber Cabling Distances," CDT Corp.