Network latency – how low can you go?

By STEN NORDELL

Low latency has always been an important consideration in telecom networks for voice, video, and data, but recent changes in applications within many sectors have brought low latency to the forefront of those industries. The finance industry, with its algorithmic trading, is a commonly quoted example of a key market for which low latency is critical.

Yet many other industries and data-handling activities such as cloud computing and video services are also now driving ever-lower latency in today’s networks. Meanwhile, as mobile operators start to roll out wireless Long Term Evolution (LTE) services, latency in the backhaul network will become increasingly important to achieve the quality required for applications like real-time gaming and streaming video.

In voice networks, latency must be low enough so the delay in speech does not cause problems with conversation. Here, the latency, which typically needs to be 50 msec or lower, is generated by the voice switches, multiplexers and transmission systems, and the copper and fiber infrastructure. Transmission systems add only a small proportion of the overall latency, and historically latency has not been a large consideration as long as it was low enough.

In data networks, low latency has been seen as an advantage but until recently was not a top priority in most cases – again, as long as the latency was not excessive enough to cause problems. A good example is Fibre Channel for SAN/storage applications, where the throughput drops rapidly once the total latency reaches the point at which handshaking between the two switches is no longer quick enough, a phenomenon known as “droop.”

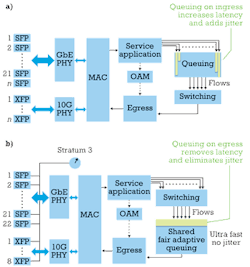

A further consideration with the move to LTE and low latency is that the telecom industry is now considering a Layer 2-based infrastructure due to the all-IP nature of LTE, whereas the earlier examples were predominately Layer 1 in the transport domain. That adds further Layer 2 processing into the backhaul network, either in two separate layers (Carrier Ethernet switches over a WDM layer) or via an integrated Layer 1 and 2 platform, with an associated increase in latency that must be managed and minimized. Integrated Layer 1 and 2 platforms, of course, are not limited to mobile backhaul.

Sources of latency

Latency in fiber-optic networks comes from three main components: (1) the fiber itself, (2) optical components, and (3) opto-electrical components.

Starting with the fiber itself, light in a vacuum travels at 3×10⁸ m/sec, which equates to an unavoidable latency of 3.33 µsec/km of path length. Light travels more slowly in fiber because of the fiber’s refractive index, and this speed reduction increases the “ideal performance” latency to about 5 µsec/km. That would be the practical lower limit of latency if it were possible to remove all other sources of latency.
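As a rough check on these figures, the per-kilometer delay can be computed directly from the speed of light and the refractive index. The sketch below is illustrative only: the group index of 1.47 is an assumed typical value for silica single-mode fiber rather than a number from this article, and the function name is hypothetical.

```python
# Speed of light in a vacuum in m/s (exact SI value; the article rounds to 3e8).
C_VACUUM_M_PER_S = 299_792_458


def latency_us_per_km(refractive_index: float = 1.0) -> float:
    """Return one-way propagation delay in microseconds per kilometer."""
    speed_m_per_s = C_VACUUM_M_PER_S / refractive_index
    seconds_per_km = 1_000.0 / speed_m_per_s
    return seconds_per_km * 1e6


vacuum_us = latency_us_per_km()      # vacuum: n = 1
fiber_us = latency_us_per_km(1.47)   # ASSUMPTION: typical group index of silica fiber

print(f"vacuum: {vacuum_us:.2f} us/km")
print(f"fiber:  {fiber_us:.2f} us/km")
```

With the assumed index, the fiber figure comes out slightly under the rounded 5 µsec/km used in the rest of this article.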

However, fiber is not always routed along the most direct path between two locations, and the cost of rerouting fiber can be very high. Some operators have built new low-latency fiber routes between key financial centers and employed low-latency systems to run over these links. That’s expensive and likely to be feasible only on the main algorithmic-trading routes where the willingness to pay is high enough to support the business case.

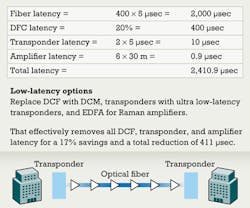

Optical components are the next latency source. The majority of the latency introduced by optical transmission systems comes from dispersion compensating fiber (DCF), which is only used in long-distance networks. A typical long-distance network will require a length of DCF equal to approximately 20–25% of the overall fiber length; therefore, this DCF adds 20–25% to the latency of the fiber. DCF could add as much as a few milliseconds of latency to long-haul links.
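To put the DCF percentages into absolute terms, the short sketch below applies them to an example route. The 2,000-km route length and the function name are hypothetical; the 5 µsec/km and 20–25% figures are the ones quoted in this article.

```python
FIBER_LATENCY_US_PER_KM = 5.0  # approximate fiber latency quoted in the article


def dcf_latency_ms(route_km: float, dcf_fraction: float) -> float:
    """Extra one-way latency in milliseconds added by the DCF spools."""
    dcf_km = route_km * dcf_fraction
    return dcf_km * FIBER_LATENCY_US_PER_KM / 1_000.0


route_km = 2_000  # ASSUMPTION: hypothetical long-haul route length
low_ms = dcf_latency_ms(route_km, 0.20)
high_ms = dcf_latency_ms(route_km, 0.25)

print(f"DCF adds roughly {low_ms:.1f} to {high_ms:.1f} ms on a {route_km} km route")
```

On this assumed route, the DCF alone accounts for about 2 to 2.5 msec on top of the 10 msec of fiber delay.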

Recently, innovations in fiber Bragg grating (FBG) technology have enabled the development of the dispersion compensation module (DCM). A DCM also compensates for the dispersion over a longer-reach network but does not use a long spool of fiber and thus effectively removes all the additional latency that DCF-based networks impose.

The other key optical component to consider is the optical amplifier. EDFAs enable WDM systems to work, since they amplify the complete optical spectrum and remove the need to amplify each individual channel separately. They also remove the requirement for optical-electrical-optical (OEO) conversion, which is highly beneficial from a low-latency perspective.

However, such amplifiers contain a few tens of meters of erbium-doped optical fiber that must be considered if an operator is looking to drive every possible source of latency out of a system. On a per-amplifier basis, this latency is very small. But a long-haul system will have many amplifiers, which together create additional latency. One approach to address this additional latency is to use Raman amplifiers instead. Raman amplifiers rely on a different optical effect, stimulated Raman scattering in the transmission fiber itself, so they amplify the signal without adding a spool of doped fiber to the path.

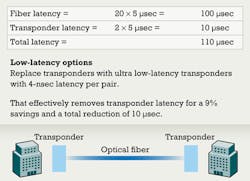

Finally, there are the effects from opto-electrical components. The latency of both transponders and muxponders varies depending on design, formatting type, etc. Muxponders typically operate in the 5–10-µsec range per unit. Forward error correction (FEC), if used for long-distance systems, will increase the latency due to the extra processing.

Transponders also can vary greatly in latency depending on design and functionality. The more complex transponders include functionality such as in-band management channels. This complexity forces the unit design and latency to be very similar to a muxponder, in the 5–10-µsec region. Again, if FEC is used then the associated latency can be even higher.

Some vendors also have options for simpler, and often lower-cost, transponders that do not have FEC or in-band management channels. These modules can operate at much lower latencies – as low as a few nanoseconds, which is equivalent to adding only 1 or 2 m of fiber to a route. The result can be as much as 1,000X better latency performance than more complex transponders.
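The fiber-length comparison can be reproduced with simple arithmetic. The sketch below is an illustration only: it assumes the article's rounded 5 µsec/km (5 nsec/m) fiber figure, picks 5 µsec and 5 nsec as representative values from the ranges above, and uses a hypothetical function name.

```python
FIBER_LATENCY_NS_PER_M = 5.0  # 5 usec/km from the article, expressed per meter


def equivalent_fiber_m(latency_ns: float) -> float:
    """Length of fiber (in meters) that would add the same delay."""
    return latency_ns / FIBER_LATENCY_NS_PER_M


complex_ns = 5_000.0  # ASSUMPTION: 5 usec, low end of the 5-10 usec range
simple_ns = 5.0       # ASSUMPTION: "a few nanoseconds" taken as 5 nsec

complex_m = equivalent_fiber_m(complex_ns)
simple_m = equivalent_fiber_m(simple_ns)
improvement = complex_ns / simple_ns

print(f"complex transponder ~ {complex_m:.0f} m of fiber")
print(f"simple transponder  ~ {simple_m:.0f} m of fiber")
print(f"improvement factor  ~ {improvement:.0f}X")
```

Under these assumptions the complex unit behaves like roughly a kilometer of extra fiber and the simple unit like about a meter, which is where the 1,000X figure comes from.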

Integrated Layer 1, 2 technologies

Some networks are now using integrated Layer 1 and 2 technologies, which are often referred to as packet-optical transport systems. A low-latency Carrier Ethernet switch will have latency on the order of 5 µsec or more, in addition to any necessary transport-system latency. Recent innovations in this space are optimized for aggregation and transport of Ethernet traffic. These platforms can use the most recent switch architectures such as the Output Buffer Queued architecture (see Figure 1). Use of such advanced switch architectures, in combined packet-optical solutions, brings the latency of these units significantly lower than traditional designs – at <2 µsec, without any additional transport-system latency.

That might not seem significant when compared to the 1,000X improvement claimed for the Layer 1 transponders, but there is an important network architecture angle to consider here, too. In Layer 1, architectures are typically point-to-point wavelengths with a transponder/muxponder at each end and additional optical components in between. In Layer 2 architectures, there is typically a network of Layer 2-capable devices through which the traffic moves from one to the next until it reaches its destination. For a mobile backhaul network, that could be four or five devices into the core and the same for the return route, or the number could be much higher depending on the network architecture.
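Because per-device latency is paid at every Layer 2 hop, the difference accumulates along the path. The following sketch is a simplified illustration: it takes five devices each way from the backhaul example above, treats 5 µsec and 2 µsec as the per-device figures (the article gives these as "5 µsec or more" and "<2 µsec"), and ignores queuing under load. The function name is hypothetical.

```python
FIBER_LATENCY_US_PER_KM = 5.0  # approximate fiber latency quoted in the article


def switching_latency_us(devices_each_way: int, per_device_us: float,
                         round_trip: bool = True) -> float:
    """Total Layer 2 device latency along a path, in microseconds."""
    hops = devices_each_way * (2 if round_trip else 1)
    return hops * per_device_us


traditional_us = switching_latency_us(5, 5.0)  # Carrier Ethernet switch per hop
integrated_us = switching_latency_us(5, 2.0)   # integrated packet-optical unit per hop

saving_us = traditional_us - integrated_us
saving_km = saving_us / FIBER_LATENCY_US_PER_KM

print(f"traditional: {traditional_us:.0f} us, integrated: {integrated_us:.0f} us")
print(f"saving of {saving_us:.0f} us is like removing {saving_km:.0f} km of fiber")
```

The saving grows linearly with the number of devices in the path, so deeper Layer 2 networks benefit more.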

Operator options

So with a toolkit of low-latency options, what should an operator do when looking to provide a low-latency service? It’s clear that the fiber route has by far the biggest impact on latency, and if the operator has two routing options, then the shorter is better. The next most significant impact an operator can make for long-distance networks is to use DCM-based dispersion compensation rather than DCF; this change could reduce the latency by up to 20%.

To drive latency lower in short-haul and long-haul networks, the operator also should use an optical transport platform that offers ultra-low-latency transponders. They can reduce the latency associated with OEO conversion from microseconds to nanoseconds. The use of such platforms has an effect similar to shaving off 1 or 2 km from the route distance.

Finally, operators that really want to go as low as possible can also remove the small amount of remaining latency within the optical amplifiers by swapping EDFAs for Raman amplifiers. Figures 2 and 3 show these latency-reducing strategies in action.
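The relative weight of these options can be seen in a simple latency budget. The sketch below is a rough model under stated assumptions, not a planning tool: the 1,000-km route, the 12 amplifiers, and the 30 m of doped fiber per EDFA are hypothetical values, Raman amplification is modeled as adding no extra fiber, and the class and field names are invented for illustration. The per-kilometer, DCF, and transponder figures come from earlier in this article.

```python
from dataclasses import dataclass

FIBER_US_PER_KM = 5.0  # approximate fiber latency quoted in the article


@dataclass
class RouteDesign:
    route_km: float
    dcf_fraction: float           # DCF length as a fraction of route; 0.0 for DCM
    transponder_us: float         # latency per transponder; two per wavelength
    amplifier_count: int
    doped_fiber_m_per_amp: float  # 0.0 models Raman (simplifying assumption)

    def budget_us(self) -> dict:
        """Break the one-way latency into its components, in microseconds."""
        fiber = self.route_km * FIBER_US_PER_KM
        dcf = fiber * self.dcf_fraction
        transponders = 2 * self.transponder_us
        amp_km = self.amplifier_count * self.doped_fiber_m_per_amp / 1_000.0
        amplifiers = amp_km * FIBER_US_PER_KM
        total = fiber + dcf + transponders + amplifiers
        return {"fiber": fiber, "dcf": dcf, "transponders": transponders,
                "amplifiers": amplifiers, "total": total}


# ASSUMPTION: hypothetical 1,000 km route with 12 in-line amplifiers.
baseline = RouteDesign(1_000, 0.25, 10.0, 12, 30.0).budget_us()
optimized = RouteDesign(1_000, 0.0, 0.005, 12, 0.0).budget_us()
reduction = 1 - optimized["total"] / baseline["total"]

for name, budget in (("baseline", baseline), ("optimized", optimized)):
    print(name, {k: round(v, 3) for k, v in budget.items()})
print(f"overall reduction: {reduction:.1%}")
```

In this model nearly all of the saving comes from replacing DCF, with the transponders and amplifiers contributing microseconds, which matches the ordering of priorities described above.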

The lowest of lows

Low latency is a real concern in many network scenarios. Some, such as telecom services to the financial services industry, can command a substantial pricing premium if the service can provide the end customer with a low-latency advantage. Other industry sectors, such as data-center interconnect, will require more of a focus on low latency as a basic feature to ensure the facility owner is strongly positioned to serve customers with low-latency demands.

Until we can change the physics of light in fiber, there are limits to how low latency can go. But there is a lot a network operator can do to ensure that the latency on any route is as low as physically possible with integrated Layer 1 and Layer 2 transport strategies.

STEN NORDELL is chief technology officer at DWDM and packet-transport systems vendor Transmode.