Get the most from 10-Gbps to 40/100-Gbps network migration

by Olivier Jerphagnon

Overview

Experience suggests that the next wave of network migration will bring its share of challenges and surprises. New breakthroughs such as in-service monitoring offer a means to address them.

As network operators migrate to a converged infrastructure, they face the additional impacts of a deepening economic recession: tight capital markets, frugal customers, and the need to leverage all assets. Meanwhile, bandwidth requirements continue to ratchet upwards to fuel our changing work habits (videoconferencing, teleworking, etc.) and social activities (HDTV, movies on demand, social networks, etc.). The bottom line remains constant: Decrease cost per bit and prepare for new bandwidth-hungry services.

Market and economic pressures have spurred higher-speed service bundles in access markets, the unavoidable deployment of 40-Gbps pipes in the core, and frenzied 40/100-Gbps activity within standards bodies. How can we best prepare for the new migration challenges while acting conservatively and applying lessons learned in the past?

The network planner's conundrum

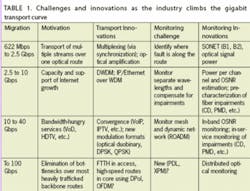

Network designers face the challenge of carrying a 40-Gbps signal on a wavelength engineered for 10 Gbps so they can leverage the network infrastructure already in place. They will likely solve this problem with technology that reduces the spectral width of the signal passing through a cascade of filters, and by mitigating existing network impairments with innovative modulation formats (for example, duobinary and DPSK). However, network impairments make such 40-Gbps rollouts particularly difficult because they are not well documented and their effects tend to vary. Residual chromatic dispersion (CD), high or fluctuating polarization mode dispersion (PMD), and greater sensitivity to optical signal-to-noise ratio (OSNR) are typical challenges facing 40-Gbps system designers and field technicians.
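The higher OSNR sensitivity at 40 Gbps follows, to first order, from bandwidth: if the modulation format and receiver design stay the same, the receiver must accept roughly four times the noise bandwidth, so the required OSNR rises by about 10·log10(40/10) ≈ 6 dB. The sketch below assumes that simple linear scaling; the function name `extra_osnr_db` is illustrative, and formats such as duobinary or DPSK shift the real numbers.

```python
import math


def extra_osnr_db(old_rate_gbps: float, new_rate_gbps: float) -> float:
    """First-order extra OSNR (in dB) needed when only the bit rate changes.

    Assumes the required OSNR scales linearly with the receiver noise
    bandwidth, i.e. with the bit rate, for an unchanged modulation format.
    """
    if old_rate_gbps <= 0 or new_rate_gbps <= 0:
        raise ValueError("bit rates must be positive")
    return 10 * math.log10(new_rate_gbps / old_rate_gbps)


if __name__ == "__main__":
    for new_rate in (40, 100):
        penalty = extra_osnr_db(10, new_rate)
        print(f"10 -> {new_rate} Gb/s, same format: ~{penalty:.1f} dB more OSNR")
```

The 100-Gbps line (about 10 dB) shows why simply running an old format faster is unattractive, which is part of the motivation for new 100-Gbps formats.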

New 100-Gbps formats such as dual-polarization QPSK (DP-QPSK) follow this trend as well, but they depart fundamentally from past designs by taking a clean-slate approach to circumventing known network impairments, using coherent detection and polarization-multiplexed transmission. The past tells us that every time a new type of network is deployed, and every time a new higher-speed signal is transmitted, surprises emerge and we must learn to deal with them (see Table 1).
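A little arithmetic shows what DP-QPSK buys. QPSK carries 2 bits per symbol and dual polarization doubles that, so the symbol rate is the bit rate divided by 4. The helper below is a hypothetical illustration; it ignores framing and FEC overhead, which push the real line rate (and symbol rate) somewhat higher.

```python
def symbol_rate_gbaud(bit_rate_gbps: float, bits_per_symbol: int, polarizations: int) -> float:
    """Symbol rate for a given payload bit rate, ignoring framing/FEC overhead."""
    if bits_per_symbol < 1 or polarizations not in (1, 2):
        raise ValueError("need >= 1 bit/symbol and 1 or 2 polarizations")
    return bit_rate_gbps / (bits_per_symbol * polarizations)


if __name__ == "__main__":
    formats = {
        "OOK, single polarization": (1, 1),
        "QPSK, single polarization": (2, 1),
        "DP-QPSK": (2, 2),
    }
    for name, (bits, pols) in formats.items():
        print(f"100 Gb/s {name}: {symbol_rate_gbaud(100, bits, pols):.0f} GBd")
```

At 25 GBd the symbol rate is a quarter of that of 100-Gbps on-off keying, which eases the demands on the electronics and narrows the optical spectrum; coherent detection then allows impairments such as CD and PMD to be compensated electronically.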

I remember a colleague being called in when the first 10-Gbps signals were tested on long-haul links. Haggard field technicians stared at the test equipment screen as it reported two optical pulses instead of one: was the second one real, or a “ghost” caused by an artifact of the measurement equipment?

It turned out that both pulses were very real: the fiber's PMD had split each pulse into two polarization components arriving at different times. Network operators had to learn quickly the importance of PMD, which had not needed to be controlled in fiber rollouts up to that point. The first wide-scale 100-Gbps experiments will almost certainly bring their share of surprises too, whether in the form of unknown impairments or of stronger effects from known impairments that were not critical in earlier generations of equipment, such as cross-phase modulation (XPM) and polarization-dependent loss (PDL). One underlying problem in optical networks bubbles up through all of this: How do you manage such networks if you cannot measure what is going on?
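The ghost-pulse episode also explains why PMD matters more at each rate step. Mean differential group delay (DGD) grows with the square root of link length, and a commonly cited rule of thumb keeps mean DGD below roughly 10% of the bit period. The sketch below applies that rule; the 10% fraction and the 0.5 ps/√km PMD coefficient are illustrative assumptions, not measured values for any particular fiber.

```python
def bit_period_ps(bit_rate_gbps: float) -> float:
    """Bit period in picoseconds (1 Gb/s -> 1000 ps)."""
    return 1000.0 / bit_rate_gbps


def pmd_limited_reach_km(bit_rate_gbps: float,
                         pmd_coeff_ps_per_sqrt_km: float,
                         dgd_fraction: float = 0.1) -> float:
    """Longest link whose mean DGD stays below dgd_fraction of a bit period.

    Mean DGD = PMD coefficient * sqrt(length), so
    length_max = (dgd_fraction * bit_period / PMD coefficient) ** 2.
    """
    max_mean_dgd_ps = dgd_fraction * bit_period_ps(bit_rate_gbps)
    return (max_mean_dgd_ps / pmd_coeff_ps_per_sqrt_km) ** 2


if __name__ == "__main__":
    pmd = 0.5  # ps/sqrt(km); assumed value for an older fiber plant
    for rate in (10, 40):
        print(f"{rate} Gb/s: PMD-limited reach ~{pmd_limited_reach_km(rate, pmd):.0f} km")
```

Because the reach scales with the square of the bit period, quadrupling the bit rate cuts the PMD-limited reach by a factor of 16 for the same fiber and format (400 km versus 25 km under these assumptions).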

A new way of monitoring

When networks migrated from PDH to SONET to carry multiple digital bit streams, and large volumes of telephone calls and data, over the same optical fiber, a number of monitoring bytes were included in the frame overhead to help identify where a fault had occurred on 2.5-Gbps “highways.” Once they could determine whether a fault had occurred on a given link or on a specific section along a route of add/drop multiplexers (ADMs), network operators learned to rely on this capability. It was part of the success of SONET.
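The fault-locating bytes work on parity. SONET's B1 byte, for example, carries a bit-interleaved parity (BIP-8) code: even parity over each of the 8 bit positions, which is the same as XOR-ing every byte together. Each section end recomputes the parity over what it received and compares it with the value sent from the far end, so errors are charged to the section where they occurred. The sketch below is simplified: real SONET computes B1 over the previous scrambled frame and carries it in the next one, and the function names and fault model here are hypothetical.

```python
from functools import reduce
from typing import Dict, List


def bip8(data: bytes) -> int:
    """BIP-8: even parity per bit position, i.e. the XOR of all bytes."""
    return reduce(lambda acc, byte: acc ^ byte, data, 0)


def section_violations(frame: bytes, faults: List[Dict[int, int]]) -> List[int]:
    """Count BIP-8 mismatches at the end of each section along a route.

    faults[i] maps a byte index to an XOR mask of bits flipped in section i
    (a hypothetical fault model). Each section start computes parity over the
    data it transmits; the section end recomputes it over what it received.
    """
    counts = []
    data = frame
    for section_faults in faults:
        sent_parity = bip8(data)
        received = bytearray(data)
        for index, mask in section_faults.items():
            received[index] ^= mask
        data = bytes(received)  # errors propagate, but the next section re-baselines parity
        counts.append(bin(sent_parity ^ bip8(data)).count("1"))
    return counts


if __name__ == "__main__":
    frame = bytes(range(90))
    # A single bit error in the second of three sections.
    print(section_violations(frame, [{}, {5: 0b0000_0001}, {}]))  # [0, 1, 0]
```

Two errors in the same bit position of different bytes cancel out, so BIP-8 yields an error estimate rather than an exact count; it is still enough to say which section is degrading.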

More recent innovations in optical networking such as DWDM have brought tremendous capacity at a reduced cost per bit to support the growth of Internet traffic. Today, reconfigurable optical ADMs (ROADMs) provide more flexibility to deal with unpredictable traffic and customer demands; channels can be added or dropped automatically without re-engineering the network. However, with the shift from packet over SONET to IP over WDM, network operators have lost the insight SONET gave them into their core networks, insight that supported quality of service and simplified maintenance. They cannot rely merely on bit-error rates in the electrical domain or on simple optical power measurements when an optical amplifier ages or a piece of fiber is “flaky.”

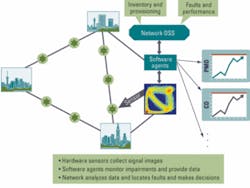

New monitoring techniques attempt to solve the problem by measuring optical impairments in situ, independent of the underlying optical architecture. They do so by analyzing improved eye diagrams, called “phase portraits,” with pattern recognition technology originally developed in non-telecom fields such as medical diagnostics. New impairments at existing or future transmission rates and formats can be measured without changing the network equipment, simply by implementing a new software agent that interprets the pictures and generates live reporting data (see Figure 1). The technique can track multiple impairments simultaneously, and it is all the more attractive because it offers some resilience: it can flag and deal with the unexpected.
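The article does not describe how the phase portraits are produced. One published technique that yields this kind of picture is asynchronous delay-tap sampling: the signal is sampled in pairs, x(t) and x(t + τ), at instants unrelated to the bit clock, and the pairs are accumulated in a 2D histogram whose shape changes with noise, dispersion, and other impairments. The sketch below assumes that technique and a synthetic NRZ signal; `nrz_value` and `phase_portrait` are hypothetical names, and the edge shape and noise model are simplifications.

```python
import math
import random
from typing import List, Sequence


def nrz_value(bits: Sequence[int], t: float, rise: float = 0.3) -> float:
    """Amplitude at time t (in bit periods, t >= 0) of a periodic NRZ pattern.

    Each bit starts with a raised-cosine edge lasting `rise` bit periods
    when the level changes from the previous bit.
    """
    k = int(t) % len(bits)
    frac = t - math.floor(t)
    prev, cur = bits[k - 1], bits[k]  # bits[-1] wraps around: periodic pattern
    if prev == cur or frac >= rise:
        return float(cur)
    w = 0.5 - 0.5 * math.cos(math.pi * frac / rise)  # rises 0 -> 1 across the edge
    return prev + (cur - prev) * w


def phase_portrait(bits: Sequence[int], tap_delay: float, n_pairs: int,
                   noise_sigma: float, grid: int = 16, seed: int = 1) -> List[List[int]]:
    """2D histogram of (x(t), x(t + tap_delay)) pairs taken at random times."""
    rng = random.Random(seed)
    hist = [[0] * grid for _ in range(grid)]
    for _ in range(n_pairs):
        t = rng.uniform(0, len(bits))  # asynchronous: no clock recovery needed
        x = nrz_value(bits, t) + rng.gauss(0, noise_sigma)
        y = nrz_value(bits, t + tap_delay) + rng.gauss(0, noise_sigma)
        # Map amplitudes in [-0.5, 1.5] onto grid cells, clamping outliers.
        i = min(grid - 1, max(0, int((x + 0.5) / 2.0 * grid)))
        j = min(grid - 1, max(0, int((y + 0.5) / 2.0 * grid)))
        hist[i][j] += 1
    return hist


def occupied_cells(hist: List[List[int]]) -> int:
    """Number of histogram cells that received at least one sample pair."""
    return sum(1 for row in hist for count in row if count)


if __name__ == "__main__":
    pattern = [0, 0, 0, 1, 0, 1, 1, 1]  # de Bruijn sequence: every 3-bit run appears
    clean = phase_portrait(pattern, tap_delay=1.0, n_pairs=5000, noise_sigma=0.0)
    noisy = phase_portrait(pattern, tap_delay=1.0, n_pairs=5000, noise_sigma=0.15)
    print("occupied cells, clean:", occupied_cells(clean))
    print("occupied cells, noisy:", occupied_cells(noisy))
```

A pattern-recognition agent would work on richer features of this histogram (cluster spread, asymmetry, how transitions smear between clusters); counting occupied cells is only the crudest such feature, shown here to make the effect of noise visible.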

Such an approach addresses the challenge of managing higher-speed networks that are more sensitive to optical impairments and involve more complicated structures. Imagine tens if not hundreds of active 10-Gbps channels in a ROADM-based network that is being upgraded to 40 Gbps or that has intermittent fiber problems. Current monitoring approaches would require multiple test boxes and would take traffic off the fiber for diagnostic measurements.

Network transport planners are now asking for in-service measurements. Their “beloved CFO,” dealing with a rapidly tightening budget, would certainly like them to use a single device that can do the entire job rather than buying multiple test boxes to measure optical impairments with obscure acronyms. And wait until he or she hears that a new impairment has emerged and more test equipment must be purchased when 100 Gbps is rolled out.

Efficiency and flexibility

Until now, test and measurement tools have relied on measurement techniques deeply rooted in the sensor hardware architecture, as in the case of an interferometer's dependence on frequency. The new techniques can interpret a single signal image with multiple software algorithms, leveraging the common hardware sensor. Since the sensor does not depend on the bit rate or modulation format, it does not need to be replaced when the network is upgraded; new software agents are simply added to help maintain and manage the new structure.
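The separation between one sensor and many interpreting algorithms maps naturally onto a plug-in registry: every agent receives the same portrait and returns its own estimate, and supporting a new impairment means registering one more function. The sketch below is a hypothetical software structure, not mdi's product; the two agents are toy features standing in for real impairment estimators, and the portrait is assumed to be a square 2D histogram.

```python
from typing import Callable, Dict, List

Portrait = List[List[int]]          # square 2D histogram produced by the common sensor
Agent = Callable[[Portrait], float]

AGENTS: Dict[str, Agent] = {}


def register_agent(name: str) -> Callable[[Agent], Agent]:
    """Decorator that adds an analysis agent without touching the sensor code."""
    def decorator(fn: Agent) -> Agent:
        AGENTS[name] = fn
        return fn
    return decorator


@register_agent("occupancy")
def occupancy(portrait: Portrait) -> float:
    """Fraction of histogram cells that received any samples (toy spread metric)."""
    cells = sum(len(row) for row in portrait)
    filled = sum(1 for row in portrait for count in row if count)
    return filled / cells


@register_agent("asymmetry")
def asymmetry(portrait: Portrait) -> float:
    """Normalized difference between the portrait and its transpose, in [0, 1]."""
    total = sum(map(sum, portrait))
    if total == 0:
        return 0.0
    n = len(portrait)
    diff = sum(abs(portrait[i][j] - portrait[j][i]) for i in range(n) for j in range(n))
    return diff / (2 * total)


def analyze(portrait: Portrait) -> Dict[str, float]:
    """Run every registered agent on the same sensor output."""
    return {name: agent(portrait) for name, agent in AGENTS.items()}


if __name__ == "__main__":
    print(analyze([[3, 1], [0, 4]]))
```

In this model, supporting a new format or impairment means registering new agents while the sensor and the `analyze` loop stay unchanged, mirroring the software-only upgrade path described in the text.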

This approach is very useful for deploying current 40-Gbps modulation formats on existing 10-Gbps infrastructure. Fiber links and wavelength channels can be tested on live network traffic before 40-Gbps cards are added. This monitoring strategy also minimizes the planning needed, accelerating the deployment of new 40-Gbps links. It also reduces inventory because cards are deployed only where and when needed, easing cash-flow burdens. Test equipment that supports multi-impairment monitoring offers further savings, since a single test platform can replace multiple sets of expensive single-purpose instruments.

Embedded into next-generation systems, live network health management capabilities will also create new possibilities. In-situ monitoring opens the door to improving system performance through active compensation using feedback control mechanisms, and to optimizing network provisioning and utilization. Networks will be able to locate where faults occur, recover from them, trigger repair work, and update inventory.
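Active compensation with feedback can be as simple as a hill-climbing loop: nudge a tunable dispersion compensator, keep the change if the monitored signal quality improves, and otherwise reverse direction with a smaller step. The sketch assumes a hypothetical `measure_quality` callback supplied by the in-situ monitor and a noise-free, single-peaked quality curve; `simulated_monitor` is a toy stand-in for the real network, and a production controller would average noisy readings and bound the tuning range.

```python
from typing import Callable


def tune_compensator(measure_quality: Callable[[float], float],
                     start_ps_nm: float = 0.0,
                     step_ps_nm: float = 100.0,
                     min_step_ps_nm: float = 1.0,
                     max_iters: int = 200) -> float:
    """Hill-climb a dispersion-compensator setting (ps/nm) to maximize quality."""
    setting = start_ps_nm
    best = measure_quality(setting)
    direction = 1.0
    step = step_ps_nm
    for _ in range(max_iters):
        if step < min_step_ps_nm:
            break
        trial = setting + direction * step
        quality = measure_quality(trial)
        if quality > best:
            setting, best = trial, quality   # improvement: keep moving the same way
        else:
            direction = -direction           # no improvement: turn around and refine
            step /= 2
    return setting


def simulated_monitor(residual_cd_ps_nm: float) -> Callable[[float], float]:
    """Toy plant: quality peaks when the compensator cancels the residual CD."""
    return lambda setting: -(residual_cd_ps_nm + setting) ** 2


if __name__ == "__main__":
    setting = tune_compensator(simulated_monitor(340.0))
    print(f"compensator setting: {setting:.1f} ps/nm (ideal: -340 ps/nm)")
```

The loop needs nothing from the monitor except a scalar quality reading, which is why live, in-service measurements are the prerequisite for this kind of closed-loop control.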

We should expect no less. Aircraft are maintained this way today: if a part fails, an alarm goes off, the plane adjusts itself, and the pilot is informed. The alarm also triggers the shipment of the right part to the maintenance hangar and the correct instructions to the technicians, so when the plane lands at its destination the repair crew is waiting and the plane is back in service with minimal delay.

Past experience shows that the next wave of network migration will bring its share of challenges and surprises, and in-service monitoring offers a means to address them. Operators will be much better able to measure, diagnose, and help their networks recover from optical impairments, making the best of their assets and continuing to squeeze value from every drop of bandwidth.

Olivier Jerphagnon is vice president of marketing and business development at mdi.
