Taking stock of premises-network performance

Will the network elements on your system measure up now and in the future?

DAN SCHAEFER, Ixia

Network designers would rarely argue with the following logic: It's better to learn about the limitations of a network design before deployment, rather than find performance problems later. Indeed, as users find more uses for their premises networks, such as tuning into Internet radio stations, the additional burdens can, without warning, place the network outside its capabilities, with disastrous results.

The solution to avoid network meltdown is to invest in performance measurement equipment, which consists of specialized hardware as well as finely tuned software. Such performance measurement equipment is used to stress networks with traffic volumes and types that exceed demand so that a margin of safety can be established. Without adequate measurement equipment and testing algorithms, designers are likely to depend solely on equipment vendors' specifications, word of mouth, or magazine reviews to extrapolate overall performance.

In fact, the degree of angst that network designers suffer is generally proportional to the size of the problems the network encounters after deployment. Many networks are deployed without thorough testing, and it is the job of network designers to weigh the risks of future network problems against investments in test equipment. Fortunately, network test equipment vendors continue to improve their products while maintaining reasonable price tags.

Premises-network designers must contemplate the following performance measurements before system deployment:

- Throughput. The system must be capable of faithfully delivering packets of data to all its nodes at a reasonable rate.

- Latency. Many types of traffic are sensitive to the time required to deliver data to its destination. The system must be designed to provide timely delivery of data to all its targets.

- Jitter. Also known as latency variation. Given that there is latency in the delivery of data, the system must be capable of ensuring that the variation in that latency does not exceed certain limits over time.

- Integrity. Data must not be corrupted as it traverses the system.

- Order. Some types of applications are sensitive to the order in which packets of data are delivered. In such systems, packet order must be preserved from transmitting station to receiving station.

- Priority. In the event of congestion, the system must discard some packets of data. The network should be capable of distinguishing between packets of differing priority and, under duress, discard those with lower priority.

Because there is a constant mixture of traffic types, premises-network test equipment ideally would implement a methodology that presents all types of traffic continuously throughout its test cycle.

The first step in qualifying performance generally involves the establishment of an acceptable terminology by which all things will be measured. Armed with such knowledge, the tester can then establish a methodology that will be used to produce compliant results. That may seem obvious, yet the implementation can be challenging, especially because network technology continues to advance, and what may prove sufficient today may be obsolete tomorrow.

For example, suppose the tester would like to measure the throughput of a network element. According to Internet Engineering Task Force (IETF) document RFC 1242, throughput is defined as "the maximum rate at which none of the offered frames are dropped by the device." That suggests that to determine the throughput of a network element, the tester would need to generate and send frames to the device at higher and higher speeds until it starts dropping frames. Of course, to determine whether the network element actually dropped frames, the tester would have to look at its output ports and account for every packet put into the device. Thus, each test packet would have to be uniquely identified so that a receiving port can isolate and count it. That precludes the use of network interface cards running on PCs. Even though an operating system may be highly streamlined, it cannot generate and count packets on optical networks running at gigabit-per-second speeds.

Premises-network equipment testing, therefore, requires specialized hardware with the following characteristics:

- Ability to generate (and, conversely, receive and analyze) specially marked frames at optical speed on multiple ports simultaneously.

- Synchronized transmit and receive ports to ensure accurate latency and jitter measurements.

- Ability to generate a special cyclic redundancy code over the data portion of each frame, thus highlighting any data corruption in the frame as it traverses the network.

- Ability to place special counters in the transmit frames and look for skipped, missing, or repeated count numbers in the receive frames.

- Ability to send frames of mixed priority and schedule such frames to challenge a network device's ability to prioritize the flows.
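The integrity and ordering checks in the list above can be illustrated in software, although real test equipment does this in hardware at line rate. The following is a minimal sketch under stated assumptions: the frame layout (a dictionary with `seq`, `payload`, and `crc` fields) and the function names `make_frame` and `analyze` are hypothetical, and CRC-32 from Python's standard `zlib` module stands in for whatever code a given tester actually uses.

```python
import zlib
from typing import Dict, List


def make_frame(seq: int, payload: bytes) -> Dict:
    """Build a test frame carrying a sequence counter and a CRC-32 of its payload."""
    return {"seq": seq, "payload": payload, "crc": zlib.crc32(payload)}


def analyze(received: List[Dict], frames_sent: int) -> Dict[str, int]:
    """Count corrupted, repeated, out-of-order, and missing frames."""
    corrupted = repeated = out_of_order = 0
    seen = set()      # sequence numbers observed so far
    highest = -1      # highest sequence number observed so far
    for frame in received:
        # Integrity: recompute the CRC over the payload and compare.
        if zlib.crc32(frame["payload"]) != frame["crc"]:
            corrupted += 1
        seq = frame["seq"]
        # Order: a counter seen before is a repeat; one lower than the
        # highest seen so far arrived late.
        if seq in seen:
            repeated += 1
            continue
        if seq < highest:
            out_of_order += 1
        seen.add(seq)
        highest = max(highest, seq)
    return {
        "corrupted": corrupted,
        "repeated": repeated,
        "out_of_order": out_of_order,
        "missing": frames_sent - len(seen),
    }


# Six frames are sent; frame 4 is lost, frame 2 arrives late and twice,
# and frame 5 has one payload bit flipped in transit.
sent = [make_frame(i, bytes([i]) * 46) for i in range(6)]
flipped = bytes([sent[5]["payload"][0] ^ 0x01]) + sent[5]["payload"][1:]
damaged = dict(sent[5], payload=flipped)
received = [sent[0], sent[1], sent[3], sent[2], sent[2], damaged]
report = analyze(received, frames_sent=len(sent))
print(report)
```

In this example each of the four counters comes out as one. The sketch assumes the sequence counter itself arrives intact; a production design would need to protect that field as well.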

A healthy dose of software is used to coordinate the hardware and present an environment in which to hunt down problems. The software, generally, fits into one of three categories:

- Roll your own. The tester is given the tools to design every detail of every frame transmitted on every port, as well as the traffic profile that the frame fits into. On the receive side, the tester specifies every type of filter necessary to capture and count the incoming frames. This type of software can be likened to a bag of building blocks, where the tester must construct each piece of the overall project, one block at a time.

- Pre-packaged. A set of software routines whose sole purpose is to perform industry-standard tests on network equipment. These software packages normally conform to a set of well-described tests and, as such, offer a great deal of consistency when comparing equipment from different vendors.

- Software libraries. These offer the opportunity to roll up your sleeves and write the routines to implement the tests. This sort of solution requires a significant commitment of resources for writing and testing the code, but it is perhaps the most flexible solution, especially for larger corporations with widely varying needs.

Simply having all the necessary pieces together does not constitute a complete solution. Network designers must decide which tests are appropriate for the network design and utilize the appropriate tools and methodology to ensure a successful implementation. Deciding on a methodology, in some respects, can be more of an art than a science. Yet, if network designers have a solid set of requirements, they can quickly focus on some basic standard tests. As further refinements in the design are implemented, the tests can be altered or rewritten as needed.

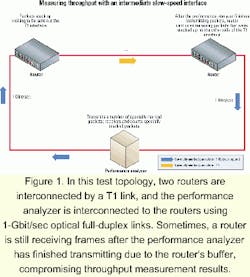

One of the simplest tests to consider is the measurement of standard throughput. A good methodology for testing equipment according to the previously mentioned RFC 1242 definition of throughput involves generating a sequence of frames at the maximum frame rate and presenting these frames to a device's ports. The tester can then observe the frames as they egress the device and keep an accurate log of the number of transmitted frames versus the number of received frames. If any frames are missing, the rate is halved, and the process is repeated. If, at any time during this process, all the frames get through, the next rate is chosen halfway between the rate that just succeeded and the lowest rate that failed. This binary search algorithm quickly narrows in on the final throughput. A more general approach is described in IETF document RFC 2544, yet both methods yield the same result: maximum throughput of a network element.

Most test equipment vendors implement the throughput test described here, yet a network designer still needs to be aware of various considerations that become important when dealing with subtler issues. Consider the test topology in Figure 1, where two routers are interconnected by a T1 link and the tester interfaces with the routers using 1-Gbit/sec optical links. The T1 link can only pass data at 1.536 Mbits/sec, whereas the optical link can pass data well in excess of 900 Mbits/sec, depending on frame size. It seems the throughput for 64-byte frames, measured in frames per second, would accurately show up as:

1,536,000 bits/sec / (64 bytes/frame x 8 bits/byte) = 3,000 frames/sec.

Yet, the actual number may differ for several reasons. First of all, the data-link-layer information in an Ethernet frame may not be passed through the T1 interface; instead, some sort of point-to-point protocol (PPP) link may be established, essentially removing the 12 bytes of media-access-control information in the Ethernet frame and replacing it with information in the PPP header.

Secondly, there may be problems with the measurement algorithm itself. If testers are not careful to understand the algorithm and the nature of the router, they may witness a higher rate of throughput than that expressed by the formula above. This problem crops up because the router will store frames in a buffer, and during the course of the test, the buffer fills up. When the transmission from the test equipment stops at the end of its test, the buffer continues transporting stored frames across the T1 interface, so frames that the link could not have carried during the test itself are still counted as delivered. In such instances, the throughput algorithm would need to be modified such that the actual frames being counted are embedded in a series of frames not counted. Figure 2 shows how to pack such frames while running this test.
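The binary search for throughput, and the way a buffer can inflate its result, can be sketched in a few lines. This is an illustration under stated assumptions rather than any vendor's implementation: `find_throughput` and `simulated_trial` are hypothetical names, and the simulated device is a deliberately crude model that forwards at a fixed capacity, holds up to `buffer_frames` extra frames, and drains them after the tester stops transmitting.

```python
from functools import partial


def find_throughput(run_trial, max_rate, resolution=1.0):
    """Binary-search for the highest rate (frames/sec) with zero frame loss.

    run_trial(rate) must return (frames_sent, frames_received).
    """
    sent, received = run_trial(max_rate)
    if received == sent:
        return float(max_rate)
    low, high = 0.0, float(max_rate)  # low: best passing rate, high: lowest failing rate
    while high - low > resolution:
        rate = (low + high) / 2
        sent, received = run_trial(rate)
        if received == sent:
            low = rate    # no loss: search the upper half
        else:
            high = rate   # loss: search the lower half
    return low


def simulated_trial(rate, capacity=3000.0, duration=10.0, buffer_frames=0):
    """Hypothetical device model, not a real router.

    The device forwards `capacity` frames/sec while the tester transmits,
    then drains up to `buffer_frames` stored frames afterward.
    """
    sent = int(rate * duration)
    deliverable = int(capacity * duration) + buffer_frames
    return sent, min(sent, deliverable)


# 64-byte frames/sec on 1-Gbit/sec Ethernet, counting preamble and interframe gap.
LINE_RATE_64B = 1_488_095

ideal = find_throughput(simulated_trial, LINE_RATE_64B)
buffered = find_throughput(partial(simulated_trial, buffer_frames=1000), LINE_RATE_64B)
print(f"no buffer: {ideal:.0f} frames/sec; 1,000-frame buffer: {buffered:.0f} frames/sec")
```

With a 10-sec trial, the 1,000-frame buffer raises the apparent throughput by roughly 100 frames/sec in this model, which is the overstatement described above. Counting only frames embedded in the middle of the stream removes the effect.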

Latency represents another basic performance measurement, yet the issues can become complex as the test evolves. RFC 1242 defines latency for bit-forwarding devices as "the time interval starting when the end of the first bit of the input frame reaches the input port and ending when the start of the first bit of the output frame is seen on the output port." Here, latency is defined with respect to the network element. The methodology recommended in RFC 2544 is as follows: First determine the maximum throughput of a network element, then transmit a continuous stream of frames at the throughput rate for 120 sec, with a "signature" frame transmitted at 60 sec. The signature frame is used to carry a transmit time stamp, which is then checked when the frame is received. The difference between the received time stamp and the transmitted time stamp is used to determine the latency. A number of separate trials is recommended; the final number is the average of the results. In general, this approach works well if the tester is trying to acquire a single number that is somewhat indicative of the network element's ability to forward frames; however, there is often more to consider when calculating latency.

Latency measurement, as described in RFC 2544, can become problematic if the signature frame gets dropped for some reason. That may cause the test equipment to wait indefinitely for the signature frame to arrive. A dropped signature frame should not occur, because the stream of frames is sent at a throughput rate that the network element can sustain. Yet, in real life, chaos has a way of sneaking into the equation, and in the event of a dropped frame, the test would be invalidated.

One way around this problem is to design a test algorithm that sends a signature frame every second, then captures all such frames. When transmission of the latency frames has concluded, the test equipment can pick out a frame at the center of its collection of captured frames and perform its latency measurement. If a signature frame is dropped, the test can still utilize another signature frame in its place. This approach makes the test more robust.
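The selection step of this modified test can be sketched as follows. The function name `latency_from_signatures` and the data layout (dictionaries mapping a signature frame's sequence number to its time stamp in microseconds) are assumptions made for illustration only.

```python
def latency_from_signatures(tx_times_us, rx_times_us):
    """Pick the signature frame at the center of those captured.

    tx_times_us: sequence number -> transmit time stamp (microseconds)
    rx_times_us: sequence number -> receive time stamp, for frames that arrived
    Returns (sequence number used, latency in microseconds).
    """
    captured = sorted(seq for seq in tx_times_us if seq in rx_times_us)
    if not captured:
        raise ValueError("no signature frames were captured")
    middle = captured[len(captured) // 2]
    return middle, rx_times_us[middle] - tx_times_us[middle]


# One signature frame per second for 120 sec; the frame sent at 60 sec is dropped.
tx = {seq: seq * 1_000_000 for seq in range(120)}
rx = {seq: t + 2_000 for seq, t in tx.items() if seq != 60}
seq_used, latency_us = latency_from_signatures(tx, rx)
print(seq_used, latency_us)
```

Here the test falls back to the frame sent at 59 sec and still reports a latency, where the single-signature-frame version would have had nothing to measure.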

This modified latency algorithm may not go far enough, however. Using this algorithm, the tester cannot measure latency variation or jitter. To accurately make such a measurement, testers would need to capture and analyze all frames in real time. They could then calculate minimum, maximum, and average latency over time.

To take this argument one step further, testers could put the minimum, maximum, and average latencies into a series of special buffers, switching to a new buffer periodically. That would yield latency variation over time. With such equipment, testers could profile a network element's latency behavior while other traffic patterns are run through the device, yielding a more accurate picture of the network element during high-stress conditions. Obviously, such test equipment must track latency using optimized hardware, as these types of measurements are far beyond the ability of a general-purpose processor to track at line rate.
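The bookkeeping behind such periodic buffers is simple, even though doing it on every frame at line rate is a job for hardware. The sketch below only illustrates the arithmetic on a handful of time stamps; the name `latency_profile` and the microsecond tuple format are hypothetical.

```python
from collections import defaultdict


def latency_profile(samples, interval_us):
    """Group per-frame latencies into fixed time intervals.

    samples: iterable of (tx_time_us, rx_time_us) pairs, one per frame
    Returns a list of (interval_start_us, min, max, average) tuples.
    """
    buckets = defaultdict(list)
    for tx_us, rx_us in samples:
        # A frame belongs to the interval in which it was transmitted.
        buckets[tx_us // interval_us].append(rx_us - tx_us)
    return [
        (index * interval_us, min(values), max(values), sum(values) / len(values))
        for index, values in sorted(buckets.items())
    ]


samples = [
    (0, 100),                  # 100 microseconds of latency
    (400_000, 400_300),        # 300
    (1_200_000, 1_200_200),    # 200
    (1_900_000, 1_900_200),    # 200
]
profile = latency_profile(samples, interval_us=1_000_000)
for start_us, lo, hi, avg in profile:
    print(f"t={start_us} us: min={lo} max={hi} avg={avg} spread={hi - lo}")
```

The spread between maximum and minimum in each interval gives a simple per-interval view of jitter, so a change in behavior under load shows up as a change from one interval to the next.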

Designers of premises networks must choose their equipment carefully if they are to achieve a performance that will meet their current and future needs. Since every premises network has its own unique requirements, designers must be aware of potential problems well in advance of deployment. Thus, they need to devise and conduct a series of performance tests on all network elements as part of their pre-deployment activities. System performance is measured in terms of throughput, latency, jitter, integrity, packet order, and packet priority.

There are a number of industry-standard test methodologies available, and prudent designers must choose among them to qualify their equipment. These methodologies may not go far enough in qualifying some aspects of the network equipment, in which case the tests must be modified accordingly.

To be of any use to designers, network test equipment must offer sufficient power and flexibility. The corresponding software should facilitate the simple execution of standard methodologies as well as maintain programmability for situations that don't conform to the standard methodologies. Armed with an effective design and qualified network equipment, premises-network designers can rest assured that their system will meet current and future needs.

Dan Schaefer is a system engineering manager at Ixia (Calabasas, CA).