Automating optical-component production testing

Instruments designed specifically for production testing help increase throughput and accuracy, often at lower cost than those designed for lab testing.

Paul Meyer, Keithley Instruments Inc.

Production test equipment for optical components has lagged behind the rapid sales growth of fiber-optic communications devices. The result is the migration of laboratory test equipment into production environments. While laboratory equipment may be accurate, test solutions based on this type of gear are less than optimal. Many optical-component test stands are plagued with instrument lockups, low throughput, excess operator interaction, and transposition error. Wafer-level testing before semiconductor devices are diced into individual components also requires special consideration.

Testing systems can be developed that provide both high throughput and measurement accuracy by combining novel measurement techniques with proven production-test instruments. These solutions take advantage of automation features on instruments designed specifically for PC-controlled testing and incorporate switching systems for rapid measurements on multiple components as well as on the semiconductor wafer plane. Furthermore, these systems can be developed as scalable test solutions with different levels of automation, making production expansion more cost-effective as the market for a given component grows.

One of the most common complaints with current lab-oriented optoelectronic test equipment is frequent lockups of the internal processor or general-purpose interface bus (GPIB) card in the instrument. While restarting an instrument in a laboratory environment has minimal impact on a researcher, recovering a stalled instrument in an automated manufacturing environment is time-consuming and costly. In an automated manufacturing environment, it is necessary to not only restart the instrument but also to rearrange work-in-process (WIP) to make sure that all devices under test (DUTs) are completely screened. Depending on the complexity of the test system, this task can be complicated and prone to error. The risk of having a component miss a critical test is significant.

Optoelectronic test equipment favored by researchers often lacks the raw measurement speed needed in a production environment. Much of this equipment was designed or specified by optical researchers who had a lab environment in mind. As a result, the design subtleties required for fast, low-level measurements have not been incorporated in these lab instruments. Commonly, lab instruments have only one measurement speed and level of accuracy. Besides raw speed, production instruments should be adjustable to provide the optimum tradeoff between speed and accuracy under different conditions.

In the laboratory, multiple instruments are typically used to measure multiple photodetectors. As a result, cost per channel is very high. In a production setting, a common solution for reducing cost per channel is to use an electrical multiplexer between the measurement instrument and the DUTs. This approach works well when an instrument is fast enough to acquire data and complete the measurements on a single DUT in less time than the component handler can cycle through multiple test points. It allows the multiplexer to scan several DUTs, signal sources, and measuring instruments during a given test cycle.
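The multiplexing condition described above comes down to simple arithmetic. The following is a minimal sketch, assuming hypothetical timing figures (a 500-msec handler cycle, a 20-msec measurement, 5 msec of relay settling) and a hypothetical instrument price; none of these numbers comes from a specific product.

```python
def duts_per_handler_cycle(handler_cycle_ms, measure_ms, switch_settle_ms):
    """Number of DUTs one instrument can serve through a multiplexer
    while the component handler completes a single cycle."""
    per_dut_ms = measure_ms + switch_settle_ms
    return handler_cycle_ms // per_dut_ms


def cost_per_channel(instrument_cost, channels):
    """Instrument cost spread across the multiplexed channels."""
    return instrument_cost / channels


# Hypothetical figures, chosen only for illustration.
channels = duts_per_handler_cycle(handler_cycle_ms=500, measure_ms=20, switch_settle_ms=5)
print(channels)                            # DUTs served per handler cycle
print(cost_per_channel(20000, channels))   # cost per channel with multiplexing
```

If the measurement time grows until only one DUT fits in a handler cycle, the multiplexer no longer reduces cost per channel, which is why raw instrument speed matters here.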

To withstand the rigors of 24/7 operation, optoelectronic test instruments must have a high mean time between failures (MTBF), long-term (one-year) accuracy, low temperature coefficients, and robust firmware.

All test equipment on a production line must have a high MTBF. Lower MTBF requires more spare-parts inventory and high operator and technician staffing levels. Even one instrument with a poor MTBF can substantially impact production-line viability.

Production-grade instruments typically have a calibration life of one year. Shorter calibration periods, particularly the need for frequent onsite calibrations, have a negative impact on the total uptime of a test stand. When there is no way to avoid frequent calibrations (daily or more often), this routine should be incorporated in the automation package, which ensures implementation on a timely basis without human intervention.

Although many lab environments are climate controlled, most production environments are less predictable. Therefore, production-grade instruments must have low temperature coefficients to minimize drift and ensure accurate measurements.

While an instrument's MTBF number is important, it typically does not include lockups as a failure mode. Unfortunately, frequent lockups requiring manual intervention often have a larger impact on production throughput than MTBF figures suggest. Usually, robust operation with minimal lockups depends on instrument firmware that has been thoroughly tested in the user's type of test environment.

In a production environment, a test system is designed for maximum throughput. To achieve this goal, test instruments must have programmable features that allow flexible control of the measurement sequence. A test engineer needs the flexibility to optimize system performance through measurement accuracy and throughput tradeoffs. This calls for instrument programming that controls the way noise is handled as well as how triggering is accomplished.

A production-grade instrument allows control of the integration period of the unit's analog-to-digital (A/D) converter. Setting the integration period involves a tradeoff between the time it takes to complete a measurement and the amount of line noise suppression achieved. The latter is a major determinant of measurement accuracy. For example, when the light emitted from a laser diode is at the low end of its range, the corresponding current signal from a photodiode detector can be so low that it approaches line noise levels on the signal. With proper adjustment of the instrument's integration period, the signal-to-noise ratio can be improved without excessive measurement time.

The integration period is often specified as a number of power-line cycles (NPLC). This terminology stems from the fact that the strongest electrical noise in most plants comes from the fields radiating from AC power lines. Since the A/D converter takes many samples for each measurement, setting the integration period to NPLC = 1 (or an integer multiple thereof) effectively averages the induced power-line noise out of the reading. The tradeoff is that a reading then takes 1/60th of a second (16.7 msec) on a 60-Hz line, when the instrument could conceivably do it in a fraction of that time. The use of good electrical grounding and shielding practices on the DUT fixture will allow a shorter integration period. The test engineer may be able to reduce the NPLC setting to a small fraction in the range of 0.1 to 0.01 and get much higher throughput.
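The NPLC tradeoff can be illustrated numerically. The sketch below assumes a 60-Hz power line and models the interference as a single sine wave of unit amplitude; the function names are illustrative and do not correspond to any instrument's command set.

```python
import math


def integration_time_s(nplc, line_hz=60.0):
    """Integration period in seconds for a given NPLC setting."""
    return nplc / line_hz


def residual_line_noise(nplc, line_hz=60.0, phase=0.0, steps=10_000):
    """Average of a unit-amplitude line-frequency sine over the integration
    window, computed with a midpoint-rule sum. This is the part of the
    interference that survives in the reading."""
    dt = integration_time_s(nplc, line_hz) / steps
    total = 0.0
    for i in range(steps):
        t = (i + 0.5) * dt
        total += math.sin(2.0 * math.pi * line_hz * t + phase)
    return total / steps


for nplc in (1, 0.1, 0.01):
    print(nplc, integration_time_s(nplc), residual_line_noise(nplc))
```

At NPLC = 1 the residual is essentially zero regardless of phase. At fractional settings the residual depends on where in the line cycle the reading starts, which is why shorter integration periods rely on good grounding and shielding to keep the interference small in the first place.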

Instead of using an integrating A/D converter (ADC), some instruments use a fixed-rate ADC and average a number of measurements. At first glance, this technique may appear to produce the same results, but it is fundamentally different in the frequency domain. With true integration in the time domain, the instrument's spectral response is a low-pass function. The longer the integration time, the lower the passband and the greater the attenuation of higher frequencies. By contrast, simple averaging of a number of fixed (usually short) sample pulses actually reduces the low-frequency noise but may enhance the noise spectrum at the ADC sampling rate (or multiples thereof). For a given time period, true integration provides more noise suppression than averaging multiple samples captured at a higher sample rate.
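The frequency-domain difference can be sketched with two textbook magnitude responses. This is an idealized comparison, assuming a perfect integrator on one side and instantaneous (zero-aperture) samples on the other; the 600-Hz sample rate and 10-sample average are hypothetical values chosen so that both methods span one 60-Hz line cycle.

```python
import math


def integrator_response(f_hz, t_int_s):
    """|H(f)| of an ideal integrator over a window T: |sin(pi f T) / (pi f T)|."""
    x = math.pi * f_hz * t_int_s
    return 1.0 if x == 0 else abs(math.sin(x) / x)


def sample_average_response(f_hz, n_samples, fs_hz):
    """|H(f)| of the mean of N instantaneous samples taken at rate fs:
    |sin(N pi f / fs) / (N sin(pi f / fs))|."""
    x = math.pi * f_hz / fs_hz
    denom = n_samples * math.sin(x)
    if abs(denom) < 1e-12:   # f is zero or a multiple of fs: limit value is 1
        return 1.0
    return abs(math.sin(n_samples * x) / denom)


fs_hz, n = 600.0, 10          # hypothetical fixed-rate ADC settings
t_int_s = n / fs_hz           # same total window, 1/60 sec
for f in (60.0, 300.0, 600.0, 1200.0):
    print(f, integrator_response(f, t_int_s), sample_average_response(f, n, fs_hz))
```

In this model both methods null the 60-Hz line, but interference at the sampling rate or its multiples passes through the sample average unattenuated, while the integrator continues to roll off.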

Many laboratory instruments do not provide control of the delay between the time when a new source current or voltage is applied to an optocomponent and when the next measurement of the corresponding output is made. Production-grade instruments allow tight control of the internal delays so that a test engineer can compensate for cable lengths, DUT response time, etc.

When robotic automation is added to a test stand for component handling, for example, cable lengths between the instruments and DUT must often be lengthened to accommodate the resulting physical dimensions. Longer cable lengths increase parasitic capacitance, resistance, and inductance of the cables. To compensate, the delay between the application, or change in a source signal, and the start of a measurement cycle on the DUT must be increased to allow adequate settling time for the DUT signal.
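A rough feel for the required delay comes from a single-pole RC model of the cable. This is a minimal sketch under stated assumptions: the cable behaves as a lumped capacitance of about 100 pF per meter, the DUT presents a hypothetical 1-MOhm source resistance, and inductance is ignored. Real fixtures need measured values.

```python
import math


def settling_delay_s(source_resistance_ohm, cable_pf_per_m, cable_length_m, tolerance):
    """Time for a single-pole RC response to settle within `tolerance`
    (a fraction of the step, e.g. 0.001 for 0.1%): t = R * C * ln(1 / tolerance)."""
    capacitance_f = cable_pf_per_m * 1e-12 * cable_length_m
    tau_s = source_resistance_ohm * capacitance_f
    return tau_s * math.log(1.0 / tolerance)


# Assumed values: 1-MOhm source, 100 pF/m cable, settle to 0.1%.
for length_m in (1.0, 5.0):
    print(length_m, settling_delay_s(1e6, 100.0, length_m, 0.001))
```

Under these assumptions, lengthening the cable from 1 m to 5 m raises the needed source delay from roughly 0.7 msec to about 3.5 msec, which is the kind of adjustment a programmable delay makes possible.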

Programmable trigger delay allows precise control of the timing between multiple instruments. This feature often is necessary to coordinate the measurement cycles of several instruments and sources on a test stand. Many laboratory instruments do not have adequate provisions for trigger input and output, and those that do often lack programmable trigger delays. External trigger controllers can compensate for the absence of this feature, but they add hardware and software complexity.

Most of the optoelectronic lab equipment available today has some form of a PC interface to automate control and data collection. The majority of these provide GPIB or RS-232 interfaces; a few have a Universal Serial Bus (USB) or Ethernet interface. In a production test environment, it is best to use a single instrument-bus interface standard to streamline the programming and troubleshooting environment. At this juncture, GPIB is the most widely available bus and therefore is recommended for production environments.

The price and performance of IBM-compatible PCs make these computers the dominant choice for automated test executive platforms. Most test engineers select Windows NT or Windows 2000 to ensure a robust operating environment for these platforms. However, regardless of programming language and technique, this combination does not provide precision-timing control.

Many optocomponent tests require uniform timing during an I-V sweep (i.e., while applying a range of currents and measuring the resulting voltages). For instance, during a sweep of the injection current of a laser diode, many measurements hinge on uniform timing. The limited real-time capability of a PC/Windows NT platform requires that critical timing issues be offloaded to the test instruments. Production-grade instruments allow a test sequence to be stored in internal memory and executed with precise timing at high speeds. A suitable source-measurement instrument can step through a series of voltages and currents applied to an optocomponent and make precise, critically timed measurements without the assistance of the test executive. This accelerates production testing by cutting down on GPIB traffic and frees the test executive for other tasks, such as robotic control. To facilitate interaction with other instruments, a production-grade instrument supports the Standard Commands for Programmable Instruments (SCPI) trigger model.
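The reduction in bus traffic can be estimated with a simple message count. The sketch below is a model, not instrument code: it assumes a point-by-point sweep costs one write and one query per point, and that a stored sweep needs a hypothetical six configuration writes plus a single query that returns all readings.

```python
def bus_messages_point_by_point(points):
    """PC-driven sweep: one write to set the source level and
    one query to fetch the reading, repeated for every point."""
    return 2 * points


def bus_messages_stored_sweep(setup_commands):
    """Instrument-driven sweep: configuration writes, then a single
    query that triggers the sweep and returns every reading."""
    return setup_commands + 1


points = 100          # hypothetical sweep length
setup_commands = 6    # hypothetical number of configuration writes
print(bus_messages_point_by_point(points))
print(bus_messages_stored_sweep(setup_commands))
```

Beyond the smaller message count, the stored sweep removes the PC from the timing loop, so point-to-point spacing is set by the instrument rather than by the operating system.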

Many automated test stands use a switch matrix to allow a single instrument to measure multiple DUTs. This instrument must have control features that facilitate cold switching (i.e., without applied voltage or current) and automatic scanning of the DUTs. These features allow rapid testing without causing premature failure of relay contacts that might result from switching under load.
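The ordering that cold switching requires can be sketched with a simulated test stand. The class and method names below are hypothetical stand-ins for a real source and relay matrix; the only point is the sequence of operations, with the source output disabled whenever a relay changes state.

```python
class SimulatedStand:
    """Stand-in for a source plus relay matrix; records every action."""

    def __init__(self):
        self.output_on = False
        self.closed_channel = None
        self.log = []

    def set_output(self, on):
        self.output_on = on
        self.log.append(("output", on))

    def switch_to(self, channel):
        if self.output_on:
            raise RuntimeError("hot switching: relay operated under load")
        self.closed_channel = channel
        self.log.append(("close", channel))

    def measure(self):
        self.log.append(("measure", self.closed_channel))
        return 0.0  # placeholder reading; a real stand returns instrument data


def cold_switched_scan(stand, channels):
    """Scan DUT channels, removing the source before each relay operation."""
    readings = {}
    for channel in channels:
        stand.set_output(False)   # no voltage or current while relays move
        stand.switch_to(channel)
        stand.set_output(True)
        readings[channel] = stand.measure()
    stand.set_output(False)       # leave the stand in a safe state
    return readings


stand = SimulatedStand()
print(cold_switched_scan(stand, [1, 2, 3]))
```

An instrument with built-in scanning support runs this sequence internally, so the test executive does not have to issue each step over the bus.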

In a production test environment, data management is crucial in making cost-effective disposition of the DUTs. Starting with the earliest segments of the production cycle, it is the norm to collect and archive data from key tests, which are then analyzed to determine the components that will continue as WIP stock and those that are scrapped or reworked. Production instruments are designed to facilitate this data collection and analysis without excessive involvement of the test executive.

Many lab instruments present measurement results to the GPIB one measurement at a time. A production-grade instrument allows the test engineer to select a format for the measurement data and to determine when it will be transferred to the test executive. Such instruments can be programmed to record measurement results for an entire test sequence before data is transferred. Then when a test sequence is completed, the transfer can take place in either ASCII mode or binary mode. Binary mode transfers require fewer bytes per measurement point. The overhead for requesting data is minimized by transferring all the measurement results from a test sequence in one block of data. These techniques reduce the bus traffic to a fraction of that required by a typical lab instrument.

A common class of optical power meters biases a photodiode and measures the photocurrent to determine the optical power. The photodiode has a dark current that must be characterized and removed from the final measurement. Production test instruments often have math functions that can be used to accomplish this. For example, a scaling and offset math function in the form of a straight line equation (y = mx + b) might be used for that purpose based on stored values from multiple measurements. Again, this offloads that task from the test executive and cuts down on bus traffic.
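The dark-current correction maps directly onto the y = mx + b form. The sketch below assumes a hypothetical photodiode responsivity of 0.9 A/W and a dark current near 2 nA averaged from three stored readings; in an instrument, m and b would be loaded into its math function rather than computed on the PC.

```python
from statistics import mean


def mx_b_coefficients(responsivity_a_per_w, dark_readings_a):
    """Return (m, b) so that optical power = m * photocurrent + b.
    m converts amperes to watts; b removes the averaged dark current."""
    dark_current_a = mean(dark_readings_a)
    m = 1.0 / responsivity_a_per_w
    b = -dark_current_a / responsivity_a_per_w
    return m, b


def optical_power_w(photocurrent_a, m, b):
    """Apply the straight-line scaling and offset to one reading."""
    return m * photocurrent_a + b


# Assumed values for illustration only.
m, b = mx_b_coefficients(0.9, [1.9e-9, 2.0e-9, 2.1e-9])
print(optical_power_w(902e-9, m, b))   # close to 1 microwatt
```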

In many test scenarios, a large number of measurements are made, but only the minimum and maximum values are important to the test results. For example, the polarization-dependent loss (PDL) of an optical component is determined by sweeping through the Poincaré sphere with a polarization controller and recording the optical power with a photodetector. It is too slow and costly to download hundreds of measurements and then use the test executive to find the minimum and maximum values.

In a typical minimum/maximum test scenario, an 8x1 optical multiplexer is characterized to find the PDL. The polarization controller is used to sweep through the Poincaré sphere while measurements are taken on eight photodetectors by eight instruments. Rather than downloading 8x1,000 measurements, only 16 minimum/maximum results calculated by the instruments have to be transferred. This level of throughput improvement easily reduces the number of test stands needed on a production line.
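The data reduction in this scenario can be sketched as follows. PDL is computed with the usual definition, 10·log10(Pmax/Pmin); the eight channels of 1,000 readings are synthetic, generated from a hypothetical 1% power ripple, and the per-channel minimum and maximum stand in for values an instrument would track internally.

```python
import math


def pdl_db(p_min_w, p_max_w):
    """Polarization-dependent loss in dB from minimum and maximum power."""
    return 10.0 * math.log10(p_max_w / p_min_w)


def synthetic_sweep(base_power_w, points=1000, ripple=0.01):
    """Stand-in for readings taken while sweeping polarization states."""
    return [base_power_w * (1.0 + ripple * math.sin(0.1 * k)) for k in range(points)]


channels = [synthetic_sweep(1e-3 * (ch + 1)) for ch in range(8)]

values_if_downloaded = sum(len(readings) for readings in channels)    # full data set
min_max = [(min(readings), max(readings)) for readings in channels]   # kept in the instruments
values_transferred = 2 * len(min_max)

print(values_if_downloaded, values_transferred)
for p_min, p_max in min_max:
    print(round(pdl_db(p_min, p_max), 4))
```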

There are different degrees of automation to consider for a production test system. Higher degrees of automation reduce operator interaction while improving throughput and accuracy. Typically, automation occurs along three different dimensions: component handling, test sequencing, and data flow. There are three levels of automation along these three dimensions, which have evolved from manual methods (see Figure on page 66).

The region nearest the origin in the Figure represents the automation level in a typical optoelectronics research lab. Here, a PC is used to sequence through the test by configuring each instrument for each test and collecting the resulting data one measurement at a time. This level of automation removes transcription errors and instrument-setup errors while improving the throughput by orders of magnitude. It is a good first step in gaining the benefits of automation.

The middle region in the Figure is the level of automation found in most optoelectronic production environments. While the test sequencing and data flow are well developed, the typical test platform offers little component handling. Production worthiness has a high priority in this environment. This level of automation is not found in labs, primarily due to the cost of developing these custom automation schemes.

The outer region of the Figure represents the leading edge of automation today. This level of automation places high-performance demands on measuring instruments. Systems with a high level of component-handling automation make it considerably more difficult to recover from an instrument failure or lockup. A fully automated robotic handling system may have tens of components at various stations at any given time. A single instrument lockup could require manual repositioning of all components in the system as part of the recovery process.

By testing optoelectronic components on the semiconductor wafer, process and yield problems can be found much sooner, thereby avoiding useless testing later on in the production process. Advances in optoelectronic device design and probing technologies are paving the way for more extensive wafer-plane testing and the tighter process control it provides.

Many devices can be tested on the wafer plane. Although photodetectors have always been suited to wafer-level testing, edge-emitting laser diodes must be processed to the point where they are cut into strips (also called bars) to expose their emitting edge for measurements. Vertical-cavity surface-emitting lasers (VCSELs), on the other hand, emit photons perpendicular to the wafer plane and are conducive to wafer-level testing without dicing. Some of the micro-electromechanical systems (MEMS) used for optical switching have also been designed for wafer-level testing.

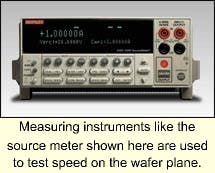

Avalanche photodiode (APD) and PIN detectors have been tested at the wafer level for years. Recently, their physical dimensions were reduced to minimize parasitic capacitance and improve bandwidth. These 10-Gbit/sec devices now require leading-edge probing technology as well as calibrated illumination for accurate testing. To optimize test speed on the wafer plane, an array of detectors must be uniformly illuminated with a suitable light source, and tens of devices are scanned and simultaneously characterized (see Photo). Accurate dark-current measurements also make it mandatory to conduct wafer-level tests in a light-tight environment that minimizes polarization losses.

There are two basic ways to collect light emitted from the wafer plane while testing VCSELs and certain MEMS switches: large-area detectors and integrating spheres. The integrating sphere provides the best measure of total optical radiated power and is relatively immune to PDL. Large-area detectors can also be designed to minimize PDL and provide a high-level signal for optical power similar to that of an integrating sphere.

Even the smallest integrating sphere suspended over a wafer will collect light from tens of VCSELs simultaneously. Therefore, only one VCSEL under the sphere can be powered and tested at a time. Parallel device tests require fiber-optic light-collection probes.

Wafer-plane optical-interface technology must be improved to allow expanded parallel testing of light-emitting devices. Today, VCSEL and MEMS manufacturers are working to develop this technology for their devices, which should also be suitable for the testing of planar waveguides. As the technology matures, many other devices will undoubtedly be tested at the wafer plane.

Currently, the basic fiber-optic detection probe for collecting or coupling light from the wafer plane is a gradient index (GRIN) lens bonded to a singlemode fiber and fitted with a photodetector. GRIN lenses are available in a range of formats, including single lenses, thermally formable lenses, and lens arrays. Given the high density of typical optoelectronic-component wafers such as APDs and VCSELs, a GRIN lens array offers the best hope for effective wafer-plane production testing.

In one proposed design, a column of GRIN lenses is mounted on the probe card that supports the electrical probes making contact with the wafer. When lowered into position, the electrical probes contact the VCSEL probe pads while the GRIN lenses couple the optical output to an array of detectors. In this way, parallel testing of many VCSELs can be achieved.

To perform functional testing on MEMS switches and planar waveguides, a light source must be coupled to the device's optical input. By using the same GRIN lens technology used in collecting light, it is possible to couple a semiconductor-laser module to an optical input with a fiber. Still, use of this technique requires control of backreflections, coupling efficiency, and polarization effects.

It is also important to limit self-heating of wafer devices during these tests. This isn't much of a problem with detector and switch testing, but VCSELs are more prone to self-heating damage. Cooled wafer chucks are one way to dissipate the heat, but localized thermal gradients may still induce mechanical stresses that degrade these devices. The best solution is to reduce power dissipation by shortening test times or by driving the device with low-duty-cycle pulses during each test.
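The benefit of pulsed testing follows from average-power arithmetic. The sketch below treats all electrical input as heat, which is an upper bound since some power leaves as light, and uses hypothetical drive values (2 V, 10 mA) rather than data for any particular VCSEL.

```python
def average_dissipation_w(forward_voltage_v, drive_current_a, duty_cycle):
    """Upper-bound average power dissipated in the device under pulsed drive:
    peak electrical power multiplied by the fraction of time the pulse is on."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return forward_voltage_v * drive_current_a * duty_cycle


# Assumed drive conditions for illustration.
print(average_dissipation_w(2.0, 0.010, 1.0))    # continuous drive
print(average_dissipation_w(2.0, 0.010, 0.01))   # 1% duty cycle
```

At a 1% duty cycle the average dissipation falls by a factor of 100 relative to continuous drive, provided the measurement can still be completed within each pulse.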

Although tests on the wafer plane require probing equipment, the measuring instruments attached to the prober should have the features and performance capabilities discussed here. The instrumentation (source-measurement units, switching matrixes, special power supplies) will include software that makes it easy to integrate the entire system.

Paul Meyer is a senior applications engineer in the component test group at Keithley Instruments Inc. (Cleveland). He can be reached at 440-498-2773 or by e-mail at [email protected].