Rapid service delivery with optical switching

Optical switches will manage traffic throughout the network and act as a gateway between network layers.

ERIC PROSSER, Broadwing Communications

Strong demand for network capacity coupled with aggressive delivery intervals has been the driving force behind the evolution of network architecture to incorporate optical-switching technology. Service providers compete with buying power, available capital, internal processes, and highly skilled engineers at a frenetic pace to meet the challenge of matching demand with supply.

Recent technology advances offer service providers additional building blocks with which to construct their networks. Enabling technologies include Raman amplification for ultra-long-haul core networks, along with development of vertical-cavity surface-emitting lasers (VCSELs), micro-electromechanical systems (MEMS), and tunable-laser technologies for optical switches. Network architectures deployed today exploit the potential of optical switching to enable rapid delivery of services with customer-defined protection.

Optical switching is the cornerstone of the new network architecture. The promise of optical switches has fueled innovation in product design, manufacturing, and network deployment. The goal is to use the intelligence of the switch to rapidly turn up circuits with point-and-click provisioning and manage traffic around network bottlenecks and failures.

Carriers traditionally respond to demand by deploying significant levels of raw transport capacity (both wavelength count and channel size), but then terminate this capacity in fiber crossconnect panels at major network junctions. Optical circuits are then provisioned across the network through dedicated paths, which are connected and accessed across the crossconnect panels. Dedicated line terminating equipment is deployed to fill these paths in discrete SONET increments, while stranding the remaining bandwidth.
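The cost of this approach can be made concrete with a little arithmetic. The sketch below is illustrative only: the function name and the circuit mix are hypothetical, and it relies solely on the fact that an OC-n signal carries n STS-1 slots.

```python
def stranded_sts1(path_oc: int, circuits_oc: list[int]) -> int:
    """Return the number of STS-1 slots left idle on a dedicated OC-`path_oc` path.

    `circuits_oc` lists the OC level of each circuit filling the path
    (an OC-n circuit occupies n STS-1 slots).
    """
    used = sum(circuits_oc)
    if used > path_oc:
        raise ValueError("circuits exceed the capacity of the path")
    return path_oc - used


# Hypothetical fill: an OC-48 path carrying one OC-12 and two OC-3 circuits.
idle = stranded_sts1(48, [12, 3, 3])
print(f"{idle} of 48 STS-1 slots are stranded")
```

In this made-up case, more than half of the dedicated path sits idle until another circuit happens to need exactly that route.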

These networks are predominantly SONET rings or linear transport systems. The network is designed segment by segment, span by span, and high-speed circuits are equipped and provisioned across the network segment by segment and span by span. The resulting network complexity requires manual intervention at multiple network points, creating delivery intervals measured in weeks and months. As demand continues to increase, this "network on demand" approach will consume planning, engineering, provisioning, and operations resources at greater and greater rates.

Ultimately, carriers compete on their ability to rapidly deliver services. The initial race among service providers was to obtain a national footprint with coast-to-coast fiber networks; the next race was to lay fiber to connect end users to these networks. The carriers that win will provide networks that come closest to real-time end-to-end provisioning.

Advances in optical switching continue the trend toward merging ATM, Internet Protocol (IP), and transport-layer technologies. The backbone network becomes a "cloud" with simple ingress and egress points. Ultimately, customers will be able to buy high-speed capacity (up to 40 Gbits/sec), define quality of service, and dynamically reconfigure their circuits across a network with no intervention by the service provider.

With intelligent network-aware switches, new traffic can be routed efficiently around bottlenecks. Moreover, as network constraints are resolved, existing network resources can be optimized with automatic traffic grooming. As technology progresses, network engineers will be able to add a new DWDM system and allow the optical switch to optimize the network by grooming traffic to the new system. Additions to one layer or region of the network will automatically resolve constraints in other parts of the network.
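One way to picture network-aware routing is a shortest-path search that simply ignores any span without enough free capacity. The following is a minimal sketch under that assumption, not a description of any vendor's algorithm; the three-node topology, distances, and capacity figures are hypothetical.

```python
import heapq


def route(links, src, dst, demand):
    """Return (total_km, path) for the shortest route with enough free capacity.

    `links` maps (node_a, node_b) to (length_km, free_channels). Spans with
    fewer than `demand` free channels are treated as bottlenecks and skipped.
    Returns None when no feasible route exists.
    """
    graph = {}
    for (a, b), (km, free) in links.items():
        if free >= demand:
            graph.setdefault(a, []).append((b, km))
            graph.setdefault(b, []).append((a, km))

    heap = [(0, src, [src])]
    visited = set()
    while heap:
        dist, node, path = heapq.heappop(heap)
        if node == dst:
            return dist, path
        if node in visited:
            continue
        visited.add(node)
        for nxt, km in graph.get(node, []):
            if nxt not in visited:
                heapq.heappush(heap, (dist + km, nxt, path + [nxt]))
    return None


# Hypothetical network: the direct A-B span is full, so traffic detours via C.
links = {("A", "B"): (500, 0), ("A", "C"): (400, 4), ("C", "B"): (300, 4)}
print(route(links, "A", "B", demand=1))

# A new DWDM system adds channels on A-B; the same request now takes the direct span.
links[("A", "B")] = (500, 8)
print(route(links, "A", "B", demand=1))
```

The second call hints at how added capacity in one place can relieve a constraint elsewhere: nothing about the request changes, only the network state the switch sees.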

As Multiprotocol Lambda Switching standards are developed, optical switches will integrate point-and-click provisioning, traffic-engineering functions, and restoration capabilities with label-switching routers. This integration will allow high-speed networks to be more responsive to traffic flow, ultimately resulting in instant provisioning of service by customers across a carrier's network. For example, an end-to-end 40-Gbit/sec connection could be provided for a customer in the same time that a telephony circuit is established today.

Equipment vendors typically segregate the optical and electrical switch fabrics into separate products, with different roles in the network. Beyond service-level granularity, the switching philosophy of these products progresses from STS-1 through wavelength, band, and fiber switching.

STS-1 switching will be required to aggregate and protect service domain traffic, classified as services at OC-12 (622 Mbits/sec) and below. Wavelength and band switching will route the optical transport-rate traffic across the core. Fiber switching is generally thought of as a replacement for lightwave crossconnect panels, but it can be deployed to support switching of entire core DWDM systems.
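The split between the two fabrics follows directly from the SONET rate hierarchy, in which an OC-n signal runs at n times the 51.84-Mbit/sec STS-1 rate. The sketch below is illustrative: the function names are hypothetical, and treating every rate above OC-12 as optical transport-rate traffic is a simplifying assumption.

```python
STS1_MBPS = 51.84  # SONET STS-1 line rate in Mbit/s


def oc_rate_mbps(n: int) -> float:
    """Line rate of an OC-n signal in Mbit/s."""
    return n * STS1_MBPS


def switching_layer(n: int) -> str:
    """Assumed rule: OC-12 and below is service domain traffic for the STS-1 fabric."""
    return "STS-1 fabric" if n <= 12 else "wavelength/band switching"


for level in (3, 12, 48, 192):
    print(f"OC-{level}: {oc_rate_mbps(level):.2f} Mbit/s -> {switching_layer(level)}")
```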

Switches in each of these spaces are being deployed in networks today. Available products have different combinations of strengths and weaknesses. Each layer of network architecture has unique characteristics, so a product well suited for deployment on the edge of the network will most often be inefficient in the core. A best-of-breed optical strategy by service providers will require that switches deployed in each layer interact with standard protocols to efficiently route traffic across the network.

First-generation optical switches will replace the broadband crossconnect switch and become the gate node between regional and core networks. Carriers will build their networks around this architecture to take advantage of the ease of provisioning, restoration, traffic management, and the service-ready characteristics of these switches. The architectures will include dedicated bandwidth conduits between switches and STS-1 granular switching to efficiently aggregate traffic. Furthermore, the networks will be deployed to optimize the role of the core, regional, and metropolitan layers.

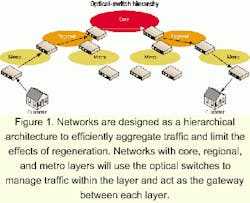

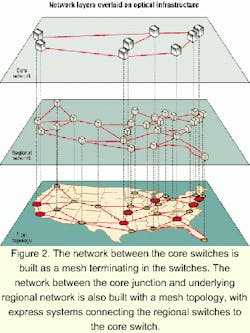

Providers will pre-position capacity from the edge points-of-presence to the optical switch and pre-position capacity between optical switches. The resulting bandwidth conduits will look and feel like trunks in a switch network. Customers will buy capacity into the switch as a conduit for on-demand services. Initially, the switches will route traffic over dedicated wavelength conduits. As technology advances, the switches will translate and route wavelengths over dedicated fiber conduits.

Networks are designed as a hierarchical architecture to efficiently aggregate traffic and limit the effects of regeneration. Networks with core, regional, and metro layers will use the optical switches to manage traffic within the layer and act as the gateway between each layer (see Figure 1). The network between the core switches will be built as a mesh terminating in the switches. The network between the core junction and underlying regional network can be built with a mesh topology, with express systems connecting the regional switches to the core switch (see Figure 2).

These networks are distinctly different designs. Capacity in the metro layer is aggregated to feed capacity into the regional layer, which is aggregated to feed capacity into the core. The primary function of the core network is to carry capacity between core optical switches with a minimal level of regeneration, meaning that the core network is optimized for express links and built over ultra-long-haul transport equipment. The regional network must be flexible enough to service both intra-regional traffic (short paths) and inter-regional traffic (long paths). In contrast, the average distance of a circuit in the metro layer will be relatively short, and the role of regeneration is insignificant.

Core optical switches route wavelengths at backbone fiber junctions, keeping the traffic in the optical domain and eliminating unnecessary optical-electrical-optical conversions. The cost of these conversions can be measured by the additional latency, failure points, floor space, and hardware expense introduced. The closer the switch is to the core, the larger the role of the optical matrix.

While the demand for optical rate circuits for application platforms and private-line customers continues to increase, the primary demand across networks is still in the service domain at speeds of OC-12 and below. The closer the switch is to the edge of the network, the greater the role of the STS-1 granular switch fabric. The STS-1 granular switch fabric's primary role is to efficiently bundle service domain traffic across the optical domain in an effort to minimize the cost of stranded bandwidth.

A gating item to the widespread deployment of STS-1 granular switches is the available unprotected OC-48 matrix size (256x256 to 512x512). That represents a significant increase beyond the matrix afforded by a traditional digital-crossconnect switch. But to the extent that the core switch replaces other time-division multiplexing elements and switches optical domain traffic (OC-48s and above), this matrix will be quickly exhausted.
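A rough port count shows how quickly that can happen. The sketch below assumes the matrix size is expressed in OC-48-equivalent ports, so that an OC-192 termination consumes four of them; the function name and the traffic mix are hypothetical.

```python
def oc48_ports_used(terminations: dict[int, int]) -> int:
    """Return the OC-48-equivalent ports consumed by optical-rate terminations.

    `terminations` maps an OC level that is a multiple of 48 (48, 192, ...)
    to the number of ports terminated at that rate.
    """
    total = 0
    for level, count in terminations.items():
        if level % 48 != 0:
            raise ValueError(f"OC-{level} is not a multiple of OC-48")
        total += (level // 48) * count
    return total


MATRIX_PORTS = 256  # smaller matrix size cited in the text

# Hypothetical junction: 100 OC-48 and 40 OC-192 terminations.
used = oc48_ports_used({48: 100, 192: 40})
print(f"{used} of {MATRIX_PORTS} ports needed; exhausted: {used > MATRIX_PORTS}")
```

Under these assumed numbers, a few dozen OC-192 terminations are enough to overrun the smaller matrix before any service domain traffic is aggregated.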

Optical switches will automatically reroute wavelengths around fiber cuts or equipment failures and enable customers to choose from a menu of protection classes. Carriers will build one network and offer different classes of service: unprotected, automatic protection switching, ring, or mesh. The services will be defined in the switch rather than by the transport system. Protection of traffic across transport networks is being pushed from fully redundant SONET rings toward distributed mesh networks.

Restoration strategies with first-generation optical switches are performed by switching line-rate circuits in the electrical matrix to a different wavelength route or switching wavelengths between fibers. As work on tunable lasers continues, carriers will have the flexibility of installing line-side transmitter ports in the switch capable of a spectrum of frequencies, rather than deploying a wavelength-dependent inventory of core capacity. Switches will be able to automatically reroute wavelengths between spans without contention, which will further mitigate the capital risk of over-investing in the protection network.
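The contention problem that tunable lasers address can be illustrated with a toy channel-assignment check. This is a sketch under simplified assumptions: channels are numbered integers, the restoration span is described only by which channels are already lit, and both function names are hypothetical.

```python
def restore_fixed(channel: int, in_use: set[int]):
    """A fixed-wavelength transmitter can only reuse its own channel on the new span."""
    return None if channel in in_use else channel


def restore_tunable(in_use: set[int], channels: range):
    """A tunable transmitter can retune to the first free channel on the new span."""
    for ch in channels:
        if ch not in in_use:
            return ch
    return None


# Hypothetical restoration span: 8 channels, with channels 1-3 already lit.
span_channels = range(1, 9)
lit = {1, 2, 3}

# A circuit on channel 3 loses its working span and must move here.
print("fixed transmitter:  ", restore_fixed(3, lit))
print("tunable transmitter:", restore_tunable(lit, span_channels))
```

The fixed transmitter is blocked because its channel is already occupied on the restoration span, while the tunable transmitter retunes to a free channel. That is the sense in which tunability lets carriers share protection capacity rather than hold a wavelength-specific spare for every working channel.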

With optical switches bridging the network layers, incremental channels will be equipped only at the far edge of the network to establish a complete circuit. In the not-too-distant future, establishing a customer's 10-Gbit/sec circuit will involve simply equipping the interfaces at the customer's premises and allowing the optical switches to dynamically route the wavelengths across the network.

The optical network is evolving from a static, segmented architecture to a dynamic, bandwidth-on-demand, integrated infrastructure. Carrier-network management and provisioning systems must be redesigned to incorporate the new intelligence of the optical network. Circuit provisioners will need tools to design and document circuits across this new architecture, as the network itself makes increasingly sophisticated circuit-design decisions.

Today, core networks require all-optical switches that route traffic between core sites without electrical regeneration. The network is evolving to push this all-optical architecture from the core toward the edge and, ultimately, directly to the customer. The question is how long this migration from the core to edge will take. The answer dictates how long carriers will continue to deploy electrical switching in the core.

Eric Prosser is a senior manager of network transport planning at Broadwing Communications (Austin, TX).