A Snapshot of Today's Data Center Interconnect

Now that "DCI" has become a "thing," a well-known acronym with a wide range of connotations, "data center interconnect" has gone from being unknown and somewhat misunderstood to overused and overhyped. But what exactly the term DCI constitutes is changing all the time. What it meant last month might not be what it means in a few weeks' time. With that in mind, let's try to take a snapshot of this rapidly evolving beast. Let's attempt to pin down what DCI currently is and where it's headed.

Power Still Dominates

With rapidly advancing technology, one might be forgiven for thinking that the power situation in data centers is improving. Unfortunately, the growth in the cloud is outpacing advances in power efficiency, keeping power at the top of the list of concerns for data center operators (DCOs). The industry has responded with a two-pronged attack on power: architectural efficiency and technology brevity, that is, short hardware refresh cycles.

Architectural efficiency: It sounds somewhat obvious to say, "Just get more from less," but it can actually be a very powerful concept. Reduce the number of network layers and the amount of processing needed to accomplish a task, and work-per-watt goes up. For example, while some have criticized the proliferation of Ethernet data rates, the truth is that these new intermediate rates are desperately needed. Finer-grained rates that are exact multiples of one another greatly improve processing efficiency and dramatically lower the overall power needed to perform identical amounts of switching and processing.

Throwing it all away: One of the most heavily debated data center myths is that everything gets discarded every 18 months. While admittedly there is some hyperbole to this, there is also a lot of truth. Data centers are not on a rapid update cycle just for bragging rights; simple math drives the process. If you have 50 MW available at a site and cloud growth forces you to double capacity, your options are to either double the size of the site (and hence its power to 100 MW) or replace everything with new hardware that has twice the processing power at the same electrical power. The replaced hardware works its way down the value chain of processing jobs until it is obsolete. Only then, by the way, is it destroyed and recycled.
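The "simple math" above can be written out as a minimal sketch. The `Site` type, the function names, and the idea of measuring capacity in arbitrary units are illustrative assumptions, not anything a DCO actually uses; only the 50 MW site and the doubling of capacity come from the text.

```python
from dataclasses import dataclass


@dataclass
class Site:
    power_mw: float        # electrical power available at the site
    capacity_units: float  # processing capacity, in arbitrary (hypothetical) units


def expand_site(site: Site, growth: float) -> Site:
    """Option A: keep the same hardware and grow the site; power scales with capacity."""
    return Site(site.power_mw * growth, site.capacity_units * growth)


def refresh_hardware(site: Site, efficiency_gain: float) -> Site:
    """Option B: replace hardware with gear doing `efficiency_gain` times the work per watt."""
    return Site(site.power_mw, site.capacity_units * efficiency_gain)


current = Site(power_mw=50.0, capacity_units=1.0)
expanded = expand_site(current, growth=2.0)
refreshed = refresh_hardware(current, efficiency_gain=2.0)

print(f"expand:  {expanded.power_mw:.0f} MW for {expanded.capacity_units:.0f}x capacity")
print(f"refresh: {refreshed.power_mw:.0f} MW for {refreshed.capacity_units:.0f}x capacity")
```

Both options reach twice the capacity, but only the refresh stays inside the original 50 MW envelope, which is the argument for the fast replacement cycle.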

The same logic is driving the push for higher-order modulation schemes in DCI. Each generation of coherent technology is dramatically more power efficient than the last. Just look at the rapid progression of 100-Gbps coherent technology from rack-sized behemoths to svelte CFP2 modules. Cloud growth and power demands are forcing DCOs to be on the bleeding edge of optical transport technology and will continue to do so for the foreseeable future.

Disaggregation — All the Rage

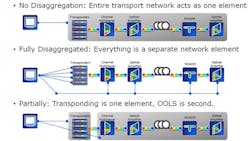

Optical transport systems are a complex mix of transponders, channel multiplexers, optical amplifiers, and more. These systems are traditionally centrally managed with a single controller, with each geographic location representing a single network node. In an attempt to make optical transport products better fit the DCI application, the industry has looked at disaggregating all of the optical transport functions into discretely managed network elements (see Figure 1). However, after several false starts and lab trials, it quickly became evident that fully disaggregating everything would overwhelm the orchestration layer.

Today, DCI disaggregation efforts appear to be converging toward dividing an optical transport network into two functional groups: transponding and optical line system (OLS). The transponding functions typically reside close to the switches, routers, and servers and depreciate on a similarly fast timeline, as short as the fabled 18 months. The optical amplifiers, multiplexers, and supervisory channel functions of the OLS are sometimes separately located from the transponders, closer to the fiber entry and exit of the building. The OLS is also on a much slower technology cycle, with depreciations that can last decades, though 5 to 7 years is more common. Disaggregating the two seems to make a lot of sense.
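To see why the two groups pull apart, it helps to count how many transponder generations a single OLS might host. The sketch below is only an illustration: the function name is hypothetical, and it assumes every transponder generation lasts exactly the 18 months quoted above.

```python
def transponder_generations(ols_life_months: int, transponder_life_months: int = 18) -> int:
    """Count the transponder generations one OLS hosts over its life.

    Includes the generation installed on day one plus every later refresh
    that starts before the OLS is retired (ceiling division).
    """
    return -(-ols_life_months // transponder_life_months)


for years in (5, 7):
    print(f"{years}-year OLS: {transponder_generations(years * 12)} transponder generations")
```

Under these assumptions, a 5-year OLS carries four generations of transponders and a 7-year OLS carries five, so tying the two together in one managed system would force the slower asset onto the faster asset's schedule, or the reverse.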

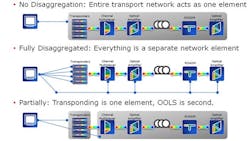

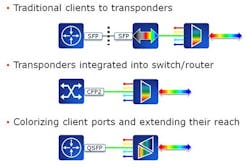

At the risk of oversimplifying DCI, it involves merely the interconnection of the routers/switches within data centers over distances longer than client optics can achieve and at spectral densities greater than a single grey client per fiber pair (see Figure 2). This is traditionally achieved by connecting said client with a WDM system that transponds, multiplexes, and amplifies that signal. However, at data rates of 100 Gbps and above, there is a performance/price gap that continues to grow as data rates climb.

The Definition of "Overkill"

The 100 Gbps and above club is currently served by coherent optics that can achieve distances over 4,000 km with over 256 signals per fiber pair. The best a 100G client can achieve is 40 km, with a signal count of one. The huge performance gap between the two is forcing DCOs to use coherent optics for any and all connectivity. Using 4,000-km transport gear to interconnect adjacent data center properties is a serious case of overkill (like hunting squirrels with a bazooka). And when a need arises, the market responds, in this case with direct-detect alternatives to coherent options.

Direct-detect transmission is not easy at 100-Gbps and higher data rates, as all the fiber impairments that are cancelled out in the digital signal processing chain in coherent detection rear their ugly heads again. But fiber impairments scale with distance in the fiber, so as long as links are kept short enough, the gain is worth the pain.

The most recent example of a DCI direct-detect approach is a PAM4 QSFP28 module that is good for 40-80 km and 40 signals per fiber pair. Besides the great capacity this solution offers over shorter distances, it has the additional benefit of being able to plug into switch/router client ports, instantly transforming them into the WDM terminal portion of a disaggregated architecture. And with all the excitement this new solution has generated, more vendors will soon be jumping into the fray.
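The gap, and where the direct-detect module lands in it, can be sketched as a simple selection rule. This is a rough illustration rather than a planning tool: the `Optic` type and `pick_optic` function are hypothetical, the figures are the round numbers quoted in this article, the upper end of the 40-80 km range is taken as the PAM4 limit, and the coherent figures are treated as fixed limits even though the text gives them as "over" 4,000 km and 256 signals.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Optic:
    name: str
    reach_km: float  # maximum link distance, per the article's round numbers
    channels: int    # signals per fiber pair, per the article's round numbers


# Ordered from least to most capable, so the first match is the least overkill.
OPTIONS = [
    Optic("grey 100G client", reach_km=40, channels=1),
    Optic("PAM4 QSFP28 direct-detect", reach_km=80, channels=40),
    Optic("coherent", reach_km=4000, channels=256),
]


def pick_optic(distance_km: float, channels_needed: int) -> Optional[Optic]:
    """Return the least capable optic that still covers the link, or None."""
    for optic in OPTIONS:
        if distance_km <= optic.reach_km and channels_needed <= optic.channels:
            return optic
    return None  # outside the round numbers assumed here


for distance_km, channels in [(10, 1), (60, 8), (500, 1), (10, 100)]:
    choice = pick_optic(distance_km, channels)
    print(distance_km, "km,", channels, "signals ->", choice.name if choice else "none")
```

Without the middle entry, any link needing more than one signal per fiber pair or more than 40 km falls straight through to coherent, which is the overkill described above.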

Tapping into the Remaining Two-Thirds

While cloud traffic is still growing almost exponentially, most of this traffic is machine-to-machine; the growth in the total number of internet users is actually slowing down. One-third of the planet is now online (depending upon your definition), and now the onus is on the industry to figure out a way to bring online the remaining two-thirds. For that to happen, costs have to drop dramatically. Not subscription costs – networking costs. Even if the internet were totally free (or at least freemium), the costs of delivering connectivity would be prohibitive. A new paradigm is needed in networking, and that paradigm is "openness."

There is a serious misperception of open systems in the optical industry. Openness is not about eroding margins or destroying the value-add of WDM system vendors. It's about tapping into the full manufacturing capacity of our industry.

The current model of vendor lock-in cannot possibly scale to the levels of connectivity needed to reach the remaining two-thirds of the global population. That's why the Telecom Infra Project (TIP) was launched with the aim of bringing together operators, infrastructure providers, system integrators, and other technology companies. The TIP community hopes to reimagine the traditional approach to building and deploying network infrastructure. Only by working together as an industry can we hope to bring the benefits of the digital economy to all.

A Vision of an Open DCI Future

Fiber is forever — an asset planted in the ground with the unique property that its value increases as technology advances. Open optical line systems mated to that fiber create a communication highway that anyone can jump onto and ride. Open terminal systems from any and all vendors are like vehicles riding the highway – varied. And it's that variety, from a wide supply base, that will drive optical communications' continuing growth.

Jim Theodoras is vice president of global business development at ADVA Optical Networking.