Testing Broadband Service Speeds

Interest in high-speed broadband services, specifically 1 Gbps and beyond, continues to grow rapidly. A wide variety of traditional telecom and cable TV service providers, as well as smaller and local internet service providers (ISPs), now offer these services, each with different technologies and deployment strategies. Example access technologies include point-to-point Ethernet, FTTx plus copper, GPON, and DOCSIS 3.1; in some markets, 10-Gbps broadband services are being delivered using 10G-EPON, XGS-PON, or NG-PON2.

Regardless of the technology and deployment strategy used, all service providers face the challenge of proving that their broadband service is being delivered to customers as promised. To meet this challenge, service providers need reliable and repeatable test methodologies and devices to support their service efforts.

Service Assurance

The technologies that enable broadband services at gigabit speeds are great for consumers and for competition among service providers, but supporting them from an operational point of view is key to their success. Consumers can be very proactive when contracting new services. Upon service delivery, they can carry out their own service “tests” using networked devices such as tablets, smartphones, and laptops. Software applications and websites that measure broadband service speeds are easily accessible; one example is Speedtest® by Ookla®, the industry’s de facto standard for measuring internet connection speeds.

In some cases, a problem occurs when the consumer tests their service with an older laptop whose slower CPU cannot achieve 1 Gbps over its copper RJ-45 10/100/1000Base-T interface. Once they see that the laptop measures only 200-300 Mbps on a 1-Gbps service, they can become frustrated and may file an unwarranted complaint with their service provider. This complaint in turn generates a ticket that could turn into a truck roll, an unnecessary operational expense for the service provider.

The above scenario happens more often than you might think. It can be aggravated and become a nightmare for service providers when the service goes beyond 1 Gbps, as there is really no consumer laptop available today with a 10-Gbps interface. For example, the consumer can pay for a 2-Gbps DOCSIS 3.1 service but measure only 1 Gbps with their top-of-the-line video gaming laptop.

For this reason, service providers are starting to go beyond the traditional RFC2544 and ITU-T Y.1564 test suites, which were developed mainly for testing Layer 2 and Layer 3 services, during service turnup. They are adding test methodologies for stateful TCP testing, like RFC6349, and simple download and upload testing to FTP and HTTP servers. All of these methods are TCP-based and help test from a quality of experience (QoE) point of view, which is what the consumer cares about at the end of the day.
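To give a feel for what a stateful TCP methodology reports beyond a raw speed number, here is a minimal Python sketch of three metrics defined in RFC 6349: TCP Efficiency, Buffer Delay, and the TCP Transfer Time Ratio. The function names and the sample inputs are illustrative assumptions, not taken from any particular test set, and a real implementation would gather the byte counts and round-trip times from the TCP stack during the transfer.

```python
def tcp_efficiency_pct(transmitted_bytes: int, retransmitted_bytes: int) -> float:
    """Share of transmitted bytes that did not have to be retransmitted, in percent."""
    if transmitted_bytes <= 0:
        raise ValueError("transmitted_bytes must be positive")
    return (transmitted_bytes - retransmitted_bytes) / transmitted_bytes * 100.0


def buffer_delay_pct(average_rtt_ms: float, baseline_rtt_ms: float) -> float:
    """Increase of the RTT measured during the transfer over the baseline RTT, in percent."""
    if baseline_rtt_ms <= 0:
        raise ValueError("baseline_rtt_ms must be positive")
    return (average_rtt_ms - baseline_rtt_ms) / baseline_rtt_ms * 100.0


def transfer_time_ratio(actual_seconds: float, ideal_seconds: float) -> float:
    """Actual transfer time divided by the ideal transfer time (1.0 is a perfect result)."""
    if ideal_seconds <= 0:
        raise ValueError("ideal_seconds must be positive")
    return actual_seconds / ideal_seconds


# Illustrative inputs only (hypothetical measurements, not results from the article).
efficiency = tcp_efficiency_pct(transmitted_bytes=100_000, retransmitted_bytes=2_000)
buffer_delay = buffer_delay_pct(average_rtt_ms=32.0, baseline_rtt_ms=25.0)
ratio = transfer_time_ratio(actual_seconds=12.0, ideal_seconds=10.0)
```

With these sample inputs, the efficiency works out to about 98%, the buffer delay to about 28%, and the transfer time ratio to 1.2, meaning the transfer took 20% longer than the ideal.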

Testing Strategy

QoE test strategies vary by service provider, as some prefer an RFC6349 approach, while others prefer a Speedtest-type approach. It all depends on the network resources available. Service providers that already have Ookla servers deployed within their footprint would generally opt for the Speedtest by Ookla method, especially if their servers can handle gigabit speeds. Less technical subscribers may also appreciate the simplicity of Speedtest results.

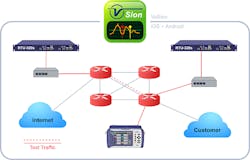

The RFC6349 approach has been preferred by service providers delivering Carrier Ethernet services. Like the Speedtest by Ookla method, which requires servers and field-portable test sets, the RFC6349 approach requires infrastructure within the service provider footprint: either dedicated TCP servers or dedicated rackmount test equipment that can operate as both a TCP server and a TCP client, in addition to carrying out other traditional Ethernet test methodologies like ITU-T Y.1564 and RFC2544 (see Figure 1).

Figure 1. Example of a service provider network with deployed test heads.

Dedicated Test Tools

Since it is the responsibility of service providers to present undeniable proof to their customers that each service meets the service level agreement (SLA) at turnup, they require reliable verification tools. Also, as tablets, smartphones, and low-performing laptops do not always provide reliable results, especially when testing services at 1 Gbps and beyond, field service technicians and engineers require dedicated test tools to deliver accurate proof of service speed to their customers.

Dedicated test tools are designed for specific tasks. Hardware/logic, CPU, and RAM resources are all used exclusively for testing a specific application: RFC6349 (stateful TCP), Speedtest by Ookla, FTP upload/download, ITU-T Y.1564, etc. These dedicated hardware and software resources help provide repeatability and reliability in the testing methodology and procedures. This is important during the installation and delivery of new services. In addition, these tools provide the required physical interfaces to test the services: 10/100/1000Base-T, 1000Base-X, 10GBase-X, and DOCSIS 3.0 and 3.1.

Comparison tests conducted in the field with a dedicated test tool and software-based clients yielded interesting results that help make the case for dedicated hardware-based instruments for Layer 4-7 testing. Similar tests were performed in a controlled environment in the lab. The test results are presented below.

The devices under test were the following: hardware-based dedicated test set, laptop 1, and laptop 2. Note: Laptop 1 and laptop 2 had the same RAM and CPU speeds, but different operating systems. All three devices had a 1-Gbps port.

The test setup: One high-performance server running TCP server and HTTP server applications. Each device under test was connected directly to the server during testing. For the TCP throughput test, one additional run was carried out between two dedicated test sets (see Figure 2).

Figure 2. Comparison test setup.

The simple tests carried out in the lab show that dedicated test tools that perform TCP-based application performance testing can produce consistent and reliable results when the implementation is completed in hardware (FPGAs), compared to alternatives that depend on CPU speed, RAM, and operating system performance. A further round of testing placed network impairment equipment in line between the device under test and the server. This equipment can introduce impairments such as packet delay, jitter, and packet loss. The throughput of TCP-based applications depends on several factors, such as window size, round-trip time (delay), and buffer size.
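The dependence on window size and round-trip time can be illustrated with the standard bandwidth-delay product arithmetic: a TCP sender can have at most one window of data in flight per round trip, so throughput is capped at window size divided by RTT. The Python sketch below is only a back-of-the-envelope model under stated assumptions. The 65,535-byte window (the largest possible without TCP window scaling) is a hypothetical value chosen for illustration, not a setting measured on the devices in these tests, and the function names are made up for this example.

```python
def bdp_bytes(link_bps: float, rtt_seconds: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to keep the link full."""
    return link_bps * rtt_seconds / 8.0


def window_limited_throughput_bps(window_bytes: int, rtt_seconds: float,
                                  link_bps: float) -> float:
    """Upper bound on TCP throughput: one window per round trip, capped at link rate."""
    if rtt_seconds <= 0:
        return link_bps  # no measurable delay, so only the link rate limits throughput
    return min(link_bps, window_bytes * 8.0 / rtt_seconds)


LINK_BPS = 1_000_000_000   # 1-Gbps service
WINDOW_BYTES = 65_535      # assumption: fixed window, no window scaling

# Bytes needed in flight to fill a 1-Gbps link at 1 ms of round-trip delay.
needed_at_1ms = bdp_bytes(LINK_BPS, 0.001)

# Throughput ceilings for the assumed window at 1 ms and 10 ms of round-trip delay.
ceiling_1ms = window_limited_throughput_bps(WINDOW_BYTES, 0.001, LINK_BPS)
ceiling_10ms = window_limited_throughput_bps(WINDOW_BYTES, 0.010, LINK_BPS)
```

Under these assumptions, filling a 1-Gbps link at 1 ms requires 125,000 bytes in flight, while the 65,535-byte window tops out at roughly 524 Mbps at 1 ms and roughly 52 Mbps at 10 ms. A client whose window or buffers do not grow with the delay therefore loses throughput quickly, regardless of the service speed.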

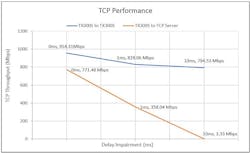

When a 1-ms delay was introduced, the test set’s TCP and HTTP throughput dropped by less than 10%. However, the TCP and HTTP throughput of the laptops dropped by more than 50%. The performance of the TCP server also dropped significantly. This substantial difference in performance shows that the hardware/FPGA-based approach, which does not depend on CPU speed, RAM, or operating system, is more resilient and reliable in broadband service testing of 1 Gbps and beyond (see Figure 3).

Figure 3. TCP Throughput Performance vs. Delay (0 ms, 1 ms, 10 ms)

Based on the tests conducted in the lab and similar results seen in the field, the need for dedicated test tools for TCP-based applications is clear when testing broadband services of 1 Gbps and beyond. Most laptops and tablets with software-based clients can only reach a certain level of reliable throughput performance. None of them can verify SLAs for broadband services at or beyond 1 Gbps as reliably as dedicated hardware/FPGA-based instruments do. Service providers need to take this into account as they enter the gigabit services market.

Ricardo Torres is director of product marketing and a founding member of VeEX. He leads the product marketing, strategic positioning, and product management of VeEX’s Ethernet portfolio. He is also responsible for the company’s global business initiatives encompassing Carrier Ethernet/IP networks, mobile backhaul, 40GbE/100GbE, and Ethernet synchronization technologies. Prior to joining VeEX, Ricardo worked at Sunrise Telecom, where he was responsible for managing the Ethernet/IP portfolio. He has also worked at Agilent Technologies in the area of high-speed fiber-optic networking.