Four challenges to carrier-grade network functions virtualization

Network functions virtualization (NFV) represents a core structural change in telecommunication infrastructure deployment. This in turn means significant changes in the delivery of applications to service providers. NFV will bring cost efficiencies, time-to-market improvements, and innovation to the telecommunication industry.

Specifically, NFV promises four important benefits:

- Flexibility: Service providers looking to quickly deploy new services require a much more flexible and adaptable network -- one that can be easily and quickly installed and provisioned.

- Cost: Cost is a top consideration for any operator or service provider these days, even more so now that they see Google and others deploying massive data centers using off-the-shelf merchant silicon (commoditized hardware) as a way to drive down cost. Cost is also reflected in opex -- how easy it is to deploy and maintain services in the network.

- Scalability: To adapt quickly to users' changing needs and provide new services, operators must be able to scale their network architecture across multiple servers, rather than being limited by what a single box can do.

- Security: Security has been, and continues to be, a major challenge in networking. Operators want to be able to provision and manage the network while allowing their customers to run their own virtual space and firewall securely within the network.

These benefits will be realized only if the industry’s traditional approach to application delivery changes from a closed, proprietary, and tightly integrated stack model to an open, layered model, where applications are hosted on a shared, common infrastructure base. Telecom vendors are currently developing proofs-of-concept for moving existing network functions to virtualized infrastructure to aid this transition.

Many aspects come into play when the different network functions are deployed in virtualized infrastructure. Just as there are four main benefits to NFV, there are four significant challenges operators can expect to encounter during the implementation and deployment phase.

1. Meeting carrier-grade availability requirements

Present-day carrier network infrastructure provides reliable service and meets the availability requirement of five 9s (99.999%). Existing high-capacity servers are designed for IT services and enterprise-class applications, with availability on the order of two or three 9s. On their own, standard off-the-shelf servers in an NFV infrastructure will not be able to meet carrier-grade availability expectations.
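To make the gap concrete, the number of 9s can be converted into expected downtime per year. The short Python sketch below does that arithmetic; the function names are illustrative, and the calculation assumes a 365.25-day year.

```python
# Convert "number of nines" into availability and yearly downtime.
# Assumption: a year of 365.25 days (525,960 minutes).
MINUTES_PER_YEAR = 365.25 * 24 * 60


def availability_from_nines(nines: int) -> float:
    """Return availability as a fraction, e.g. 3 nines -> 0.999."""
    return 1.0 - 10.0 ** (-nines)


def downtime_minutes_per_year(nines: int) -> float:
    """Return the expected unavailable minutes per year for the given nines."""
    return 10.0 ** (-nines) * MINUTES_PER_YEAR


for n in (2, 3, 5):
    minutes = downtime_minutes_per_year(n)
    print(f"{n} nines: about {minutes:,.1f} minutes of downtime per year")
```

Under this arithmetic, five 9s allows roughly 5 minutes of downtime a year, three 9s nearly 9 hours, and two 9s about 3.7 days.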

In the traditional high-availability architecture, platform redundancy is used to avoid a single point of failure, and all hardware and software components are hardened to prevent failures. Application-specific state replication is implemented to ensure continuity of operations.

NFV architecture addresses the high-availability challenge with an alternative technological approach. Agile life cycle management allows just-in-time creation of virtual machines (VMs) to host the virtual network functions (VNFs) in the event of software or hardware failure. The NFV availability approach relies on spawning new instances on existing or additional servers. This is quite different from the traditional high-availability architecture used in telecom systems but nevertheless provides a robust and effective way to close the carrier-grade availability gap.
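One way to see why fast respawning can help close the gap is the standard steady-state availability relation, availability = MTTF / (MTTF + MTTR). The sketch below applies it. The 1,000-hour mean time to failure and both recovery times are hypothetical figures chosen only for illustration, not measurements, and MTTR here is assumed to cover failure detection plus the time to spawn a replacement instance.

```python
def steady_state_availability(mttf_hours: float, mttr_hours: float) -> float:
    """Availability = MTTF / (MTTF + MTTR), both expressed in hours."""
    return mttf_hours / (mttf_hours + mttr_hours)


def max_recovery_seconds(mttf_hours: float, target_availability: float) -> float:
    """Solve the same relation for MTTR and return it in seconds."""
    mttr_hours = mttf_hours * (1.0 - target_availability) / target_availability
    return mttr_hours * 3600.0


MTTF_HOURS = 1000.0  # hypothetical server-level mean time to failure

manual_repair = steady_state_availability(MTTF_HOURS, mttr_hours=4.0)
auto_respawn = steady_state_availability(MTTF_HOURS, mttr_hours=30.0 / 3600.0)

print(f"4-hour manual repair:  {manual_repair:.6f}")
print(f"30-second VM respawn:  {auto_respawn:.6f}")
print(f"Recovery budget for five 9s: "
      f"{max_recovery_seconds(MTTF_HOURS, 0.99999):.1f} seconds")
```

With these assumed numbers, a four-hour manual repair leaves the service below three 9s, while a 30-second automated respawn keeps it above five 9s. The model covers only time to recover; any application state lost during the failure still has to be handled separately.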

2. Limitations of existing network applications

Most legacy systems are designed with the assumption that the network application has exclusive access to the hardware resources (CPU, NIC, disk). Resources such as the board support package (BSP) and hardware accelerators are directly controlled by the application.

Examples of possible issues:

- Internal task interaction of real-time applications assumes direct control of hardware resources. Sharing of the hardware platform with other applications would cause some performance degradation.

- Existing network applications are mostly scalable; however, their architecture may not support dynamic scaling in response to traffic changes. The load distribution algorithm is typically static and assumes all the installed physical servers are available for use, resulting in under-utilization of each server.

To resolve this gap, NFV systems should implement improvements in the virtualization layer itself and in the applications running on top of it:

- The virtualization hypervisor should provide strong isolation between virtual machines and balance hardware resources among the applications running on top of the virtualization layer.

- Applications should be re-engineered to allow dynamic use of the virtual infrastructure to reach full utilization of hardware resources.
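The difference between static and dynamic load distribution can be sketched with a simple sizing rule: run only as many instances as the current load requires at a chosen target utilization. The Python below is a minimal illustration, not a real orchestrator API; the function names, the 70% target, and the traffic figures are all assumptions.

```python
import math


def instances_needed(offered_load: float,
                     capacity_per_instance: float,
                     target_utilization: float = 0.7,
                     min_instances: int = 1) -> int:
    """Return how many instances keep each one at or below the target utilization."""
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    if not 0.0 < target_utilization <= 1.0:
        raise ValueError("target_utilization must be in (0, 1]")
    usable_capacity = capacity_per_instance * target_utilization
    return max(min_instances, math.ceil(offered_load / usable_capacity))


def utilization(offered_load: float, instances: int,
                capacity_per_instance: float) -> float:
    """Return the average utilization when load is spread evenly over the instances."""
    return offered_load / (instances * capacity_per_instance)


# Hypothetical figures: 10 installed servers, 1,000 sessions/s each,
# and an off-peak load of 2,000 sessions/s.
LOAD, CAPACITY, INSTALLED = 2000.0, 1000.0, 10

static_util = utilization(LOAD, INSTALLED, CAPACITY)
dynamic_count = instances_needed(LOAD, CAPACITY)
dynamic_util = utilization(LOAD, dynamic_count, CAPACITY)

print(f"Static:  {INSTALLED} servers at {static_util:.0%} utilization")
print(f"Dynamic: {dynamic_count} instances at {dynamic_util:.0%} utilization")
```

In this example the static scheme spreads the load thinly over all ten servers, while the dynamic rule runs three instances and leaves the remaining capacity free for other functions.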

3. Management of virtualized infrastructure and network applications

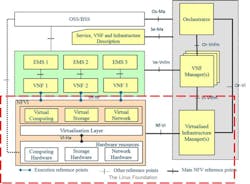

The management system of traditional networks typically consists of multiple element managers that report to a network management system (NMS). Each network element is associated with at least one element manager. Existing element managers assume tight coupling between the network element and the platform itself.

Existing management systems treat the operational state of the underlying platform as identical to that of the network function. The topological view shows network elements and network functions in their physical form, as a hierarchy of hardware modules, rather than showing the network functions as overlaid on the physical infrastructure.

To resolve this gap, NFV management systems should enable provisioning and monitoring of both the physical platform and the virtual machines running on top of the virtualization layer. The topology view of network elements should focus on presenting logical network functions rather than physical hardware status.
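A minimal data-model sketch can illustrate this decoupling: the state of a logical network function is derived from its VM instances, while the physical host is just an attribute used for overlay queries. The class and field names below are hypothetical and do not correspond to any particular management system.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class State(Enum):
    UP = "up"
    DEGRADED = "degraded"
    DOWN = "down"


@dataclass
class VmInstance:
    name: str
    host: str       # physical server the VM currently runs on
    running: bool


@dataclass
class NetworkFunction:
    name: str
    instances: List[VmInstance] = field(default_factory=list)

    def state(self) -> State:
        """Derive the logical state from the VM instances, not from any host."""
        running = sum(1 for vm in self.instances if vm.running)
        if running == 0:
            return State.DOWN
        if running < len(self.instances):
            return State.DEGRADED
        return State.UP


def functions_on_host(functions: List[NetworkFunction], host: str) -> List[str]:
    """Overlay query: which logical functions have an instance on this host?"""
    return [f.name for f in functions if any(vm.host == host for vm in f.instances)]


firewall = NetworkFunction("firewall", [
    VmInstance("fw-1", host="host-a", running=False),  # host-a has failed
    VmInstance("fw-2", host="host-b", running=True),
])
print(firewall.name, firewall.state().value)
print(functions_on_host([firewall], "host-a"))
```

Here the failure of one host degrades the firewall function but does not mark it as down, which is the distinction a platform-coupled element manager would miss.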

4. Integration and testing challenges

Traditionally, different network applications were deployed as distinctly visible entities with well-defined interface reference points. Integration tools such as protocol analyzers and data probes rely on these controllable and observable reference points, and most of them assume easy access to the interfaces.

However, in the NFV model, one or more network functions related to an end-to-end service may be assigned to a high-end hardware resource, and access to the reference point would be restricted.

Another challenge is testing system performance and stability in the lab. It is impossible to create an environment equivalent to the actual deployment, as other applications sharing the same physical hardware are either unknown or cannot be instantiated.

To resolve this gap, it is possible to configure the virtual switch in the virtualization layer to forward all the traffic to the physical NIC to facilitate the tapping of the interface reference point. In addition, to resolve the system performance challenge, test engineers should develop new test techniques to emulate the impact of third-party applications running in parallel to the vendor application.
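As one possible starting point for such emulation, a synthetic "noisy neighbor" can be run alongside the application under test. The Python sketch below generates a configurable CPU load using a duty cycle; it is an assumption about how a test might be built, it covers CPU contention only (not cache, memory, or I/O), and the function name and parameters are illustrative.

```python
import time


def burn_cpu(duration_s: float, duty_cycle: float, period_s: float = 0.1) -> float:
    """Busy-loop for `duty_cycle` of each period and sleep for the rest.

    Returns the total number of seconds spent busy.
    """
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    busy_total = 0.0
    end = time.monotonic() + duration_s
    while time.monotonic() < end:
        start = time.monotonic()
        busy_until = start + period_s * duty_cycle
        while time.monotonic() < busy_until:
            pass  # spin to keep one CPU core occupied
        busy_total += time.monotonic() - start
        idle = period_s * (1.0 - duty_cycle)
        if idle > 0:
            time.sleep(idle)
    return busy_total


# Emulate a neighbor that keeps one core about half busy for half a second.
busy = burn_cpu(duration_s=0.5, duty_cycle=0.5)
print(f"Neighbor load kept a core busy for about {busy:.2f} seconds")
```

Running several such generators in separate processes, pinned to the cores shared with the application under test, would approximate heavier contention. The duty cycle can then be swept to see where the application's performance starts to degrade.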

Summary

In the last few years network virtualization has captured the attention of carriers worldwide. NFV has been making waves as it represents the evolution of networking, promising to virtualize physical network infrastructure and create an environment that’s more adaptable than the legacy systems currently in use. Despite all challenges and issues, NFV is here to stay.

Avi Dorfman is vice president, R&D at Telco Systems. He has more than 20 years of experience in the development and delivery of telecommunications systems. Avi was the vice president, R&D and CTO for ASIS, which develops ATCA systems; vice president, R&D of Zlango, a startup that developed an innovative mobile messaging service; and vice president, R&D of Radvision/Avaya Technology Business Unit, specializing in voice and video over IP technologies. Prior to these roles, Avi worked for many years as the VoIP project development director in Comverse and system architect in several organizations.