High-tech video testing methods come of age

Today’s traditional telecommunications providers are in a race to provide multiple services to business and residential customers. One of the keys to delivering quality voice, data, and video services is the ability to test every layer of the new network. This requirement is important not only during deployment, but also once the network is turned up; providers need to monitor or proactively test the network on a routine basis to ensure problems are identified and dealt with before customers begin to complain.

Arguably the most difficult and complex part of the triple-play service bundle to provide and monitor is video. Although service providers may be very familiar with the overall notion of network testing, everything becomes much more complicated when migrating to high-performance services such as high-definition television (HDTV) and video on demand (VoD).

The bandwidth affordability offered by fiber, DWDM, and Gigabit Ethernet (GbE) has not been lost on the cable TV providers. In fact, they are welcoming optical architectures as a means to deliver quality VoD services at lower cost. The idea is to minimize or eliminate the regional servers while obtaining the bandwidth required to transport video quickly to the edge of their cable network and out to the end users. But sending MPEG video streams over optical fiber and GbE introduces a whole new set of potential problems to both cable TV and telephone-company providers.

Current test methods can ensure that IP packets get to their destination at the right time, but in the world of VoD, for instance, technicians must also be concerned with exactly what each packet contains, and that requires testing at the MPEG level. As video data traverses the network and is manipulated at its many layers and paths, only proper testing at all levels ensures the video service will perform as advertised at its destination.

The typical network used to deliver high-end video services involves a multiple-layer architecture. Fiber is at the lowest layer, with DWDM technology dividing the network into individual wavelengths. Each wavelength or channel uses GbE as a transport protocol. Figure 1 shows a typical GbE transmission layout for VoD from the headend to the hubs. The Ethernet layer is further broken down into IP streams and again divided into user datagram protocol (UDP) ports. Finally, each UDP port contains an MPEG-2 transport stream, typically with up to 16 MPEG programs. If a problem occurs with video and MPEG, any lower layer could be suspect. Service providers therefore have the difficult task of managing a very complex multilayer network against any number of potential problems in any number of network layers.

Most phone companies and cable TV operators are familiar with testing the physical layer of the optical network using an optical time-domain reflectometer (OTDR), among other equipment, to check the fiber and dispersion. They’re also familiar with using an optical spectrum analyzer (OSA) to check DWDM signals, as well as specialized equipment for looking into the GbE layer or IP layer, and even for viewing the UDP statistical data. But there is one more piece of the testing puzzle necessary for a complete solution: the ability to look directly at individual MPEG streams.

Truly testing the video transport system involves getting inside the GbE frame and checking the MPEG contents. A complete video testing approach tests the fiber for issues associated with loss and dispersion, the GbE layer for performance parameters such as throughput and latency, and the actual video content to ensure it arrives on time and uncorrupted.
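To make "getting inside the GbE frame" concrete, the sketch below shows one way a test tool might split a UDP payload into MPEG-2 transport stream packets and read each packet's identifier (PID). It assumes the transport stream is carried directly in UDP with no RTP header, and the function names, including the `make_test_packet` helper that builds synthetic packets, are hypothetical and used only for illustration.

```python
TS_PACKET_SIZE = 188   # MPEG-2 transport stream packets are 188 bytes long
SYNC_BYTE = 0x47       # every transport stream packet starts with this byte


def split_ts_packets(udp_payload: bytes) -> list:
    """Split a UDP payload into 188-byte TS packets, checking each sync byte.

    Assumes raw TS-over-UDP (no RTP header in front of the first packet).
    """
    if len(udp_payload) % TS_PACKET_SIZE != 0:
        raise ValueError("payload length is not a multiple of 188 bytes")
    packets = []
    for offset in range(0, len(udp_payload), TS_PACKET_SIZE):
        packet = udp_payload[offset:offset + TS_PACKET_SIZE]
        if packet[0] != SYNC_BYTE:
            raise ValueError(f"missing sync byte at offset {offset}")
        packets.append(packet)
    return packets


def packet_pid(packet: bytes) -> int:
    """Return the 13-bit PID stored in bytes 1 and 2 of the TS header."""
    return ((packet[1] & 0x1F) << 8) | packet[2]


def make_test_packet(pid: int, counter: int = 0) -> bytes:
    """Hypothetical helper: build a payload-only TS packet filled with zeros."""
    header = bytes([SYNC_BYTE, (pid >> 8) & 0x1F, pid & 0xFF,
                    0x10 | (counter & 0x0F)])
    return header + bytes(TS_PACKET_SIZE - len(header))


# Seven TS packets (1,316 bytes) fit in one datagram on a standard Ethernet MTU.
demo_payload = b"".join(make_test_packet(0x100, i) for i in range(7))
demo_pids = [packet_pid(p) for p in split_ts_packets(demo_payload)]
```

A real analyzer would also have to resynchronize after a bad sync byte rather than raise an error; the strict check here simply keeps the example short.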

For example, one of the more important measurements within the MPEG layer is program clock reference (PCR) timing, and the associated problem is known as PCR jitter. Since many technicians supporting the new video networks are more in tune with SONET than with MPEG characteristics, PCR jitter might be confusing. SONET jitter typically refers to a clock variation. PCR jitter deals specifically with MPEG and refers to the variation in the inter-arrival time of the packets. The MPEG PCR jitter problem is exacerbated with GbE because delivery over IP is essentially unconstrained in timing.
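As a rough illustration of this measurement, the following sketch reads the PCR from a transport stream packet's adaptation field and compares the time elapsed between packet arrivals with the time elapsed according to the PCR values. The function names are hypothetical, PCR wraparound is ignored, and the arrival timestamps are assumed to come from the capture hardware; commercial analyzers use more elaborate measurement models.

```python
PCR_CLOCK_HZ = 27_000_000  # the PCR counts ticks of a 27 MHz system clock


def extract_pcr(packet: bytes):
    """Return the PCR in 27 MHz ticks, or None if the packet carries no PCR."""
    has_adaptation_field = packet[3] & 0x20
    if not has_adaptation_field or packet[4] == 0:
        return None          # no adaptation field, or an empty one
    if not packet[5] & 0x10:
        return None          # PCR_flag is not set
    b = packet[6:12]
    # 33-bit base (90 kHz units), 6 reserved bits, 9-bit extension (27 MHz units)
    base = (b[0] << 25) | (b[1] << 17) | (b[2] << 9) | (b[3] << 1) | (b[4] >> 7)
    extension = ((b[4] & 0x01) << 8) | b[5]
    return base * 300 + extension


def pcr_jitter_seconds(samples):
    """Compare arrival spacing with PCR spacing for consecutive PCR packets.

    samples: list of (arrival_time_seconds, pcr_ticks) in arrival order.
    Returns one value per interval: arrival delta minus PCR delta, in seconds.
    A stream delivered with perfectly steady timing yields values near zero.
    """
    jitter = []
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        pcr_delta = (p1 - p0) / PCR_CLOCK_HZ
        jitter.append((t1 - t0) - pcr_delta)
    return jitter
```

For instance, if two PCR values are 40 ms apart but the packets carrying them arrive 42 ms apart, the interval shows 2 ms of delay variation that the receiver's buffer and clock recovery must absorb.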

Unlike an obvious synchronization problem between video and audio that is easily identified, many video problems are inherently much more subtle. In a digital world, it’s no longer a matter of simply looking at the video on a screen and seeing a problem occur. With MPEG and digital TV, it’s often impossible to tell if the system is working properly without performing tests. Therefore, it’s important to incorporate tests that can constantly monitor MPEG video to identify problems at their earliest stages.

Although MPEG timing problems are easy to identify with an MPEG analyzer, they are invisible to anything else. That makes it critical for providers to monitor or routinely test this layer, particularly since the problem could originate at almost any other point in the network where testing may not be performed unless a problem is actually detected.

Testing the video portion of the triple-play network is unique because video has very different characteristics within the network. In pure data networks, for example, technicians don’t have to be overly concerned with latency or how consistently the data packets arrive; what matters is that they arrive and are eventually processed. For voice and video, some frame loss is tolerable, but the actual receive rate of the packets becomes much more critical for video services. They must arrive at a constant rate. If the packets come in very sporadically, very large buffers would be required in the receivers to correct any variation and assist in streaming the packets out at a very constant rate. MPEG was designed to be unidirectional, and therefore a constant receive rate is crucial to achieve the highest-quality video with economical buffers in the receiving equipment.
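The link between arrival variation and buffer size can be estimated with simple arithmetic. As a first-order sketch, a receiver that drains a stream at a constant bit rate needs to hold roughly the bit rate multiplied by the peak-to-peak arrival variation. The function name and the example figures below are illustrative assumptions, not values taken from any particular receiver.

```python
import math


def dejitter_buffer_bytes(bitrate_bps: int, jitter_ms: int) -> int:
    """First-order estimate of the buffer needed to absorb arrival variation.

    bitrate_bps: constant rate at which the decoder consumes the stream.
    jitter_ms:   peak-to-peak variation in packet arrival time, in milliseconds.
    """
    bits_to_hold = bitrate_bps * jitter_ms / 1000
    return math.ceil(bits_to_hold / 8)


# Example: a 3.75 Mbit/s program with 50 ms of peak-to-peak arrival variation.
example_bytes = dejitter_buffer_bytes(3_750_000, 50)
```

The estimate grows linearly with both bit rate and jitter, which is why sporadic delivery of high-bit-rate services such as HDTV quickly becomes expensive to correct in the receiving equipment.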

There are modular test units available today for testing the entire network, from fiber to GbE. If the technician needs to test the fiber itself, an OTDR module is plugged into the unit. For testing DWDM signals, an OSA module can be applied. Dispersion modules are available to test the network for dispersion problems. GbE modules can test for frame loss, latency, or correct throughput.

With this new requirement for testing MPEG video, the GbE test sets being employed for these networks should also evolve to include MPEG testing. That will enable technicians not only to test the GbE frames from a particular switch or port for frame errors, but also to filter out specific MPEG streams and feed them to the MPEG test software for analysis.
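A minimal sketch of that filtering step follows: it selects transport stream packets by PID and then checks the 4-bit continuity counter in each header, which is one basic way analysis software can detect lost packets within a stream. The function names are hypothetical, and the check is simplified; it ignores the permitted duplicate-packet case and the discontinuity indicator that a full analyzer would honor.

```python
NULL_PID = 0x1FFF  # null (stuffing) packets carry no meaningful counter


def ts_pid(packet: bytes) -> int:
    """Return the 13-bit PID from a 188-byte transport stream packet."""
    return ((packet[1] & 0x1F) << 8) | packet[2]


def filter_by_pid(packets, wanted_pids):
    """Keep only the packets whose PID belongs to the streams under test."""
    return [p for p in packets if ts_pid(p) in wanted_pids]


def count_continuity_errors(packets) -> int:
    """Count gaps in the per-PID continuity counter (simplified check)."""
    last_counter = {}
    errors = 0
    for packet in packets:
        pid = ts_pid(packet)
        has_payload = packet[3] & 0x10
        if pid == NULL_PID or not has_payload:
            continue  # the counter only advances on packets carrying payload
        counter = packet[3] & 0x0F
        if pid in last_counter and counter != (last_counter[pid] + 1) % 16:
            errors += 1
        last_counter[pid] = counter
    return errors
```

In use, a test set would pass the packets recovered from a selected UDP port through `filter_by_pid` and report a nonzero result from `count_continuity_errors` as a sign of loss somewhere upstream.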

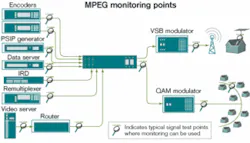

Technicians can now quickly identify MPEG problems even before they become visible on the video screen. Using standard test methodology at various testing locations (see Figure 2), they can then move back through the network to locate the source of the problem at any layer and check for any number of causes: attenuation, dispersion, laser drift in a particular wavelength, nonlinearity, and a host of other possible culprits. Monitoring points throughout the headend signal path will enable MPEG monitoring to track down the source of any video problem.

Aaron Deragon is director of product management at NetTest (Utica, NY; www.nettest.com).

Rich Chernock is a senior member of the technical staff at Triveni Digital (Princeton Junction, NJ; www.trivenidigital.com).

The authors would like to acknowledge three contributors to this article: Larry Crossett of NetTest and Rich Kaye and Dinkar Bhat of Triveni.