Building a data-center network with optimal performance and economy

East-west flows are dominating data-center traffic, but optical circuit switching with new controller technology can deliver significant improvements in performance.

By DANIEL TARDENT and NATHAN FARRINGTON

Modern data centers are the new network battlefield, where exploding traffic demands are pushing conventional networking technologies to their absolute performance limits while cost constraints continue to escalate. Nowhere is this more true than in hyper-scale data centers; here, mobile data, video, and social media applications are driving the construction of facilities up to a million square feet or more with vast power consumption and cooling demands.

In this emerging world of data powerhouses, network designers strive to link servers and storage with network technology that offers an optimum mix of low cost, low latency, high performance, and energy efficiency. Research and development toward these goals within industry and academia has sparked several significant advancements. These developments include software-defined networking (SDN), network functions virtualization (NFV), the availability of low-cost merchant-silicon-based networking hardware coupled with open source software stacks, and new system architectures such as the Open Compute Project and Intel's Rackscale.

Alongside these advances, a new hybrid packet-optical data-center network architecture has emerged with the potential to dramatically reduce capital and operational expenditures while enabling data-center networks to scale to support their growing bandwidth demands.

Recent data-center trends show bandwidth requirements are growing 25%-35% per year, a rate predicted to continue years into the future. Cloud data centers sit at the high end of this growth curve with many already evolving from 10 Gbps to 40 Gbps in their aggregation networks. Gartner projects sales revenue for 40 Gigabit Ethernet switch ports to grow 75% per year through 2017, with port shipments expected to increase 107% during the same period.
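To put the 25%-35% annual growth figure in perspective, the short Python sketch below converts a compound annual growth rate into a doubling time. The function name is ours, chosen for illustration, and the calculation assumes the rate stays constant from year to year.

```python
import math

def doubling_time_years(annual_growth_rate):
    """Years for demand to double at a constant compound annual growth rate.

    annual_growth_rate is a fraction, e.g. 0.25 for 25% per year.
    """
    if annual_growth_rate <= 0:
        raise ValueError("growth rate must be positive")
    return math.log(2) / math.log(1 + annual_growth_rate)

# The two ends of the range quoted in the article.
for rate in (0.25, 0.35):
    print(f"{rate:.0%} per year -> doubles in {doubling_time_years(rate):.1f} years")
```

At these rates, bandwidth demand doubles roughly every 2.3 to 3.1 years.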

But more interesting than the rate of bandwidth growth are the location and nature of this traffic. It turns out that 75% or more of the traffic is within the data center, meaning east-west traffic between racks. This situation derives from the functional separation of application servers, storage, and databases, which generates replication, backup, and read/write traffic traversing the data center. Other major contributing factors to east-west traffic are server virtualization and parallel processing in applications like Hadoop.

A problem to solve

Rapid growth in internal data-center traffic brings with it a serious set of challenges, not the least of which is the cost to deploy large amounts of additional capacity in the aggregation networks that connect top-of-rack switches (ToRs). This challenge becomes particularly acute as interface rates scale to 40 Gbps.

A second challenge is that modern cloud-scale data centers must support a variety of traffic patterns, including traditional short-lived "mouse" flows and more persistent "elephant" flows. Mouse flows are typically associated with bursty, latency-sensitive applications; elephant flows include traffic from virtual-machine migrations, backup and data migrations, and Big Data applications such as MapReduce/Hadoop. Typically, the majority of flows within a data center are mouse flows, but the majority of data belong to a few elephant flows.
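The mouse/elephant distinction can be illustrated with a minimal Python sketch. The `Flow` record, the byte and duration thresholds, and the sample traffic are all assumptions made for illustration; real deployments would tune such thresholds to their own traffic.

```python
from dataclasses import dataclass

@dataclass
class Flow:
    src: str
    dst: str
    bytes_sent: int
    duration_s: float

# Hypothetical thresholds, chosen only for this example.
ELEPHANT_MIN_BYTES = 100 * 1024 * 1024   # 100 MiB
ELEPHANT_MIN_DURATION_S = 10.0

def is_elephant(flow):
    """A flow counts as an elephant if it is both large and persistent."""
    return (flow.bytes_sent >= ELEPHANT_MIN_BYTES
            and flow.duration_s >= ELEPHANT_MIN_DURATION_S)

def summarize(flows):
    """Return the elephants' share of flow count and of total bytes."""
    if not flows:
        return {"elephant_flow_share": 0.0, "elephant_byte_share": 0.0}
    elephants = [f for f in flows if is_elephant(f)]
    total_bytes = sum(f.bytes_sent for f in flows)
    elephant_bytes = sum(f.bytes_sent for f in elephants)
    return {
        "elephant_flow_share": len(elephants) / len(flows),
        "elephant_byte_share": elephant_bytes / total_bytes if total_bytes else 0.0,
    }

# Made-up sample: nine short request/response flows and one bulk transfer.
sample = [Flow("rack1", "rack2", 10_000, 0.05) for _ in range(9)]
sample.append(Flow("rack3", "rack4", 1_000_000_000, 60.0))
print(summarize(sample))
```

In this made-up sample, one flow in ten is an elephant, yet it carries nearly all of the bytes, which mirrors the pattern described above.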

Highly persistent TCP flows tend to fill network buffers end-to-end, which adds significant queuing delay to the mouse flows that share these buffers. In a network that carries both, the latency-sensitive mouse flows therefore suffer collateral damage from the elephant flows. Such elasticity in bandwidth demand requires both network- and application-level control, so that relative priorities and traffic flows (both short-lived and persistent) can be handled and routed appropriately within the network without expensive over-provisioning.

Hybrid packet-optical circuit networks provide answers

These challenges were foreseen by researchers more than 10 years ago and inspired a number of significant research efforts in both industry and academia. In 2010, two groundbreaking papers were presented at the SIGCOMM conference in New Delhi.

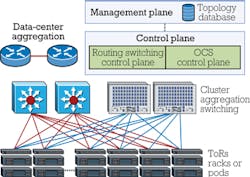

The "Helios" and "C-Through" papers each described a hybrid approach to data-center networking in which 3D MEMS optical circuit switches augmented conventional Layer 2/3 switches to dramatically scale capacity and lower cost. In such networks (see Figure 1), traffic flows that are both high capacity and highly persistent are recognized and flagged. Then they are rerouted and offloaded from the packet network to the optical circuit-switched (OCS) network. Thus, both elephant and mouse flows can be handled efficiently with a low cost network approach.

A secondary but still significant benefit of the hybrid-network approach is that the power consumption of the OCS component is two orders of magnitude lower than that of an equivalent amount of packet-switching capacity. The work from Helios and C-Through was recognized immediately by at least one very large cloud data-center operator, which then designed and implemented a variation of the hybrid concept in its hyper-scale facilities.

Managing, controlling the network

Combining packet- and circuit-based networks means there are now two independent switch fabrics. There has to be a way to move flows of data between the networks based on overall data-center traffic patterns and the specific demands of some applications.

For the hybrid network to function, a management and control plane is needed to monitor the data-center traffic matrix and the specific flow requirements and priorities of applications. Together with a historical view of traffic patterns, the management and control plane opens and closes connections between ToRs through the optical circuit switches and updates the routing tables in the packet-network devices to provide an optimal best-fit network in real time.

In this way, highly persistent elephant flows, when detected, can be offloaded from the packet network to the OCS network. This action facilitates faster delivery of the flow while freeing the packet network to focus on handling the short-lived flows it services most effectively.

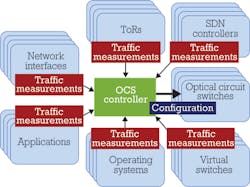

The Helios research project at UC San Diego tackled this problem and developed a prototype controller. More recently, industry has focused on implementing a commercially deployable controller for production networks. The hybrid control plane (see Figure 2) constantly monitors and analyzes system-wide network traffic from ToRs and virtual switches. When combined with historical analytics or scheduled events, it maintains an accurate view of bandwidth demand versus available link capacities and algorithmically computes the optimal circuit topology configuration to serve the needs of the network at any given time. Algorithms ensure that high bandwidth, highly persistent traffic is served by circuit-switched OCS links, while bursty and lower demand traffic is served by packet-switched links.
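As a rough illustration of the topology computation, the Python sketch below assigns optical circuits to the ToR pairs with the highest measured demand. It is a simple greedy heuristic, not the algorithm used by Helios or by any commercial controller. The function name, the one-OCS-port-per-ToR default, and the 5-Gbps offload threshold are all assumptions.

```python
from collections import defaultdict

def plan_circuits(demand_gbps, ports_per_tor=1, min_demand_gbps=5.0):
    """Greedily pick ToR pairs to connect through the optical circuit switch.

    demand_gbps maps (src_tor, dst_tor) to measured demand in Gbps.
    Both directions of a pair are summed because a circuit serves both.
    """
    pair_demand = defaultdict(float)
    for (src, dst), gbps in demand_gbps.items():
        if src != dst:
            pair_demand[tuple(sorted((src, dst)))] += gbps

    free_ports = defaultdict(lambda: ports_per_tor)
    circuits = []
    # Highest demand first; ties broken by name for a deterministic result.
    for (a, b), gbps in sorted(pair_demand.items(), key=lambda kv: (-kv[1], kv[0])):
        if gbps < min_demand_gbps:
            break  # remaining pairs stay on the packet network
        if free_ports[a] > 0 and free_ports[b] > 0:
            free_ports[a] -= 1
            free_ports[b] -= 1
            circuits.append((a, b))
    return circuits

# Made-up demand matrix for four ToRs.
demand = {
    ("tor1", "tor2"): 30.0,
    ("tor2", "tor1"): 5.0,
    ("tor2", "tor3"): 20.0,
    ("tor3", "tor4"): 8.0,
    ("tor1", "tor4"): 1.0,
}
print(plan_circuits(demand))
```

With one optical port per ToR, the tor2-tor3 demand stays on the packet network because tor2's port is already committed to the larger tor1-tor2 demand. A production controller would also weigh historical patterns and scheduled events, as described above.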

As the demand changes throughout the network, new optimal circuit topology configurations are identified and orchestrated over and over again via the respective packet- and circuit-switch managers that coordinate with their switches via southbound APIs (OpenFlow, TL1, or any other vendor-specific interface). To minimize the Layer 2/3 network convergence times during topology changes, the hybrid packet OCS controller ensures that interface-change events are flooded throughout the network before and after any new topology change at the OCS layer.
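One way to read the sequencing described above is sketched below in Python. The `notify` and `reconfigure_ocs` callables are hypothetical stand-ins for whatever southbound interface a given deployment uses; they do not correspond to a real OpenFlow or TL1 API. The interpretation that "before" means announcing links going down and "after" means announcing links coming up is also an assumption.

```python
def apply_topology(old_circuits, new_circuits, notify, reconfigure_ocs):
    """Move from one circuit topology to another in three ordered steps."""
    old, new = set(old_circuits), set(new_circuits)
    removed = sorted(old - new)
    added = sorted(new - old)

    # 1. Tell the packet network which links are about to disappear.
    for link in removed:
        notify("link-down", link)
    # 2. Change the cross-connects at the OCS layer.
    reconfigure_ocs(removed, added)
    # 3. Announce the new links once the circuits are in place.
    for link in added:
        notify("link-up", link)
    return removed, added

# Record the order of operations with simple in-memory stubs.
log = []
apply_topology(
    old_circuits=[("tor1", "tor2")],
    new_circuits=[("tor2", "tor3")],
    notify=lambda event, link: log.append((event, link)),
    reconfigure_ocs=lambda removed, added: log.append(("reconfigure", removed, added)),
)
for entry in log:
    print(entry)
```

Circuits that appear in both the old and new topologies are left untouched, so traffic on them is not disturbed.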

Economic benefits

Recent analysis shows that OCS connectivity offers far lower capex than the most recent Layer 2/3 packet platforms of leading vendors. Based on a singlemode optical-network deployment and assuming low cost commodity optical transceivers in the Layer 2/3 switches, the price per port of a fully provisioned optical circuit switch is more than 3 times lower at 10 Gbps and 10 times lower at 40 Gbps than a fully equipped packet-based switch.

Capex reduction, however, is just the tip of the iceberg. Further analysis reveals that opex reduction delivers yet another layer of cost savings. As noted earlier, energy efficiency is a major focus for large data centers. A 320-port 3D MEMS optical circuit switch consumes about 45 W in total, which works out to 150 times lower power consumption per port than a Layer 2/3 switch at 40 Gbps.
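The per-port arithmetic behind these figures can be checked with a few lines of Python. The packet-switch value is not stated in the article; it is only what the 150x ratio implies.

```python
OCS_TOTAL_WATTS = 45.0   # total draw of the optical circuit switch (from the article)
OCS_PORTS = 320          # port count (from the article)
POWER_RATIO = 150.0      # per-port advantage at 40 Gbps (from the article)

ocs_watts_per_port = OCS_TOTAL_WATTS / OCS_PORTS
# Implied by the ratio, not a measured figure.
implied_packet_watts_per_port = ocs_watts_per_port * POWER_RATIO

print(f"OCS: {ocs_watts_per_port:.2f} W per port")
print(f"Implied Layer 2/3 switch: {implied_packet_watts_per_port:.1f} W per 40-Gbps port")
```

That is about 0.14 W per optical port, against an implied 21 W or so per 40-Gbps packet-switched port.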

Potentially even more important than power consumption, however, is scalability. Because of its inherently passive nature, a 3D MEMS optical circuit switch can scale to support bit rates of 100 Gbps, 400 Gbps, or more as needed without any upgrade. By comparison, a typical packet-based fabric will require at least transceiver and linecard upgrades to support higher bit rates and possibly a complete forklift upgrade if the backplane doesn't support the added bandwidth. That could translate into a major network upgrade cost every three years. For the OCS network, no upgrades are required in the aggregation layer.

Move your elephant to save your mouse

The incidence of highly persistent traffic flows is on the increase in data centers. These elephants demand very high capacity networks and can negatively affect the delivery of small but equally important mouse data flows. Solving this problem in a cost-effective way is a key goal of many large data-center operators.

The hybrid packet-optical network provides flexible bandwidth on demand to support the capacity elasticity needed in modern data centers. This approach is becoming more practical with the availability of improved controller technology that almost any large data center can deploy.

DANIEL TARDENT is the vice president of marketing & PLM at CALIENT Technologies Inc., where he heads all product marketing and management for the company's 3D MEMS Optical Circuit Switching Systems. NATHAN FARRINGTON is the founder and CEO of Packetcounter Inc., a computer-network software and services company. Previously, he was a data-center-network engineer at Facebook.
