Network Processing Engines find applications in edge, core, and metro

By ZHENG WANG

Teradiant Networks Inc.

Technologies for packet processing have evolved significantly over the last decade. In the early days, routers were based mostly on general-purpose central processing units (CPUs). When Ethernet became popular in enterprise networks, custom application-specific integrated circuits (ASICs) were developed for switches to support rapidly increasing speeds. The advent of the Internet in the mid-1990s put data networking into high gear, and the demand for networking equipment soared. A number of semiconductor startups began developing programmable network processor units (NPUs), specifically designed for packet processing.

NPUs are customized programmable devices for performing specific packet-forwarding functions. Typical NPUs consist of programmable processor cores and associated memory. Because a single processor is often unable to provide the necessary processing power, tens or even hundreds of processor cores are used to perform individual tasks in parallel, in pipeline stages, or in a combination of the two.

Programmable NPUs have made inroads into the access equipment market. Access systems have to support a wide range of access technologies and functions, such as firewalling, switching, routing, tunneling, and deep packet inspection. The speed requirement, on the other hand, is relatively low, typically below 2 Gbits/sec. At such speeds, many programmable NPUs are able to cope with the processing needs and offer flexibility for different requirements.

New architecture maximizes performance, minimizes deployment time

For edge, metro, and core applications, however, where line cards typically operate at 10-40 Gbits/sec, current programmable NPUs lack the processing power needed to provide deterministic (guaranteed wire speed) performance. In addition, software development for programmable NPUs can be very complex. Most programmable NPUs have their own programming environments, including language, compiler, and debugger. Developing software for tens--or hundreds--of programmable processors in an NPU is not trivial and adds substantial complexity and cost to product development.

The network processing engine (NPE) is a new semiconductor architecture for packet processing. In an NPE, the standard networking operations are hard-wired, and an extensive set of configurable options is available for OEMs to customize the NPE for a wide variety of systems. These configurable options entail selecting values for a number of registers on the NPE, thereby eliminating microcoding altogether. The key advantages of this architecture are deterministic performance, scalability, and simplicity.
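To make the idea of configuration through register values more concrete, the following is a minimal sketch in Python. The register layout, field widths, protocol encoding, and all names here are hypothetical and chosen only for illustration; the article does not describe the actual register map of any NPE.

```python
from enum import IntEnum

# Hypothetical encoding of the per-channel protocol field (not from any datasheet).
class Protocol(IntEnum):
    IPV4 = 0
    IPV6 = 1
    MPLS = 2
    ATM = 3
    FRAME_RELAY = 4

PROTOCOL_MASK = 0x7        # assumed: bits 0-2 select the protocol
QOS_ENABLE_BIT = 1 << 3    # assumed: bit 3 turns QoS processing on
NUM_CHANNELS = 256         # assumed number of per-channel registers

def encode_channel_config(protocol: Protocol, qos_enabled: bool) -> int:
    """Pack the configuration choices into one register value."""
    return int(protocol) | (QOS_ENABLE_BIT if qos_enabled else 0)

def decode_channel_config(value: int) -> tuple[Protocol, bool]:
    """Unpack a register value back into its configuration choices."""
    return Protocol(value & PROTOCOL_MASK), bool(value & QOS_ENABLE_BIT)

# "Programming" the device is just choosing values: no microcode is written.
registers = [encode_channel_config(Protocol.IPV4, False)] * NUM_CHANNELS
registers[5] = encode_channel_config(Protocol.MPLS, True)

print(decode_channel_config(registers[5]))
```

The point of the sketch is the contrast with an NPU: the behavior of each hard-wired function is selected by a small, fixed set of fields rather than by code running on many processor cores.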

The configurable architecture of the NPE is meant to create a "best of both worlds" proposition by combining the performance of the ASIC design approach with the flexibility of the NPU approach--thereby targeting the dual requirements for guaranteed high bandwidth and minimal time-to-deployment that are common in virtually all network environments.

Selecting network processing silicon

Packet processing can be divided into two main functional blocks: the packet processing engine and the traffic manager. The packet processing engine is responsible for protocol-specific processing such as header manipulation, packet classification, route lookup, and packet marking. The traffic manager performs buffer management and packet scheduling.
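The division of labor between the two blocks can be sketched in a few lines of Python. This is an illustrative software model only, under assumed details: the route table, the classification rule, the queue depth, and the strict-priority scheduler are all hypothetical, and a real NPE implements these functions in hardware.

```python
import ipaddress
from collections import deque

# Hypothetical route table: prefix -> output port.
ROUTES = {
    ipaddress.ip_network("0.0.0.0/0"): "port0",
    ipaddress.ip_network("10.0.0.0/8"): "port1",
    ipaddress.ip_network("10.1.0.0/16"): "port2",
}

def route_lookup(dst: str) -> str:
    """Longest-prefix match: the most specific matching prefix wins."""
    addr = ipaddress.ip_address(dst)
    matches = [net for net in ROUTES if addr in net]
    return ROUTES[max(matches, key=lambda net: net.prefixlen)]

def classify(packet: dict) -> str:
    """Hypothetical rule: traffic to port 5060 is marked high priority."""
    return "high" if packet["dst_port"] == 5060 else "low"

def process_packet(packet: dict) -> dict:
    """Packet processing engine: route lookup, classification, marking."""
    return {**packet, "out_port": route_lookup(packet["dst"]), "cls": classify(packet)}

class TrafficManager:
    """Traffic manager: buffer management (tail drop) and scheduling."""

    def __init__(self, max_depth: int = 4):
        self.queues = {"high": deque(), "low": deque()}
        self.max_depth = max_depth

    def enqueue(self, packet: dict) -> bool:
        queue = self.queues[packet["cls"]]
        if len(queue) >= self.max_depth:
            return False  # buffer full: drop the packet
        queue.append(packet)
        return True

    def dequeue(self):
        # Strict priority: always serve the high-priority queue first.
        for cls in ("high", "low"):
            if self.queues[cls]:
                return self.queues[cls].popleft()
        return None

tm = TrafficManager()
tm.enqueue(process_packet({"dst": "10.2.0.1", "dst_port": 80}))
tm.enqueue(process_packet({"dst": "10.1.2.3", "dst_port": 5060}))
print(tm.dequeue())  # the high-priority packet leaves first
```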

To develop next-generation edge and core systems with higher performance and lower cost, designers of network processing silicon must meet a number of requirements:

Higher speed and higher density

Internet traffic continues to grow at a rate close to 100% annually. Next-generation networking silicon must continue its rapid improvement in performance and density in order to sustain such traffic growth. The improvement has to be achieved through better density and consolidation of functions, so that the total cost of the systems can continue to decrease.

Deterministic performance with full QoS features

Traditional telecom services such as telephony and private leased lines are built with TDM/SONET systems that offer very high levels of quality assurance. To replace these services with Internet-based offerings, OEMs must build routers and switches with comparable performance. Such performance must be maintained with full quality-of-service (QoS) features enabled.

Scalable solutions for edge and core

Next-generation networking silicon needs to be scalable so it can be used for multiple offerings--for both edge and core products, for example. When the same silicon is used for multiple products, features stay consistent across a product family and configuration keeps a similar look and feel, which simplifies management tasks and substantially reduces the total cost of development.

Rapid development and integration

Developers of networking silicon should use standard interfaces as much as possible so best-in-class components can work together without complex glue logic. Silicon vendors must also make the programming and configuration of the silicon as simple as possible to optimize the time spent on integration and the total cost of bringing a product to market.

Multi-service, multi-protocol support

The transition to Internet Protocol (IP)/Multiprotocol Label Switching (MPLS)-based networks will be a gradual process that may take many years. Until it is complete, other protocols, such as ATM and frame relay (FR), will co-exist with IP/MPLS. In addition, momentum for IPv6 will continue to grow, particularly in Europe and Asia Pacific. Today's networking silicon therefore must support IPv4 and MPLS as well as ATM, FR, IPv6, and protocols such as Layer 2 over MPLS that help this transition.

Applications for NPEs

One example of an NPE uses separate chips for packet processing and traffic management. The two chips come with three different densities: 10 Gbits/sec, 20 Gbits/sec, and 40 Gbits/sec, which support one 10-Gbit/sec port, two 10-Gbit/sec ports, or four 10-Gbit/sec ports, respectively.

In contrast with the design of conventional network processors, NPEs use a configurable approach to strike a fine balance between programmability and performance. NPEs should also support all the standard networking protocols found in today's edge and core networks.

A configurable architecture can allow optimized implementation for individual features and achieve an aggregated data rate of 40 Gbits/sec and a forwarding rate of 128 million packets per second--all with full functionality.
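The relationship between data rate and forwarding rate is simple arithmetic, sketched below in Python. The article does not state the packet size behind the 128-million figure; the 40-byte packet used here (a minimal TCP/IP packet with no payload, ignoring link-layer framing) is an assumption made only for illustration.

```python
def packets_per_second(line_rate_bps: float, packet_bytes: float) -> float:
    """Packets per second needed to fill a link with packets of one size."""
    return line_rate_bps / (packet_bytes * 8)

def bytes_per_packet(line_rate_bps: float, pps: float) -> float:
    """Packet size at which a given forwarding rate exactly fills the link."""
    return line_rate_bps / pps / 8

LINE_RATE_BPS = 40e9  # aggregate data rate from the article

# Assumed 40-byte packets: forwarding rate required for wire speed.
print(packets_per_second(LINE_RATE_BPS, 40) / 1e6, "Mpps")

# Packet size that 128 million packets per second corresponds to at 40 Gbits/sec.
print(bytes_per_packet(LINE_RATE_BPS, 128e6), "bytes")
```

Under that assumption, a forwarding rate of 128 million packets per second is enough to sustain wire speed even when every packet is of minimum size, which is the worst case for a forwarding engine.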

NPE in a core router

Speed, density and performance are the key requirements for core routers and switches. To further increase the density of systems, next-generation core routers have to support line cards with speeds of 10-40 Gbits/sec and forwarding rates of 64-128 million packets per second.

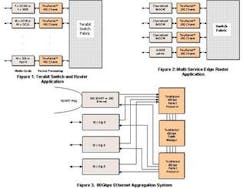

Figure 1 shows the block diagram of a core router based on an NPE. Each of the line cards in the system is built with the 4x10-Gbit/sec chipset and is capable of wire-speed packet processing and traffic management at the aggregated rate of 40 Gbits/sec. The chipset supports 256 physical ports or channels. The line cards, therefore, can be configured as 4 x OC-192 or 10 Gigabit Ethernet (GbE); 16 x OC-48; 64 x OC-12; or 40 x 1 GbE. This design will allow OEMs to build highly compact core systems with unprecedented density and low per-port cost.
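As a quick sanity check, the listed line-card configurations can be verified against the 40-Gbit/sec capacity and the 256-channel limit. The sketch below uses the standard SONET line rates (OC-n is n times 51.84 Mbits/sec) and nominal Ethernet rates; the function and variable names are illustrative only.

```python
OC1_BPS = 51_840_000             # SONET OC-1 line rate; OC-n is n times this
GBE_BPS = 1_000_000_000          # nominal 1 GbE rate
CARD_CAPACITY_BPS = 40 * GBE_BPS # 4x10-Gbit/sec chipset
MAX_CHANNELS = 256               # physical ports or channels per chipset

def oc(n: int) -> int:
    """Line rate of an OC-n interface in bits per second."""
    return n * OC1_BPS

# Configuration name -> (number of ports, rate per port in bits per second)
CONFIGS = {
    "4 x OC-192": (4, oc(192)),
    "4 x 10 GbE": (4, 10 * GBE_BPS),
    "16 x OC-48": (16, oc(48)),
    "64 x OC-12": (64, oc(12)),
    "40 x 1 GbE": (40, GBE_BPS),
}

def fits(ports: int, port_rate_bps: int) -> bool:
    """True if the configuration stays within channel count and capacity."""
    return ports <= MAX_CHANNELS and ports * port_rate_bps <= CARD_CAPACITY_BPS

for name, (ports, rate) in CONFIGS.items():
    print(f"{name}: {ports * rate / 1e9:.2f} Gbit/s, fits={fits(ports, rate)}")
```

Each SONET option sums to about 39.8 Gbits/sec and each Ethernet option to exactly 40 Gbits/sec, so every configuration fills the card without exceeding it.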

The traffic manager may need to handle a large number of virtual output queues for large systems built with a cluster of smaller systems and an external switch fabric. In this application, the chipset can support clusters as large as 40 Tbits/sec.

NPE in a multi-service edge switch

Although public networks are converging towards IP/MPLS, there is a tremendous amount of legacy equipment deployed in existing networks that is generating the bulk of the revenues today. Multi-service switches must support legacy protocols and their transition towards IP/MPLS. As edge systems, multi-service switches also need to provide tunneling functions, flexible classification, and bandwidth management on a per-customer basis.

Figure 2 depicts a block diagram for a multi-service switch based on a 20-Gbit/sec chipset. The system includes four 20-Gbit/sec line cards interconnected by a 160-Gbit/sec switch fabric. Each of the three access line cards has eight channelized OC-48 ports, and the uplink line card has either two OC-192 ports or two 10-GbE ports. Such a configuration supports 3:1 over-subscription between the access ports and uplink ports.
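The 3:1 figure follows directly from the port counts. The short Python sketch below works it out using standard SONET line rates (OC-n is n times 51.84 Mbits/sec); the variable names are illustrative, and the 10-GbE case uses the nominal 10-Gbit/sec rate.

```python
from fractions import Fraction

OC1_BPS = 51_840_000  # SONET OC-1 line rate; OC-n is n times this

def oc(n: int) -> int:
    """Line rate of an OC-n interface in bits per second."""
    return n * OC1_BPS

# Three access line cards, each with eight channelized OC-48 ports.
access_bps = 3 * 8 * oc(48)

# Uplink line card: two OC-192 ports, or alternatively two 10-GbE ports.
uplink_sonet_bps = 2 * oc(192)
uplink_10gbe_bps = 2 * 10_000_000_000

ratio_sonet = Fraction(access_bps, uplink_sonet_bps)
ratio_10gbe = Fraction(access_bps, uplink_10gbe_bps)

print(f"OC-192 uplink: {float(ratio_sonet):.2f}:1")
print(f"10-GbE uplink: {float(ratio_10gbe):.2f}:1")
```

With OC-192 uplinks the ratio is exactly 3:1; with 10-GbE uplinks it is marginally lower because 10 GbE is slightly faster than OC-192.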

To support legacy equipment, the chipset implements a range of network protocols, including IPv4, IPv6, MPLS, ATM, and FR. In addition, the chipset supports the latest Martini draft for transporting Ethernet, VLAN, ATM, and FR over a unified MPLS network. Any of the protocols can be configured dynamically to any of the ports or channels. The chipset also supports the deep packet classification, differentiated services policing, and per-customer queuing that are essential for edge applications.

NPE in an Ethernet aggregation system

Ethernet aggregation is an important application for metro networks in providing LAN transport services for enterprises. The systems built for this market segment must be low-cost, high-density, and high-performance.

Figure 3 illustrates a metro Ethernet aggregation system that uses two 40-Gbit/sec simplex packet processor chips and one 40-Gbit/sec simplex traffic manager chip operating in the switch mode to handle 40 Gbits/sec in and out. Therefore, it is possible to build an 80-Gbit/sec aggregation system without requiring a switch fabric.

The system aggregates 30 GbE ports into a 10-Gbit/sec uplink, and it provides full Layer-3 IP/MPLS routing and switching capabilities. On the access side, 30 GbE ports feed into three SPI-4 interfaces. On the trunk side, either a 10-GbE port or an OC-192 link is integrated with a SONET add/drop multiplexer (ADM) ring.

The system can be built using a traffic manager chip that provides loopback from ingress to egress. Because this design eliminates the switch fabric chipset and its associated interface chips, the system can be built in a more compact form and at a lower cost. The policing, shaping, and queuing capabilities enable unique bandwidth management features such as bandwidth-on-demand, fractional GbE, and differentiated services.
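A service such as fractional GbE depends on policing each customer to a contracted rate below the physical port speed. The article does not say which algorithm the chipset uses; the sketch below shows a token bucket, a commonly used policing technique, with hypothetical rate and burst values.

```python
class TokenBucketPolicer:
    """Simple token-bucket policer; one token represents one byte."""

    def __init__(self, rate_bps: float, burst_bytes: int):
        self.rate_bytes_per_sec = rate_bps / 8
        self.burst_bytes = burst_bytes
        self.tokens = float(burst_bytes)  # bucket starts full
        self.last_time = 0.0

    def allow(self, now: float, packet_bytes: int) -> bool:
        """Return True if the packet conforms to the contracted rate."""
        # Refill tokens for the elapsed time, capped at the burst size.
        elapsed = now - self.last_time
        self.last_time = now
        self.tokens = min(self.burst_bytes,
                          self.tokens + elapsed * self.rate_bytes_per_sec)
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False  # out of profile: drop (or mark) the packet

# Hypothetical customer: 100 Mbits/sec of service on a GbE port,
# with a burst allowance of two 1,500-byte packets.
policer = TokenBucketPolicer(rate_bps=100e6, burst_bytes=3000)

for t in (0.0, 0.0, 0.0, 0.001):
    print(t, policer.allow(t, 1500))
```

In this run the first two back-to-back packets fit within the burst allowance, the third is out of profile, and a packet arriving a millisecond later conforms again because the bucket has refilled.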

Zheng Wang is a principal architect at Teradiant Networks Inc., headquartered in San Jose, CA. He may be reached via the company's Web site at www.teradiant.com.