Optical transceivers for 16G Fibre Channel: Improving performance in storage-area networks

Cloud services and storage have exploded in popularity, enabled in part by virtualization technology at the server, the desktop, and in the storage-area network (SAN). For example, the multi-core processors used in today’s servers can manage a large number of data flows, and the memory speed of new storage technology, including solid-state disks (SSDs), can be used to create high-performance data center storage capable of running ever larger transaction-intensive applications.

However, the move towards virtualization can reveal interconnect or I/O bottlenecks. Network managers can initially work around such obstacles by adding bandwidth, deploying more host bus adaptors (HBAs) and switches, but this strategy leads to greater network complexity and higher management costs.

With as much as 86% of server workloads expected to be virtualized by 2018, storage equipment and components must keep pace with the need for I/O bandwidth in the SAN. Fibre Channel (FC) continues to be the dominant protocol used for networking virtualized servers to storage. An accelerating ramp of 8G FC deployment will soon give way to even faster 16G FC in director and top-of-rack storage switches, server HBAs, inter-switch links (ISLs), and FC RAID controllers.

16G FC, Physical Interface

The Fibre Channel FC-PI-5 “16G FC” standard, completed in 2009 by the INCITS T11.2 Task Group, defines the high-speed optical transceivers that will address the I/O bottlenecks that arise with increased channel utilization. These optical transceivers comply with the small form-factor pluggable (SFP) industry agreements INF-8074i (SFP) and SFF-8431 (SFP+) for mechanical and low-speed electrical specifications. They are also backwards compatible, which provides a simple migration path to better SAN performance.

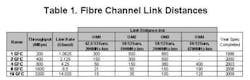

The standard defines 16G FC links that operate at a serial line rate of 14.025 Gbps. Thanks to a change in data encoding from the 8b/10b used in 8G FC to a more efficient 64b/66b, the throughput of a single optical interconnect doubles without the need to double the serial line rate. This more efficient encoding scheme enables the link distances needed in today’s data centers while still permitting the use of relatively inexpensive laser technology (Table 1). The standard defines the use of clock and data recovery (CDR) circuits to ensure good signal integrity and achieve link lengths that meet the physical needs of growing compute and storage installations.
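The encoding arithmetic can be checked directly. The short sketch below is illustrative only: the function name is ours, the 14.025-Gbps and 8.5-Gbps line rates and the 8b/10b and 64b/66b encodings come from the text, and the calculation ignores any framing overhead above the line code.

```python
from fractions import Fraction


def payload_rate_gbps(line_rate_gbps: str, data_bits: int, coded_bits: int) -> Fraction:
    """Return the payload bit rate (Gbps) left after line-coding overhead.

    line_rate_gbps is passed as a string so the decimal value is kept exact.
    """
    return Fraction(line_rate_gbps) * Fraction(data_bits, coded_bits)


# 8G FC: 8.5 Gbps line rate, 8b/10b (8 payload bits per 10 line bits).
rate_8g = payload_rate_gbps("8.5", 8, 10)

# 16G FC: 14.025 Gbps line rate, 64b/66b (64 payload bits per 66 line bits).
rate_16g = payload_rate_gbps("14.025", 64, 66)

print(f"8G FC payload rate:  {float(rate_8g):.1f} Gbps")
print(f"16G FC payload rate: {float(rate_16g):.1f} Gbps")
print(f"Throughput ratio:    {float(rate_16g / rate_8g):.2f}x")
print(f"Line-rate ratio:     {14.025 / 8.5:.2f}x")
```

The payload rate rises from 6.8 Gbps to 13.6 Gbps, exactly a factor of two, while the serial line rate rises by a factor of only 1.65.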

August 2011 marked production availability of the first 16G FC SFP transceiver (Figure 1). The device incorporates onboard CDR functionality in both the transmit and receive directions and operates over link distances up to 125 m using 50/125-µm OM4 multimode optical fiber.

Figure 1. A 16G FC SFP transceiver is now in production.

16G FC SFP transceivers can operate at 14.025 Gbps as well as at two legacy data rates, 8.5 Gbps (8G FC) and 4.25 Gbps (4G FC), easing the adoption of the new technology in existing SAN infrastructures. The transmit and receive paths can operate at different data rates, as is often required during Fibre Channel speed negotiation. Automatic speed negotiation that requires no user intervention is the key to providing backwards compatibility with lower-speed storage devices.
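The outcome of speed negotiation can be pictured as choosing the fastest rate both link partners support. The sketch below is a deliberate simplification, not the negotiation procedure defined in the Fibre Channel standards: the function name and the rate lists are illustrative, and the rate set given for the legacy 8G device is an assumption.

```python
from typing import Iterable, Optional


def highest_common_rate(local_rates: Iterable[float],
                        remote_rates: Iterable[float]) -> Optional[float]:
    """Return the fastest line rate (Gbps) supported by both ends, or None."""
    common = set(local_rates) & set(remote_rates)
    return max(common) if common else None


# Line rates of a 16G FC SFP, as given in the text.
SFP_16G_RATES = (14.025, 8.5, 4.25)

# Assumption: a legacy 8G FC device that supports 8G, 4G, and 2G FC.
LEGACY_8G_RATES = (8.5, 4.25, 2.125)

print(highest_common_rate(SFP_16G_RATES, SFP_16G_RATES))    # 16G peer
print(highest_common_rate(SFP_16G_RATES, LEGACY_8G_RATES))  # legacy 8G peer
```

Against another 16G port the link settles at 14.025 Gbps; against the assumed legacy device it falls back to 8.5 Gbps without operator involvement.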

SAN management made easier

The 16G FC SFP also offers digital diagnostic monitoring information (DMI) per the Small Form-Factor Agreement SFF-8472. This feature provides real-time monitoring information of the transceiver laser, receiver, and environmental conditions over a 2-wire serial interface (TWI).
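As an illustration of what the host does with this data, the sketch below decodes the five real-time readings from a copy of the module's diagnostic page. It assumes an internally calibrated module and the SFF-8472 layout at 2-wire address A2h (bytes 96 to 105); verify the offsets and scale factors against the revision of SFF-8472 your module claims. The function name is ours, and how the 256-byte page is read over the TWI is left to the host platform.

```python
import struct


def decode_dmi(a2_page: bytes) -> dict:
    """Decode real-time diagnostics from an SFF-8472 A2h page image.

    Assumes internal calibration: values are big-endian 16-bit words
    starting at byte 96 (temperature, Vcc, TX bias, TX power, RX power).
    """
    temp, vcc, bias, tx_pwr, rx_pwr = struct.unpack_from(">hHHHH", a2_page, 96)
    return {
        "temperature_c": temp / 256.0,   # signed, 1/256 degree C per LSB
        "vcc_v": vcc * 100e-6,           # 100 microvolts per LSB
        "tx_bias_ma": bias * 2e-3,       # 2 microamps per LSB
        "tx_power_mw": tx_pwr * 1e-4,    # 0.1 microwatt per LSB
        "rx_power_mw": rx_pwr * 1e-4,    # 0.1 microwatt per LSB
    }


# Synthetic page for demonstration only; these are not measured values.
page = bytearray(256)
struct.pack_into(">hHHHH", page, 96, 0x1E80, 33000, 3500, 5000, 4000)

for name, value in decode_dmi(bytes(page)).items():
    print(f"{name}: {value:.3f}")
```

A management application would typically poll these values and compare them with the alarm and warning thresholds the module also reports, which this sketch does not cover.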

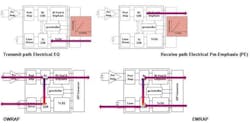

Some 16G FC SFP transceivers also offer additional features that ensure host interoperability and improve system fault isolation (Figure 2). For example, variable electrical input equalization (EQ) settings and electrical output pre-emphasis (PE) settings, controlled through the TWI, offer users additional flexibility in optimizing difficult system electrical channels. The system manager can select, in situ, the most appropriate SFP setting for a given interconnect port to optimize link performance.

Figure 2. Enhanced 16G FC SFP transceiver features.

To further assist in link optimization and remote troubleshooting, the user can also configure the SFP to internally loop local traffic back to the host ASIC via the SFP electrical output (EWRAP). Similarly, the SFP can loop remotely generated optical data back to the port of origin via the SFP optical output (OWRAP).

EWRAP and OWRAP give system architects options for diagnosing storage networks and isolating potential fault locations. Both WRAP functions pass the signal through the internal CDR to remove signal degradation and jitter before retransmission. An optional pass-through function is also available to simultaneously pass the wrapped information through the module.

It starts with performance

The benefits of migrating to 16G FC start with performance. Higher-speed interconnects deliver faster data transfers, giving highly demanding, data-intensive applications a simple migration path across the data center.

Migrating from 8G FC to the even faster and more efficient 16G FC optical links along with virtualized servers will reduce the total number of managed links/cables in the SAN. Link consolidation combined with the enhanced link diagnostic features available in the new SFPs will simplify network maintenance. Reducing the overall number of devices (switches/HBAs/etc.) can further reduce IT costs.

For large enterprise data centers or SANs, the improvement in watts per gigabit per second should not be overlooked. Migrating from 4G or 8G infrastructure to 16G FC can have a significant impact on electricity costs while helping to meet corporate “Green” targets.

Data center and SAN connectivity is a major focus area for optical product development initiatives, including high-density as well as high-speed serial modules. The new 16G FC SFPs will enable a new generation of high-density switches, ISLs, and HBAs. Looking ahead, the T11 Task Group is working on the next Fibre Channel speed, 32G FC (FC-PI-6).

New storage-intensive applications and the growth of cloud computing and server virtualization will continue to drive bandwidth and convergence in the SAN/data center. This evolution opens up new opportunities for innovation.

Robert Hannah is applications manager and Randy Clark is product manager at Avago Technologies (www.avagotech.com).