Evolution of IXP Architectures in an Era of Open Networking Innovation

Internet exchange points (IXPs) play a key role in the internet ecosystem. Worldwide, there are more than 400 IXPs in over 100 countries, the largest of which carry peak data rates of almost 10 Tbps and connect hundreds of networks. IXPs offer a neutral shared switching fabric where clients can exchange traffic with one another once they have established peering connections. This means that the client value of an IXP increases with the number of clients connected to it.

Simply speaking, an internet exchange point can be regarded as a big Layer 2 (L2) switch. Each client network connecting to the IXP connects one or more of its routers to this switch via Ethernet interfaces. Routers from different networks can establish peering sessions by exchanging routing information via Border Gateway Protocol (BGP) and then send traffic across the Ethernet switch, which is transparent to this process. Please refer to Figure 1 for different peering methods.

IXPs allow operators to interconnect n client networks locally across their switch fabrics. Connectivity then scales with n (e.g., one 100-Gbps connection from each network to the switch fabric) rather than scaling with n² connections, as is the case when independent direct peering is used (e.g., one 10-Gbps connection to each of n peering partners). This leads to a flatter internet, improves bandwidth utilization, and reduces the cost and latency of interconnections, including in data center interconnect (DCI) applications. To avoid the cumbersome setup of bilateral peering sessions, most IXPs today operate route servers, which simplify peering by allowing IXP clients to peer with other networks via a single (multilateral) BGP session to a route server.
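The scaling argument above can be checked with a few lines of arithmetic. The following is a minimal sketch, assuming a full mesh in which every pair of networks shares one direct link (or one bilateral BGP session) and an IXP in which each network uses a single port; the function names and the choice of two route servers are illustrative assumptions, not taken from any IXP's tooling.

```python
def full_mesh_links(n: int) -> int:
    """Direct links (or bilateral BGP sessions) for n fully meshed networks."""
    return n * (n - 1) // 2


def ixp_links(n: int) -> int:
    """Links needed when each network connects once to the shared fabric."""
    return n


def route_server_sessions(n: int, route_servers: int = 1) -> int:
    """BGP sessions when each network peers only with the route servers."""
    return n * route_servers


if __name__ == "__main__":
    for n in (10, 100, 500):
        print(
            f"{n:>4} networks: {full_mesh_links(n):>7} pairwise links/sessions, "
            f"{ixp_links(n):>4} IXP ports, "
            f"{route_server_sessions(n, 2):>5} sessions to 2 route servers"
        )
```

For 100 networks, the full mesh needs 4,950 pairwise links, whereas the IXP needs 100 ports, which is the difference between quadratic and linear growth.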

IXPs can be grouped into not-for-profit (e.g., industry associations, academic institutions, government agencies) and for-profit organizations. Their business models depend on regulation and other factors. Many European IXPs are not-for-profit organizations that rely, for example, on membership fees. In the U.S., most IXPs are for-profit organizations. All IXP operators, while still providing public neutral peering services, may also provide commercial value-added services (VAS), such as security, access to cloud services, transport services, synchronization, and caching.

Over the past few years, content delivery networks (CDNs) have been major contributors to the traffic growth of IXPs. IXPs are critical infrastructure for CDNs to keep their transport costs under control. This is facilitated by putting content caches into the same locations as IXPs put their access switches. Often these locations are neutral colocation (colo) data centers (DCs).

Current IXP infrastructure

While early IXPs in the 1990s were based on Fiber Distributed Data Interface (FDDI) or Asynchronous Transfer Mode (ATM), today the standard interconnectivity service is based on Ethernet, as mentioned above. The L2 IXP switch fabric itself has also evolved from simple Ethernet switches in just one location, connected via a standard local area network, to Internet Protocol/Multiprotocol Label Switching (IP/MPLS) switches distributed over multiple sites, which require wide area network (WAN) connectivity over optical fiber. Utilizing an IP/MPLS switch fabric for the distributed L2 switching function provides better scalability and is more suitable for WAN connectivity. The distribution of the IXP switch fabric over several locations facilitates access for clients and improves resiliency. In most cases, these locations are in metro or regional areas, but extending the IXP fabric to a national or global scale is also possible.

Consequently, with more locations and increasing bandwidth, a flexible and scalable high-performance connectivity network becomes an important strategic asset for IXP operators. For larger IXPs, today’s locations are connected via high-capacity DWDM WAN links – typically n x 100 Gigabit Ethernet (GbE) today, with higher data rates like n x 400GbE in preparation. Client routers connect to the IXP switch fabric with Ethernet interfaces of 1/10/100GbE today and potentially 25GbE and 50GbE in the future.

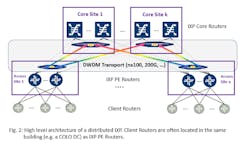

Figure 2 shows a high-level standard IP/MPLS architecture of a distributed IXP. Client routers connect to IXP provider edge (PE) routers at different sites (e.g., in colo DCs) via standard 1/10/100GbE interfaces. (Note: Depending on the business model, the colocation services may be provided by the IXP as VAS or may be provided by an independent colo DC provider.) The PE routers are connected to core provider (P) routers with high-capacity links, often n x 100GbE DWDM. Detailed architectures are highly customer specific and depend on many factors such as availability and ownership of optical fiber, topology, bandwidth, resiliency and latency requirements, etc.

It should be noted that although IP/MPLS-based L2 switch fabrics are mainly used today, there are alternative approaches such as Virtual Extensible Local Area Network (VXLAN) available that are based on more recent DC connectivity methods. It may well be that these methods, which do not change the basic architecture topology, will be deployed more often in the future.

It may also be worth mentioning that to provide better resiliency of the IXP infrastructure, especially for high-capacity interfaces such as 100GbE, photonic cross-connects (PXCs) are increasingly being used between client and PE routers. In case of failure or scheduled maintenance, the PXC can switch over from the client router to a backup PE router.

Innovation at IXPs: Disaggregation, SDN, NFV, network automation

Disaggregation, software-defined networking (SDN), network function virtualization (NFV), and network automation, as already applied in the DC-centric networks of the big internet content providers (ICPs), are now increasingly being used in telco networks and IXPs as well. As IXP networks are typically more localized than telco networks and must cope with less legacy infrastructure and fewer legacy services, they may be an ideal place to introduce new networking concepts.

Disaggregation and openness speed innovation. Disaggregation, when applied to the IXP router and transport infrastructure, provides horizontal scalability, ensuring that even unexpected growth can be easily handled without the need for pre-planning of large chassis-based system capacity or forklift upgrades.

On top of that, in a disaggregated network, innovation can be driven very efficiently, as network functions are decoupled from each other and can evolve at their own speeds. This enables IXPs to introduce additional steps in interface capacity (e.g., 400GbE or 1 Terabit Ethernet) as well as single-chip switching capacities (12.8T, 25T, 50T per chip) and functionalities (e.g., programming protocol-independent packet processors [P4]) seamlessly. At the same time, the underlying electronic and photonic integration will drastically reduce power consumption and space requirements as well as the number of cables to be installed.

Openness breaks the dependency on a single vendor and enables network operators to leverage innovation from the whole industry, not just from a single supplier.

Disaggregating the DWDM layer: Open line system

The underlying optical layer combines the latest optical innovation and end-to-end physical layer automation with an open networking approach that seamlessly ties into a Transport SDN control layer. There are a number of industry forums and associations driving the vision of open application programming interfaces (APIs) and interworking further, including the Telecom Infra Project’s (TIP’s) Open Optical & Packet Transport project group, which is leading the alignment on information models, and the Open ROADM (reconfigurable optical add/drop multiplexer) Multi-Source Agreement project, as well as other standards development organizations such as the International Telecommunication Union’s Telecommunication Standardization Sector (ITU-T), which is working to ensure physical layer interworking.

In addition, advances in open optical transport system architectures are creating ultra-dense, ultra-efficient IXP applications, including innovative 1 rack unit (1RU) modular open transport platforms for cloud and data center networks that can be equipped as muxponder terminal systems and as open line system (OLS) optical layer platforms. Purpose-built for interconnectivity applications, these disaggregated platforms offer high density, flexibility, and low power consumption. Designed to meet the scalability requirements of network operators now and into the future, innovations in OLSs include a pay-as-you-grow disaggregated approach that enables the lowest startup costs, reduced equipment sparing costs, and cost-effective scalability.

Many IXPs are deploying open optical transport technology to scale capacity while reducing cost, floor space, and power consumption. Recent examples include the Moscow Internet Exchange (MSK-IX), France-IX, Swiss-IX, ESpanix, Berlin Commercial Internet Exchange (BCIX), and the Stockholm Internet eXchange (STHIX).

Disaggregating the Router/Switch: White Boxes, Hardware-independent NOS, SDN, and VNFs

Router disaggregation is well established inside DCs. Instead of using large chassis-based routers, highly scalable leaf-spine switch fabrics are being built with white box L2/L3 switches and controlled by SDN. Using white boxes together with a hardware-independent and configurable network operating system (NOS) provides greater flexibility and enables IXP operators to select only those features that they really need.
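To make the horizontal scaling of a leaf-spine fabric concrete, here is a minimal sizing sketch. It assumes a simple two-tier design in which every leaf has exactly one uplink to every spine; the class name, field names, and port counts are hypothetical values chosen for illustration, not figures from any particular IXP.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class LeafSpineFabric:
    spines: int             # number of spine switches
    spine_ports: int        # ports per spine, one per attached leaf
    leaf_client_ports: int  # client-facing ports per leaf
    client_port_gbps: int   # speed of each client-facing port
    uplink_gbps: int        # speed of each leaf-to-spine uplink

    @property
    def max_leaves(self) -> int:
        # Each leaf consumes one port on every spine.
        return self.spine_ports

    @property
    def max_client_ports(self) -> int:
        return self.max_leaves * self.leaf_client_ports

    @property
    def oversubscription(self) -> float:
        # Client-facing capacity divided by uplink capacity, per leaf.
        downlink = self.leaf_client_ports * self.client_port_gbps
        uplink = self.spines * self.uplink_gbps
        return downlink / uplink

    def with_spines(self, spines: int) -> "LeafSpineFabric":
        # Scaling out: add spines instead of replacing a chassis.
        return replace(self, spines=spines)


if __name__ == "__main__":
    fabric = LeafSpineFabric(
        spines=4, spine_ports=32, leaf_client_ports=48,
        client_port_gbps=10, uplink_gbps=100,
    )
    print(fabric.max_leaves, fabric.max_client_ports, fabric.oversubscription)
    print(fabric.with_spines(8).oversubscription)
```

In this model, adding spine switches lowers the oversubscription ratio without touching the existing leaves, which is the property that removes the need for forklift upgrades.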

Carrier-class disaggregated router/switch white boxes are distinguished by capabilities that include environmental hardening, enhanced synchronization, and high-availability features, with carrier-class NOSs that are hardware independent. To ensure the resiliency required, these platforms rely on proven and scalable IP/MPLS software capabilities and support for IP/MPLS and segment routing as well as data center-oriented protocols such as VXLAN and Ethernet Virtual Private Network (EVPN). Additional services such as security services that in the past may have required dedicated modules in the router chassis or standalone devices can be supported with third-party virtual network functions (VNFs) hosted on the white boxes or on standard x86 servers.

Packet switching functionality can be extended with P4. P4 can be used to program how switches process packets (i.e., to define the headers and fields of the protocols that will need to be processed). This brings flexibility to hardware, enabling support for new protocols without waiting for new chips to be released or, as is the case with OpenFlow, for new versions of the protocol to be specified.
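The idea of protocol independence can be illustrated without writing actual P4. The following Python sketch is only an analogy, not P4 code and not how a switch pipeline is implemented: headers are described as data (field name and bit width), and one generic parser handles any header described this way. The names `HeaderSpec` and `parse_header` are hypothetical.

```python
from typing import Dict, List, Tuple

# A header is described as data: (field name, width in bits).
HeaderSpec = List[Tuple[str, int]]

ETHERNET: HeaderSpec = [("dst", 48), ("src", 48), ("ether_type", 16)]
VLAN: HeaderSpec = [("pcp", 3), ("dei", 1), ("vid", 12), ("ether_type", 16)]


def parse_header(
    spec: HeaderSpec, data: bytes, offset: int = 0
) -> Tuple[Dict[str, int], int]:
    """Extract the fields of `spec` from `data`, starting at byte `offset`.

    Returns the field values and the offset of the next header.
    """
    total_bits = sum(width for _, width in spec)
    if total_bits % 8 != 0:
        raise ValueError("header length must be a whole number of bytes")
    nbytes = total_bits // 8
    if len(data) - offset < nbytes:
        raise ValueError("packet too short for this header")
    value = int.from_bytes(data[offset:offset + nbytes], "big")
    fields: Dict[str, int] = {}
    remaining = total_bits
    for name, width in spec:
        remaining -= width
        fields[name] = (value >> remaining) & ((1 << width) - 1)
    return fields, offset + nbytes


if __name__ == "__main__":
    # Broadcast frame with an 802.1Q tag (VLAN 100) carrying IPv4.
    frame = bytes.fromhex("ffffffffffff" "020000000001" "8100" "0064" "0800")
    eth, off = parse_header(ETHERNET, frame)
    vlan, off = parse_header(VLAN, frame, off)
    print(hex(eth["ether_type"]), vlan["vid"], hex(vlan["ether_type"]))
```

Supporting a new protocol in this toy model means adding another `HeaderSpec`, with no change to the parser itself, which mirrors the flexibility described above.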

The comprehensive SDN/NFV management functionality enables IXP operators to introduce advanced features such as pay-per-use, sophisticated traffic engineering, or advanced blackholing for distributed denial of service (DDoS) mitigation.
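As a rough illustration of the blackholing feature, the sketch below models only the basic community-triggered variant, which more advanced schemes refine. It assumes the route server recognizes the well-known BLACKHOLE community from RFC 7999 (65535:666) and rewrites the next hop to an address whose traffic the fabric discards. The `Route` class, the `apply_blackholing` function, and the next-hop address (taken from a documentation range) are hypothetical.

```python
from dataclasses import dataclass, replace
from ipaddress import IPv4Address, IPv4Network
from typing import FrozenSet, Tuple

Community = Tuple[int, int]

# Well-known BLACKHOLE community defined in RFC 7999.
BLACKHOLE: Community = (65535, 666)

# Hypothetical next hop whose traffic the IXP fabric drops.
BLACKHOLE_NEXT_HOP = IPv4Address("192.0.2.66")


@dataclass(frozen=True)
class Route:
    prefix: IPv4Network
    next_hop: IPv4Address
    communities: FrozenSet[Community] = frozenset()


def apply_blackholing(route: Route) -> Route:
    """Point routes tagged with BLACKHOLE at the discard next hop."""
    if BLACKHOLE in route.communities:
        return replace(route, next_hop=BLACKHOLE_NEXT_HOP)
    return route


if __name__ == "__main__":
    victim = Route(
        prefix=IPv4Network("198.51.100.7/32"),
        next_hop=IPv4Address("203.0.113.10"),
        communities=frozenset({BLACKHOLE}),
    )
    normal = Route(
        prefix=IPv4Network("198.51.100.0/24"),
        next_hop=IPv4Address("203.0.113.10"),
    )
    print(apply_blackholing(victim).next_hop)
    print(apply_blackholing(normal).next_hop)
```

A client under attack would tag the announcement for the targeted prefix, and only traffic toward that prefix would be discarded; untagged routes pass through unchanged.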

Conclusion

IXPs are an integral and important part of the internet ecosystem. They provide a way for various networks to exchange traffic locally, resulting in a flatter and faster internet. To stay competitive, IXPs are also undergoing a transition from standard Ethernet/IP networks to cloud technologies, such as leaf-spine switching fabrics and DCI-style optical connectivity, to reduce total cost of ownership, increase automation, and facilitate the offering of VAS in addition to basic peering services.

Harald Bock is vice president, network and technology strategy, at Infinera.